I’ve been doing a lot of rigging and figured I could write about my thoughts.

I started learning 3D modelling and animation via Miku Miku Dance, a ten-year-old Japanese program. In the West, the community is dominated by teenagers. Any serious documentation is in Japanese, which I neither read nor speak. Rigging tools are limited: the local axes of each bone are set to the global axes in the default pose, and there are IK bones and copy rotation/copy translation tools. That’s it.

At first, like probably everyone, I thought about bones as being like bones. That’s the paradigm. A bone is a long thing that moves your model, so you put them where bones are.

The first time that changed was when I realized that the end points of bones don’t matter. Build a full FK rig without any constraints, move all the tails to arbitrary positions, and animate in global orientation: the tails turn out to be meaningless. So I stopped thinking about bones as bones and started thinking about them as joints. It’s not the forearm bone, it’s the elbow bone. The center of rotation is what matters, not the tail.
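A tiny 2D sketch of that point, under simplifying assumptions: one bone, one fully weighted vertex, and the rotation given as a single global angle. The `Bone` class and `deform` function are hypothetical illustrations, not MMD or Blender API. The deformed vertex depends only on the head (the center of rotation) and the angle, so two bones that share a head but have wildly different tails give the same result.

```python
import math
from dataclasses import dataclass


@dataclass
class Bone:
    head: tuple  # center of rotation
    tail: tuple  # end point; deform() below never reads it


def deform(vertex, bone, angle):
    """Rigidly rotate a fully weighted vertex by `angle` radians around the bone's head."""
    hx, hy = bone.head
    dx, dy = vertex[0] - hx, vertex[1] - hy
    c, s = math.cos(angle), math.sin(angle)
    return (hx + c * dx - s * dy, hy + s * dx + c * dy)


elbow_a = Bone(head=(1.0, 0.0), tail=(2.0, 0.0))
elbow_b = Bone(head=(1.0, 0.0), tail=(-5.0, 3.0))  # same head, arbitrary tail
v = (2.0, 0.0)

print(deform(v, elbow_a, math.pi / 2))
print(deform(v, elbow_b, math.pi / 2))  # identical to the line above
```

In a real rig the tail also defines the bone’s local axes, which is why it only stops mattering once you animate in global orientation.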

When I started rigging in Blender, I went back to thinking about them as bones for a while, because Blender makes you give them tails, and those tails are actually useful! But that was dumb of me. They’re still not bones. I started to discover this while examining the Pitchipoy facial rig in Rigify.

Bones aren’t anything. They’re groups of deforming vertices. In Blender, there’s an extra little pocket where you can store information: the tail, which is just three little floats. When I was writing shaders, I started to realize I could use textures to store any kind of data I wanted, and that there’s no difference between addUV and vertex color; it’s just how you use them. In the same way, I realized that you can use that tail vector to store any kind of information you want. It doesn’t matter where the tail points; what matters is what information the tail vector contains, and how you use that information.
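As a sketch of “the tail is just a place to put data”, here is one hypothetical encoding: pack a direction and a positive scalar (say, how far some lump should slide) into a single tail position, then read both back. `pack_tail` and `unpack_tail` are made-up names, and the head and tail are plain tuples standing in for a bone’s head and tail positions.

```python
import math


def pack_tail(head, direction, amount):
    """Hypothetical encoding: tail = head + normalized(direction) * amount."""
    if amount <= 0:
        # A zero-length bone has no direction left to decode.
        raise ValueError("amount must be positive so the bone keeps a nonzero length")
    norm = math.sqrt(sum(d * d for d in direction))
    return tuple(h + d / norm * amount for h, d in zip(head, direction))


def unpack_tail(head, tail):
    """Recover (unit direction, amount) from the head-to-tail offset."""
    offset = [t - h for t, h in zip(tail, head)]
    amount = math.sqrt(sum(o * o for o in offset))
    direction = tuple(o / amount for o in offset)
    return direction, amount


head = (0.0, 0.0, 1.0)
tail = pack_tail(head, (0.0, 2.0, 0.0), 0.25)
print(tail)                     # (0.0, 0.25, 1.0)
print(unpack_tail(head, tail))  # ((0.0, 1.0, 0.0), 0.25)
```

Nothing here cares whether the tail “looks like” a bone; it is three floats read back by whatever logic you attach to them.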

But it’s hard to get rid of metaphors completely. So I still think of bones as something. I just think of them as lumps of flesh.

People will tell you to study anatomy, watch how the femur moves in the socket. But when we watch an animation, we don’t see the femur moving in the socket. We see the flesh that is pulled by that femur, constrained by adjoining flesh. That flesh isn’t anchored to any socket. It maintains its volume. It slides around. It doesn’t rotate around a fixed point like a bone does.

How do oblique muscles move? What point do they rotate around? They don’t rotate around a point. They connect points.
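One way to model “a lump that connects two points and keeps its volume” is to derive its shape from the two anchors instead of from a pivot. The sketch below is an assumption-laden illustration, similar in spirit to a stretch-to-style constraint with volume preservation, not the author’s setup: `connect` is a hypothetical helper that scales the lump along the anchor-to-anchor line and thins both side axes so that the total volume stays constant.

```python
import math


def connect(anchor_a, anchor_b, rest_length):
    """Shape a lump from the two points it connects rather than from a pivot.

    Returns (length_scale, side_scale). The lump stretches along A->B by
    length_scale and shrinks on both perpendicular axes by side_scale, so
    length_scale * side_scale**2 == 1 (constant volume).
    """
    length = math.dist(anchor_a, anchor_b)
    length_scale = length / rest_length
    side_scale = 1.0 / math.sqrt(length_scale)
    return length_scale, side_scale


stretch, side = connect((0.0, 0.0, 0.0), (0.0, 0.0, 4.0), rest_length=1.0)
print(stretch, side)  # 4.0 0.5
```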

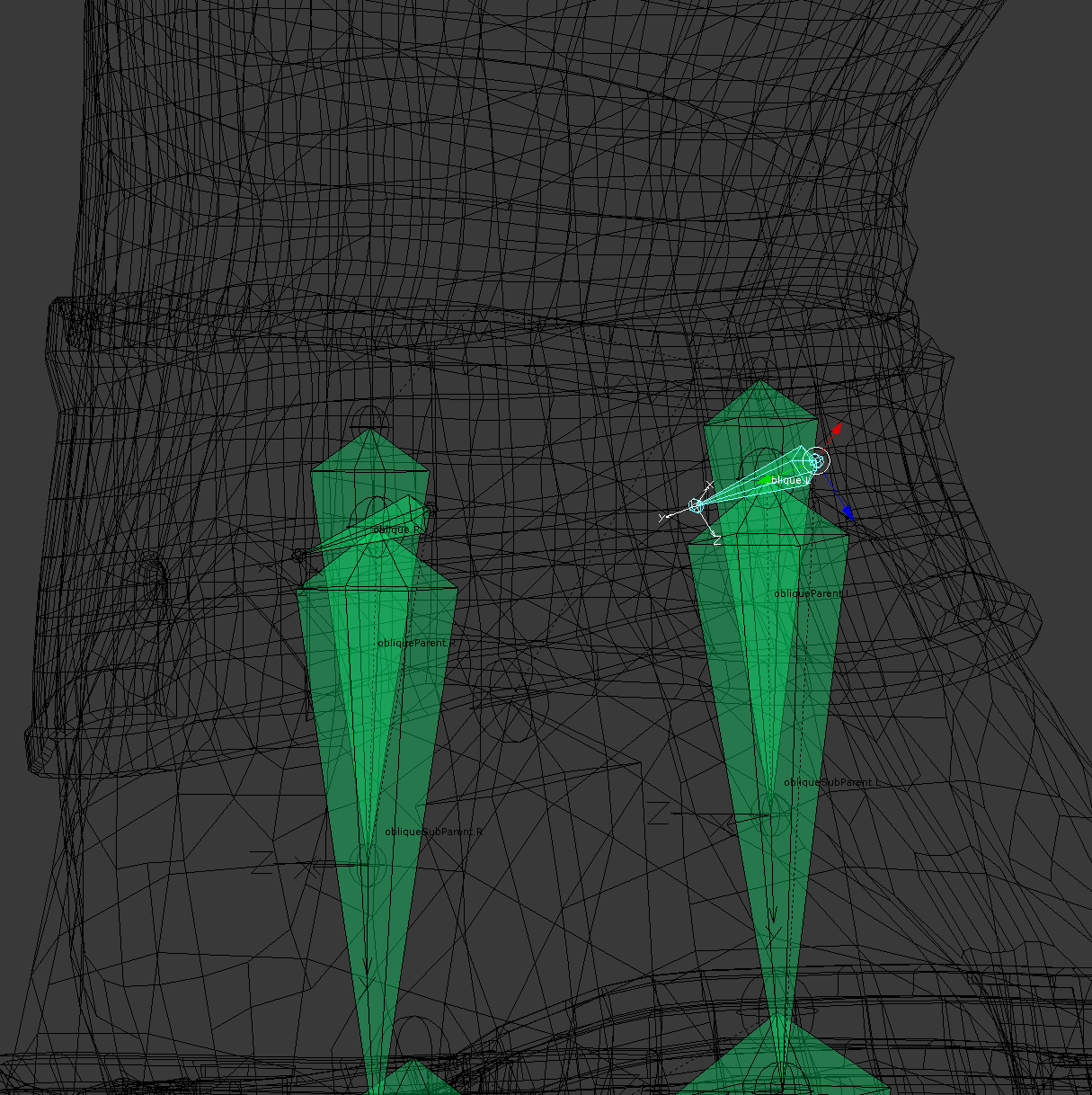

I no longer choose deforming bones for their centers of rotation. Will that selected oblique deformer move in any useful way according to its chosen axis? No. I chose the axis to tell automatic weights the general shape of the lump of flesh I want to animate. That’s how I place deforming bones now.
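To show why the head-to-tail segment describes the *shape* of the weighted region, here is a deliberately crude stand-in for automatic weights: a linear falloff by distance to the bone segment. This is a swapped-in technique for illustration only; Blender’s automatic weights use bone heat, not this formula, and `falloff_weight` and `radius` are hypothetical.

```python
import math


def point_segment_distance(p, a, b):
    """Distance from point p to the segment a-b (works in 2D or 3D)."""
    ab = [bj - aj for aj, bj in zip(a, b)]
    ap = [pj - aj for aj, pj in zip(a, p)]
    denom = sum(c * c for c in ab)
    if denom == 0:
        raise ValueError("segment has zero length")
    # Project p onto the infinite line, then clamp to the segment's ends.
    t = max(0.0, min(1.0, sum(x * y for x, y in zip(ap, ab)) / denom))
    closest = [aj + t * c for aj, c in zip(a, ab)]
    return math.dist(p, closest)


def falloff_weight(p, head, tail, radius):
    """HYPOTHETICAL weight: 1.0 on the segment, fading linearly to 0.0 at `radius`."""
    d = point_segment_distance(p, head, tail)
    return max(0.0, 1.0 - d / radius)


vertex = (0.5, 0.5)
print(falloff_weight(vertex, (0.0, 0.0), (1.0, 1.0), radius=1.0))  # oblique segment: 1.0
print(falloff_weight(vertex, (0.0, 0.0), (1.0, 0.0), radius=1.0))  # horizontal segment: 0.5
```

Laying the segment along the diagonal lump of flesh claims that lump; whether the bone would rotate usefully about its own axis never enters into it.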

When I place deformers, I test them out. Scaling them is a good way to see their margins. Then I put the 3D cursor down and start examining different centers of rotation. When I find a good center of rotation, I place a non-deforming bone there (its tail doesn’t matter yet) and parent the deformer to it. This means that my model changes shape: its obliques don’t just rotate, they expand out of the body. Some people tell you not to do that, because they’re stuck thinking about bones as bones. Bones don’t expand out of the body, but lumps of flesh do.
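The effect of parenting a deformer to a pivot somewhere else can be checked with plain 2D geometry. In this sketch (the coordinates and `rotate_about` are hypothetical), rotating the parent about a pivot at the deformer’s own head only turns the lump, while rotating it about an offset pivot also carries the lump along an arc. The distance traveled is the chord length 2·r·sin(θ/2), where r is the pivot-to-head distance.

```python
import math


def rotate_about(point, pivot, angle):
    """Rotate a 2D point by `angle` radians around `pivot`."""
    px, py = pivot
    dx, dy = point[0] - px, point[1] - py
    c, s = math.cos(angle), math.sin(angle)
    return (px + c * dx - s * dy, py + s * dx + c * dy)


deformer_head = (1.0, 0.0)
angle = math.radians(30)

# Parent pivot exactly at the deformer's head: the lump only turns in place.
same = rotate_about(deformer_head, (1.0, 0.0), angle)
# Parent pivot somewhere else (wherever the cursor test looked good): the lump turns AND travels.
offset = rotate_about(deformer_head, (0.0, -1.0), angle)

print(math.dist(deformer_head, same))    # 0.0
print(math.dist(deformer_head, offset))  # chord length 2 * r * sin(angle / 2)
```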

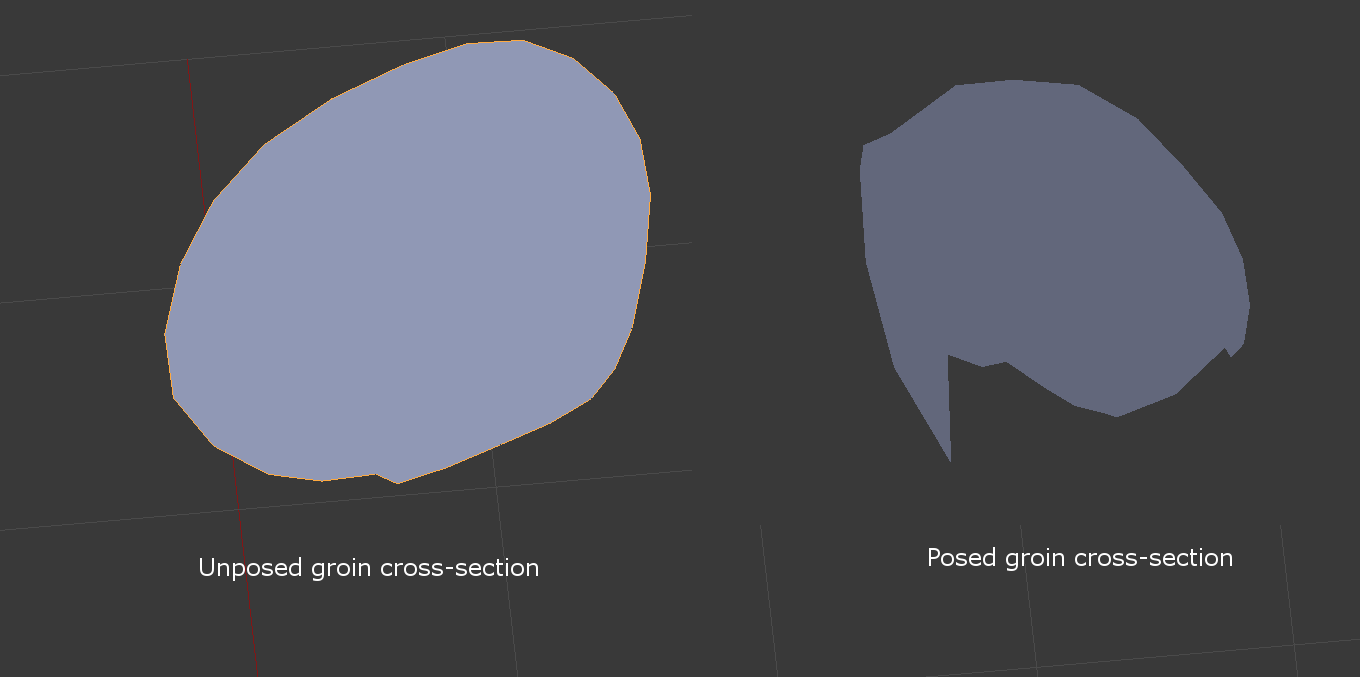

This is the cross-section of the groin-to-hip region from a very high-quality model, by a modeller I have supreme respect for, one who has been modelling for decades. It’s not newbie shit. But compare the cross-section of the groin in the default pose with the cross-section of the groin with the leg rotated 90 degrees outward around Blender’s Y axis. Legs don’t do that. Cartoon legs don’t even do that. In real life, the cross-section stays mostly the same. But modellers have mostly thrown up their hands at this problem.

I read all these people who tell me that rigging is easy, and that it’s just tedious to do the fingers. Then where is the model that solved this problem? It’s not unsolvable. Then those same people get defensive. They tell me that people can’t do that anyway, which is both untrue (just google “splits”) and irrelevant, because we’re not making real people. Most of the time, we want models that can do MORE than people can. We’re playing with the fantastic.

Like I said, this isn’t an unsolvable problem. But it’s not solvable as long as you’re stuck thinking that bones are bones, when they’re not.

We all know this problem. People try to solve it with weight painting, which doesn’t work very well. They try to solve it with topology, which seems to work well as long as you limit yourself to 1k-vert models. They try to solve it with shapekeys, and I don’t know how well that works, because I haven’t tried it. It’s solvable with rigging. I’m not doing it perfectly yet, though.

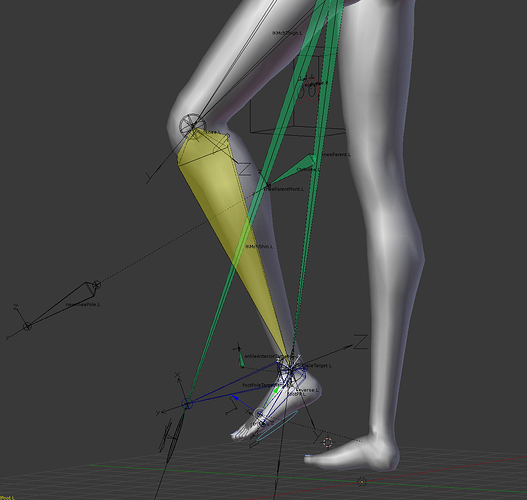

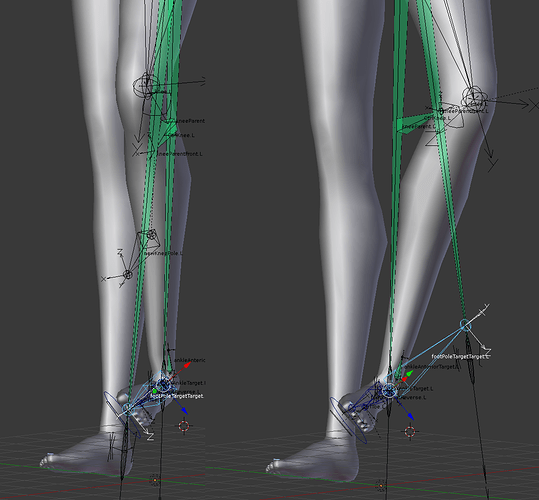

What is the distance between the center your elbow rotates around and the inside of your elbow? It is not a fixed number. It depends on the angle. If it doesn’t depend on the angle in your rig, you get the cross-section that squishes, and where did all your flesh go? Did it all squish up into your bicep?
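One common way the squish shows up can be computed directly. With a single elbow pivot and standard linear blend skinning, a vertex at the inside of the elbow, weighted half to the upper arm and half to the forearm, gets the average of its two rigidly transformed positions. That average slides toward the pivot, so its distance from the pivot falls to r·cos(θ/2) at bend angle θ. This is a 2D sketch with a hypothetical vertex placement, not a model of any particular rig.

```python
import math


def rotate(v, angle):
    """Rotate a 2D vector around the origin (the elbow pivot)."""
    c, s = math.cos(angle), math.sin(angle)
    return (c * v[0] - s * v[1], s * v[0] + c * v[1])


def blend_skin(v, bend, w_forearm):
    """Linear blend skinning about an elbow pivot at the origin (2D).

    The upper arm stays put; the forearm rotates by `bend`. The two rigidly
    transformed positions are mixed linearly by weight.
    """
    upper = v
    fore = rotate(v, bend)
    w = w_forearm
    return ((1 - w) * upper[0] + w * fore[0], (1 - w) * upper[1] + w * fore[1])


inside = (0.0, 1.0)  # inside-of-elbow vertex, 1 unit from the pivot
for degrees in (0, 45, 90, 135):
    p = blend_skin(inside, math.radians(degrees), 0.5)
    print(degrees, round(math.hypot(*p), 3))
```

The printed distance follows cos(bend/2): the flesh at the inside of the joint collapses toward the pivot instead of bunching up.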

So why not make an inside-of-the-elbow bone, and rotate it about a different center, so that as it rotates, it also moves? Place the bone for a good response from automatic weights rather than for some imagined anatomical reason, and you don’t even have to do any weight painting.

Except then you have to animate it. Or you don’t, if you store information in the tail of its non-deforming parent. How should the parent point? Figure that out, and then place the tail at that point in the default pose. Its tail is meaningless except as storage for the extra data that can be used to automate the animation.
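Here is a minimal 2D sketch of how a stored rest tail can drive a parent automatically, assuming the parent should aim at some target point that moves with the pose (what that target is stays up to the rigger; the coordinates here are made up). The rest tail records which way the parent points in the default pose; the rotation to apply is the angle from that stored direction to the current target. In Blender, the Damped Track constraint does something comparable: it rotates a bone so that its head-to-tail axis points at a target. `aim_angle` itself is hypothetical.

```python
import math


def aim_angle(head, rest_tail, target):
    """Angle (radians) that turns the stored rest direction head->rest_tail toward head->target.

    The rest tail is used purely as data: it records which way the parent
    points in the default pose.
    """
    rest = math.atan2(rest_tail[1] - head[1], rest_tail[0] - head[0])
    want = math.atan2(target[1] - head[1], target[0] - head[0])
    # Wrap into [-pi, pi) so the parent turns the short way round.
    return (want - rest + math.pi) % (2 * math.pi) - math.pi


head = (0.0, 0.0)
rest_tail = (1.0, 0.0)      # stored in the default pose
target_now = (0.0, 2.0)     # wherever the driving point is in the current pose
print(math.degrees(aim_angle(head, rest_tail, target_now)))
```

Feed that angle to the non-deforming parent, and the child deformer, rotating about the parent’s offset center, both turns and travels without a single keyframe of its own.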
