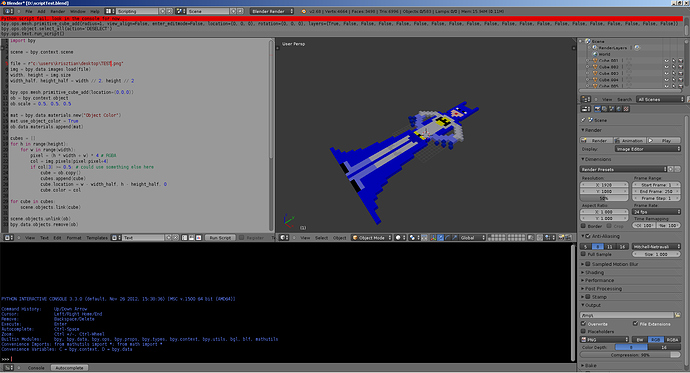

OK, I have another thread going asking about a plugin to import a 2D image pixel by pixel as cubes. While I wait to see if anything is available, I thought I might try a little coding.

Can someone help me get a basic script going? Where should I start? (I don’t need a Python lesson, just an organized structure to write the script.)

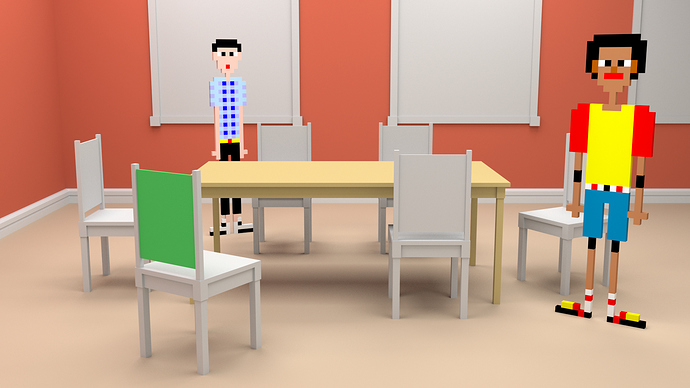

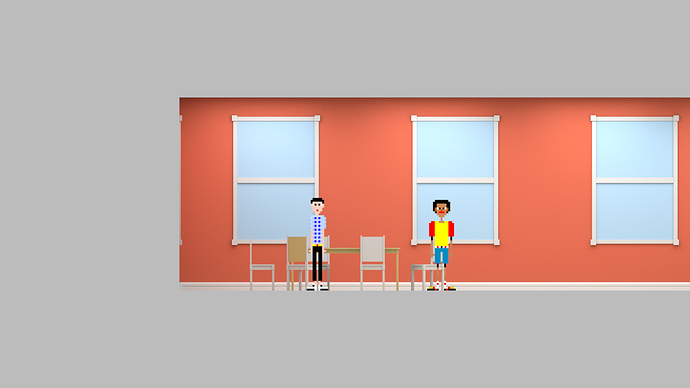

So I have a 32 x 32 PNG. I want to read each pixel and make a cube for every pixel that is not transparent. I also want each cube to have the same diffuse color as its pixel.

How can I get this up and running? Any help is greatly appreciated. Last night I built one image by hand, and it took me 2 hours to recreate what took 2 minutes to draw as pixels.
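
One possible structure for the script, as a minimal sketch rather than a finished tool: keep the pixel logic separate from whatever actually creates the cubes. The sketch assumes the image has already been loaded into a flat list of RGBA floats in the 0 to 1 range, stored row by row (some loaders store rows bottom to top, so the result may need flipping). The names `pixels_to_cubes`, `build_scene`, and `make_cube` are hypothetical; `make_cube` stands in for the application-specific calls that add a cube and assign its diffuse color.

```python
from typing import Callable, List, Sequence, Tuple

Color = Tuple[float, float, float]
Cube = Tuple[int, int, Color]  # (x, y, diffuse color)


def pixels_to_cubes(
    rgba: Sequence[float],
    width: int,
    height: int,
    alpha_cutoff: float = 0.0,
) -> List[Cube]:
    """Return one (x, y, color) entry per pixel whose alpha exceeds alpha_cutoff.

    Assumes `rgba` is a flat sequence [r, g, b, a, r, g, b, a, ...],
    stored row by row, with 4 values per pixel.
    """
    if len(rgba) != width * height * 4:
        raise ValueError("rgba length does not match width * height * 4")

    cubes: List[Cube] = []
    for y in range(height):
        for x in range(width):
            i = (y * width + x) * 4  # index of this pixel's red channel
            r, g, b, a = rgba[i:i + 4]
            if a > alpha_cutoff:  # skip fully transparent pixels
                cubes.append((x, y, (r, g, b)))
    return cubes


def build_scene(cubes: List[Cube], make_cube: Callable[[int, int, Color], None]) -> int:
    """Call the (hypothetical) make_cube hook once per cube; return the count."""
    for x, y, color in cubes:
        make_cube(x, y, color)
    return len(cubes)
```

With this split, the first function can be checked on a tiny hand-written image before any 3D calls are involved, and only `make_cube` needs to know about the host application. For a 32 x 32 image that is at most 1024 cubes, so a plain nested loop should be fast enough.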