What is this?

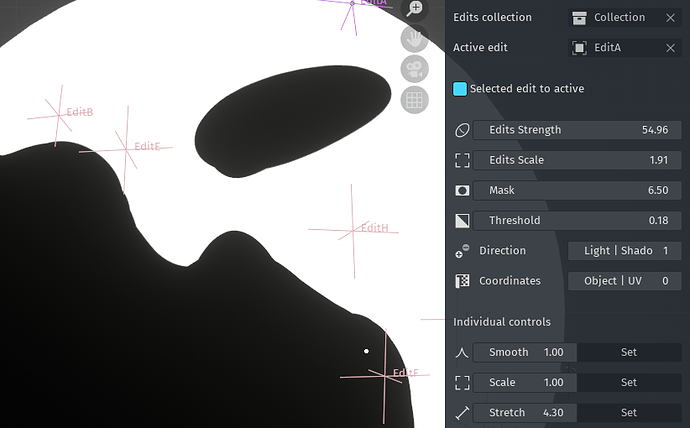

An add-on and artistic system for Blender that aims to revolutionize the cel-shading and hand-drawn animation workflow. It’s partially based on a paper, which you can read about below. I used the paper as a conceptual reference; however, my implementation is entirely my own and separate from the authors’ methodology.

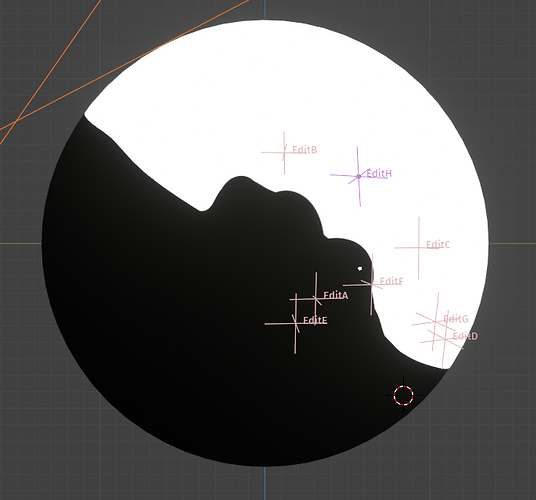

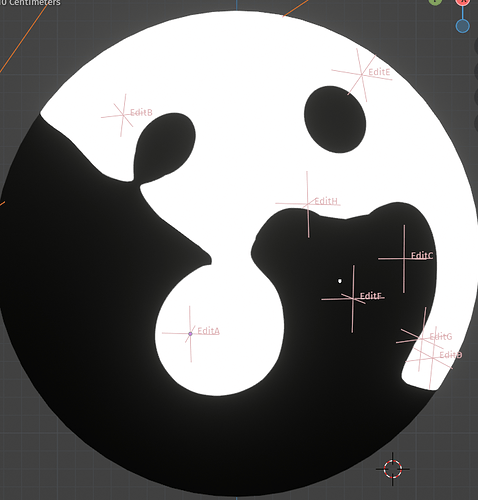

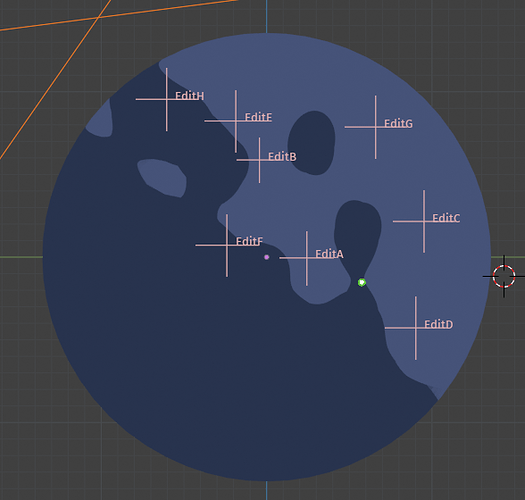

Currently, the add-on implements the “artistically directed edits” functionality from the paper. The 1.0.0 public release will add considerably more functionality, including camera shape edits inspired by Austin Hardwicke, dynamic shadow boundary variation based on my own past work, and more.

Introduction / Abstracts

At Real-Time Live! SIGGRAPH 2021, Lohit Petikam, Ken Anjyo, and Taehyun Rhee introduced a new shading system for “stylized shading for 3D characters”, specifically for cel-shading.

You can find details about their work here:

And their paper is here:

Abstracts

An excerpt from the abstract is as follows:

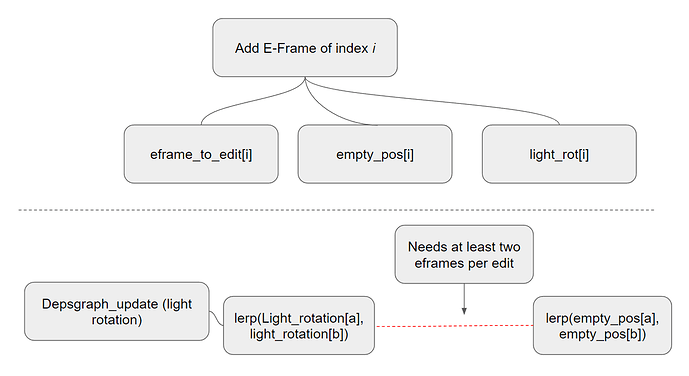

In our framework, artists build a “shading rig,” a collection of these edits, that allows artists to animate toon shading. Artists pre-animate the shading rig under changing lighting, to dynamically preserve artistic intent in a live application, without manual intervention. We show our method preserves continuous motion and shape interpolation, with fewer keyframes than previous work.

A more comprehensive excerpt can be found on the above website, and is as follows:

Toon shading often gives poor results on 3D characters. Rotoscoped shadows used in film aren’t real-time for games. Current toon shaded games use painted/textured/baked shadows that don’t change with lighting.

Shading Rig is a new workflow to animate artist-defined shading with lighting changes, and preserve art-direction in real-time toon shaded games.

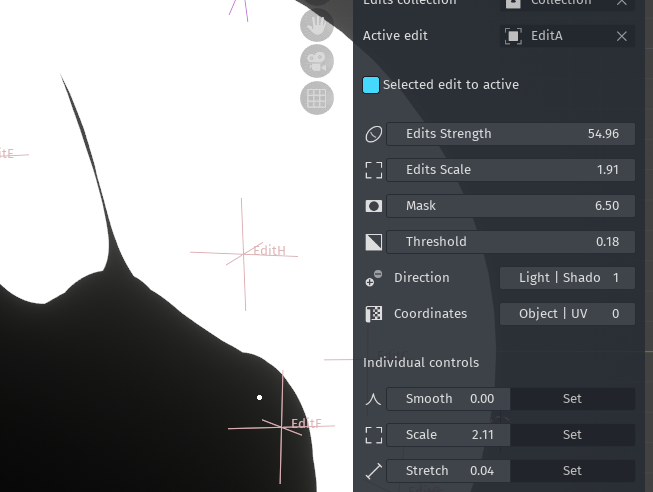

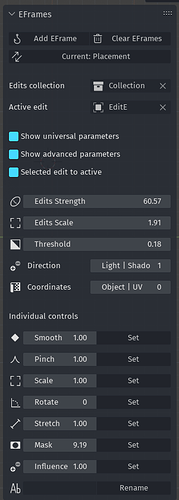

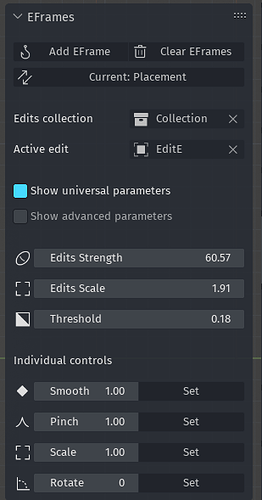

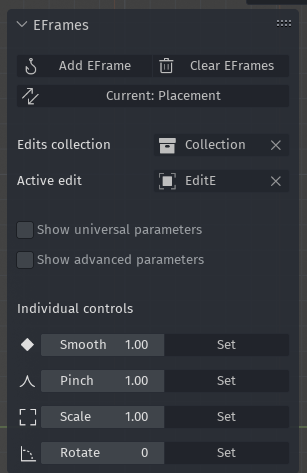

We achieve this with a “rig” of shadow editing primitives designed based on fundamental artistic shading principles. These primitives can be animated to achieve highly stylised shading under dynamic lighting.

Advantages

Summary

This system is leaps and bounds ahead of anything else in the cel-shading 3D world. Current normal-editing techniques can approximate the results of this work, but cannot duplicate them. I’ve been following the cel-shading 3D character art community with great interest for years. I’ve exhaustively researched every method I could find on the Internet, whether current, defunct, or prospective, and I believe this approach comes closer to a hand-drawn look than any other.

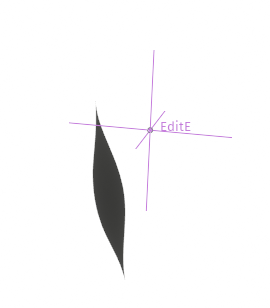

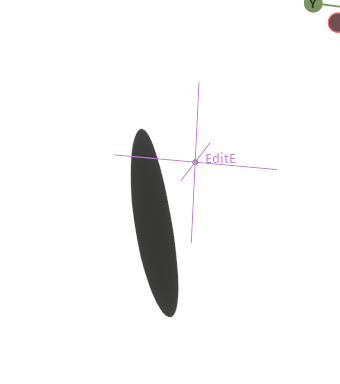

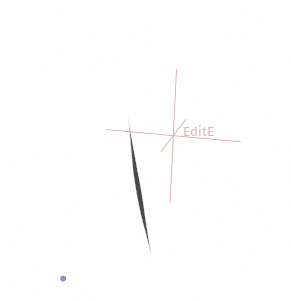

Vectorization

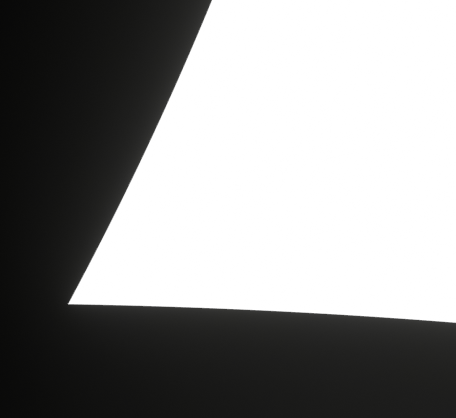

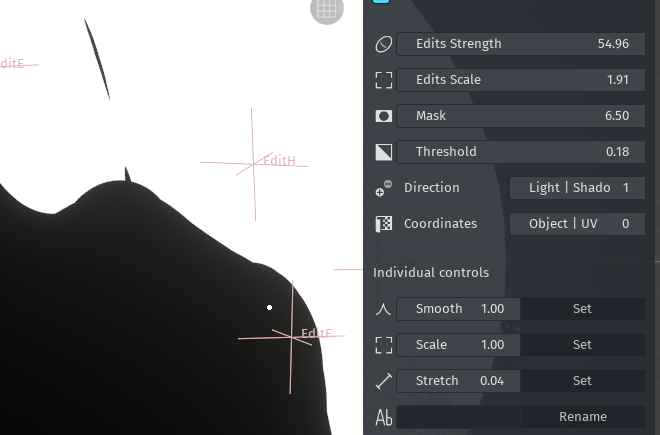

One huge advantage of this system is that it’s fully vectorized by default, which gives extremely sharp lines at any resolution.
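To illustrate why a procedurally evaluated boundary stays sharp, here is a minimal sketch. It is not the add-on's actual code. It assumes the common cel-shading model in which a point is lit when the dot product of the surface normal and the light direction exceeds a threshold; the names `toon_shade`, `sample_sphere_row`, and `threshold` are hypothetical. Because the test is evaluated per sample rather than read from a texture, there are no texels to blur, however densely the surface is sampled.

```python
import math


def normalize(v):
    """Return v scaled to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0.0:
        raise ValueError("cannot normalize a zero-length vector")
    return tuple(c / length for c in v)


def toon_shade(normal, light_dir, threshold=0.0):
    """Two-tone shading: 1.0 (lit) or 0.0 (shadow), nothing in between.

    `light_dir` points from the surface toward the light.
    """
    n = normalize(normal)
    l = normalize(light_dir)
    n_dot_l = sum(a * b for a, b in zip(n, l))
    return 1.0 if n_dot_l > threshold else 0.0


def sample_sphere_row(samples, light_dir):
    """Shade `samples` points spread over a half circle of a unit sphere."""
    row = []
    for i in range(samples):
        angle = math.pi * i / (samples - 1)  # 0..pi across the half circle
        normal = (math.cos(angle), 0.0, math.sin(angle))
        row.append(toon_shade(normal, light_dir))
    return row


if __name__ == "__main__":
    light = (1.0, 0.0, 1.0)
    # The same boundary evaluated at a coarse and a fine sampling density.
    print(sample_sphere_row(8, light))
    print(sum(sample_sphere_row(800, light)), "of 800 samples lit")
```

At either sampling density every value is exactly 0.0 or 1.0, so the shadow edge never picks up intermediate tones the way a magnified texture would.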

While it is currently possible to get vectorized shadow boundaries, it's quite difficult with most implementations. Options like ILM mapping, while effective, require intensely precise UV mapping for vectorized lines. This method requires no UV mapping whatsoever.

Austin Hardwicke’s facial blending technique

One huge improvement comes from using shape keys to create facial variations relative to the camera angle. Austin Hardwicke has recently brought this technique to the forefront of the discussion with his work on the main character in the animated film Belle. You can see an example of his work here: https://twitter.com/chompotron/status/1481553948721180677
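As a rough sketch of the idea (not Hardwicke's actual setup, and not this add-on's code), a shape key's weight can be driven by the angle between the character's facing direction and the direction to the camera. The function names, the `max_angle_deg` falloff, and the linear ramp are all assumptions chosen for illustration.

```python
import math


def view_angle_deg(face_forward, head_pos, camera_pos):
    """Angle in degrees between the face's forward axis and the direction to the camera."""
    to_camera = tuple(c - h for c, h in zip(camera_pos, head_pos))
    dot = sum(f * t for f, t in zip(face_forward, to_camera))
    len_forward = math.sqrt(sum(f * f for f in face_forward))
    len_to_camera = math.sqrt(sum(t * t for t in to_camera))
    if len_forward == 0.0 or len_to_camera == 0.0:
        raise ValueError("forward axis and camera offset must be non-zero")
    # Clamp to guard against floating-point drift just outside [-1, 1].
    cos_angle = max(-1.0, min(1.0, dot / (len_forward * len_to_camera)))
    return math.degrees(math.acos(cos_angle))


def shape_key_weight(angle_deg, max_angle_deg=90.0):
    """Linear ramp: 0.0 when the camera is head-on, 1.0 at max_angle_deg or beyond."""
    if max_angle_deg <= 0.0:
        raise ValueError("max_angle_deg must be positive")
    return max(0.0, min(1.0, angle_deg / max_angle_deg))


if __name__ == "__main__":
    forward = (0.0, -1.0, 0.0)  # assumed: the face looks down the -Y axis
    head = (0.0, 0.0, 0.0)
    for camera in [(0.0, -5.0, 0.0), (5.0, -5.0, 0.0), (5.0, 0.0, 0.0)]:
        angle = view_angle_deg(forward, head, camera)
        print(camera, round(angle, 1), round(shape_key_weight(angle), 2))
```

In Blender, a value like this could be written to a shape key's `value` property (for example through a driver), so that a corrective "profile" shape fades in as the camera moves to the side.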

Where do I come in?

There is currently no publicly available implementation of Petikam et al.'s work. While their paper details their methodology and the underlying mathematics, no implementation exists for any 3D software. As a Blender user, I’m most interested in this as it relates to Blender.

I reached out to Petikam a few months ago to ask about his plans for releasing a Blender implementation. He said he might do it someday, but gave no definite indication. A follow-up email received no response. After a few months, I believe the project is effectively dead, leaving it to community members to interpret the research and implement it themselves. I’ve decided to take on this challenge myself.

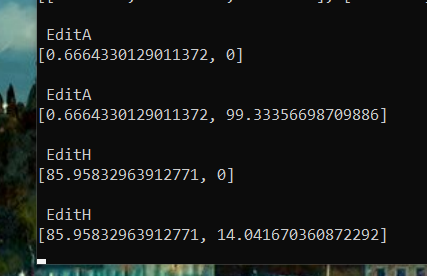

Technical Details

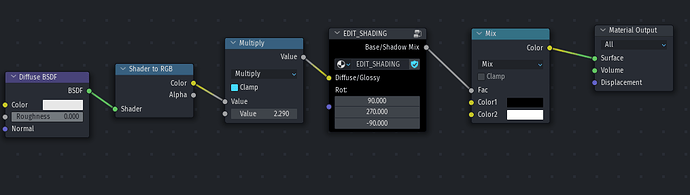

When my implementation is finished, it will most likely be bundled into a discrete script. Much of what needs to be done has to be scripted anyway, and the parts that don’t (the shader nodes) can also be built with a script.
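As an example of building shader nodes from a script, the following is a minimal sketch of a generic two-tone toon material, not the node graph this add-on will ship. It assumes Blender's bundled Python (`bpy`) and the EEVEE renderer, since the Shader to RGB node is EEVEE-only. The names `TOON_NODES`, `TOON_LINKS`, `build_toon_material`, and the `"ToonSketch"` material name are hypothetical.

```python
try:
    import bpy  # only available inside Blender's bundled Python
except ImportError:
    bpy = None

# Node types (by bl_idname) used in this illustrative graph.
TOON_NODES = {
    "diffuse": "ShaderNodeBsdfDiffuse",
    "to_rgb": "ShaderNodeShaderToRGB",
    "ramp": "ShaderNodeValToRGB",  # the ColorRamp node
    "emission": "ShaderNodeEmission",
    "output": "ShaderNodeOutputMaterial",
}

# (source node, output socket, destination node, input socket)
TOON_LINKS = [
    ("diffuse", "BSDF", "to_rgb", "Shader"),
    ("to_rgb", "Color", "ramp", "Fac"),
    ("ramp", "Color", "emission", "Color"),
    ("emission", "Emission", "output", "Surface"),
]


def build_toon_material(name="ToonSketch"):
    """Create a material whose shading is snapped to two flat tones."""
    if bpy is None:
        raise RuntimeError("build_toon_material must be run inside Blender")
    material = bpy.data.materials.new(name)
    material.use_nodes = True
    tree = material.node_tree
    tree.nodes.clear()  # start from an empty graph

    nodes = {key: tree.nodes.new(node_type) for key, node_type in TOON_NODES.items()}

    # A constant ramp turns the smooth diffuse falloff into a hard step.
    ramp = nodes["ramp"].color_ramp
    ramp.interpolation = 'CONSTANT'
    ramp.elements[1].position = 0.5  # assumed shadow threshold

    for src, out_socket, dst, in_socket in TOON_LINKS:
        tree.links.new(nodes[src].outputs[out_socket], nodes[dst].inputs[in_socket])
    return material
```

Run from Blender's scripting workspace, `build_toon_material()` returns a material that can then be assigned to an object's material slot.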