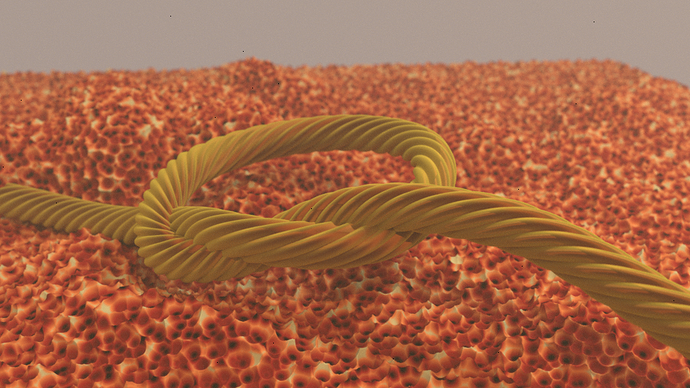

Another small bit of messing around. Unfortunately it doesn’t quite work right on paths. (At least I couldn’t get it to behave: either there was barely any displacement or it just wouldn’t render.) However, converting a path object to a mesh still makes it usable to some extent (the rope in that render). Other than that, just some other messing with it in the background… Some of the other stuff on the previous pages makes me think this will be rather neat for making a coral reef scene. That’s a bit beyond the scope of what I can figure out for a quick test, though.

This is also possible without microdisplacement, but man, this is really saving me some hardware.

I love it!

Could anyone else try to change the camera to panoramic when they render? It seems like microdisplacement is broken with a panoramic-type camera…

edit:

Or, not entirely broken, but the panoramic camera seems to throw off how much it subdivides and where… I’m not sure here, but something is definitely off, at the very least…

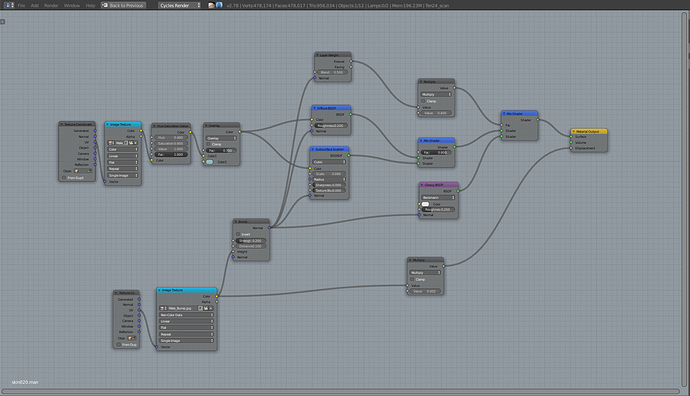

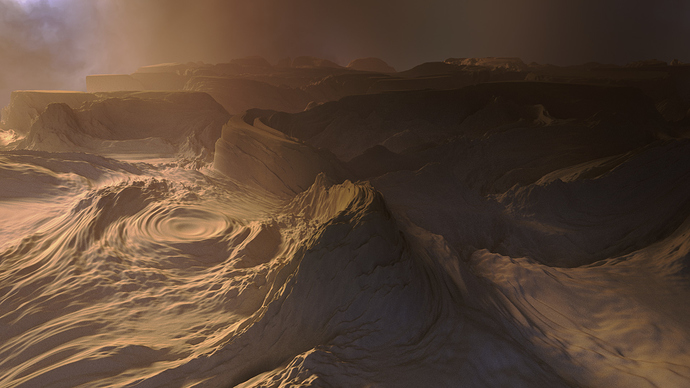

I had a lot of fun playing with the new microdisplacement + bump features with this scan by http://www.3dscanstore.com

and the simple shading setup:

I used Affinity photo for post adjustments.


I was about to ask the same thing. Currently I have a large displaced plane as a floor and Blender is eating memory like crazy.

If I add a wireframe shader to look at the tessellation of the plane I can see that of course even the plane behind the camera is densely tessellated as well.

Other renderers I know (Redshift, Modo, V-Ray, …) let you multiply the out-of-frustum dicing rate by a factor of your choice (e.g. 4), which helps a lot and still gives you plausible reflections and shadows.

I hope there are plans to implement this in Blender as well.
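To make the multiplier idea concrete, here is a minimal sketch in plain Python. It is not how any of those renderers (or Cycles) implement it; the function name, the `in_frustum` flag and the default factor of 4 are assumptions for illustration, and the dicing rate is taken to mean the micropolygon size in pixels.

```python
def effective_dicing_rate(base_rate_px, in_frustum, offscreen_factor=4.0):
    """Return the dicing rate (micropolygon size in pixels) to use for a patch.

    Patches inside the camera frustum keep the base rate; patches outside
    get a coarser rate, scaled by a user-chosen factor (hypothetical name).
    """
    if offscreen_factor < 1.0:
        raise ValueError("offscreen_factor should be >= 1 so off-screen geometry is never finer")
    return base_rate_px if in_frustum else base_rate_px * offscreen_factor


# A patch in view keeps the base rate; one behind the camera is diced 4x coarser.
on_screen = effective_dicing_rate(1.0, in_frustum=True)
behind_camera = effective_dicing_rate(1.0, in_frustum=False)
print(on_screen, behind_camera)
```

The off-screen geometry is still there for reflections and shadows, just with much larger micropolygons.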

P.S.: I’d also welcome being able to choose the preview dicing rate manually. Sometimes the hardcoded default of a factor of 8 is just too much.

The 1:8 ratio is set in the Render > Geometry panel. You can change it any time you like.

You could also use a mask modifier to hide meshes/vertex groups you don’t want to tessellate

or

use the camera-based occlusion culling method described on page 18 of this thread.

Oh, wow! How could I not see that in the first place! Thanks!!!

Thanks! I will try this soon!

I’m sure there’s a good rationale for that if you ever decide to have reflections in your scene.

But in other cases, I see your point, and I think the best way to deal with this is for Mai to add a couple of new features and/or tweaks to the tessellation process. Options might be…

- The falloff in tessellation density becomes tighter for parts of a mesh behind the camera and outside of its frustum

- The subsurf modifier gives the user the ability to control the tessellation density with a vertex group (with OpenSubdiv activated)

Anyway, there’s a reason why it’s still in the experimental feature set, so it’s likely that more work will be done on it (and the two ideas above may become moot anyway if the adaptive cache feature gets in).

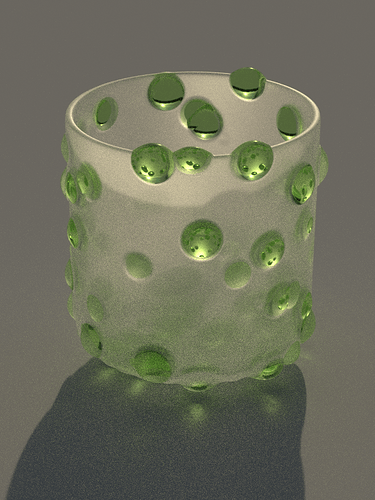

Using a Voronoi texture and colour ramps to drive the displacement and mix between smooth and rough glass.

WOW

I WANT this as a wallpaper. Can you share your material setup?

Hi!

Is there any plan for when the adaptive subdivision feature will be supported with the Cycles panoramic camera?

Thanks!

It is strange that the Cycles viewport renders adaptive displacement really fast. I’m not using border rendering, and I tried a fullscreen preview: no problems, and really fast. (Preview and render settings are the same, and the preview looks as expected!) When I hit F12, it takes forever, even when I use the CPU. On the GPU, Blender reports the usual “Out of memory”.

Does anybody have an idea why?

I mean, it is really fast and looks good in the fullscreen preview, so why doesn’t it render? Maybe because it is still experimental… that’s sad.

Thanks !

@Piet:

There are two different dicing rates for preview and render. Are you sure they are the same?

You can change them under “Render->Geometry->Subdivision Rate”.
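As a back-of-the-envelope illustration of why the two rates matter so much (a rough sketch, not how Cycles actually counts geometry): if the dicing rate is the micropolygon size in pixels, a surface filling a given screen area needs roughly area / rate² micropolygons. The function name and the 1920×1080 frame are assumptions for the example; the rates 8 and 1 are the preview/render defaults mentioned earlier in the thread.

```python
def estimated_micropolygons(covered_pixels, dicing_rate_px):
    """Rough number of micropolygons needed to cover `covered_pixels`
    of screen area when each micropolygon is about `dicing_rate_px` wide."""
    if dicing_rate_px <= 0:
        raise ValueError("dicing rate must be positive")
    return covered_pixels / (dicing_rate_px ** 2)


frame_pixels = 1920 * 1080  # assumed: displaced surface fills a full HD frame

preview_count = estimated_micropolygons(frame_pixels, 8.0)  # viewport rate
render_count = estimated_micropolygons(frame_pixels, 1.0)   # F12 rate
ratio = render_count / preview_count

print(f"preview: ~{preview_count:,.0f} micropolygons")
print(f"render:  ~{render_count:,.0f} micropolygons ({ratio:.0f}x more)")
```

So a scene that is comfortable in the viewport at a rate of 8 can need on the order of 64 times more geometry at a rate of 1, which would fit with a fast preview and an “Out of memory” final render.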

It seems no one has mentioned it, but it is important to note that particle instances, dupliverts and dupli groups don’t get tessellated separately. Instead, the original object gets tessellated and the result is duplicated on all instances, which defeats the purpose of adaptive subdivision.

You can’t have both the benefits of instancing and adaptive subdivision at the same time. You can still “make duplis real” to turn a particle system into a mesh and adaptively subdivide that.