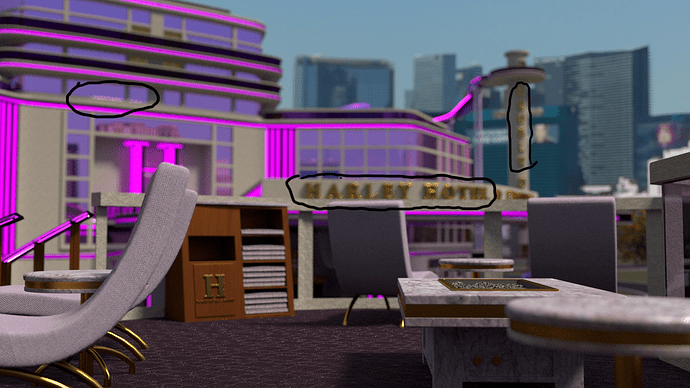

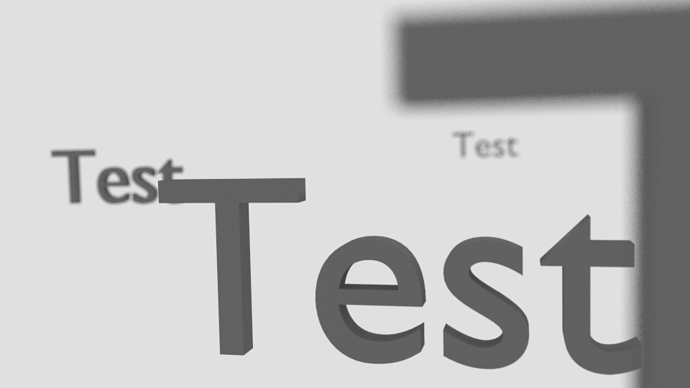

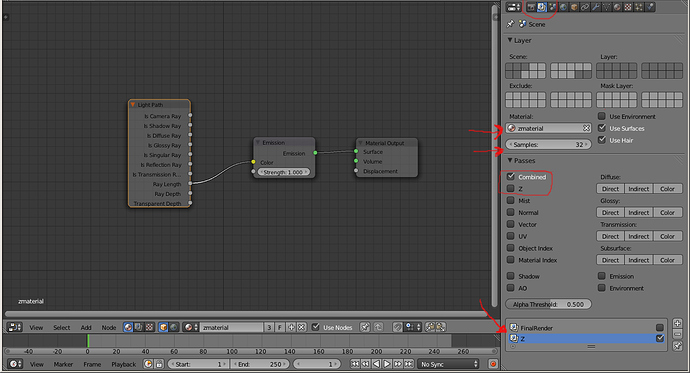

I’ve been trying to do some renders with depth of field (DOF) in Blender 2.71 official. I set up an empty where I want the focus to be and then adjust the radius. It’s difficult because these are huge scenes, and with the render times involved I can only guess what the result is really going to look like. But every time I have made a render, I get strange artifacts appearing in various places. Most times I can do some spot healing in Photoshop and get rid of them.

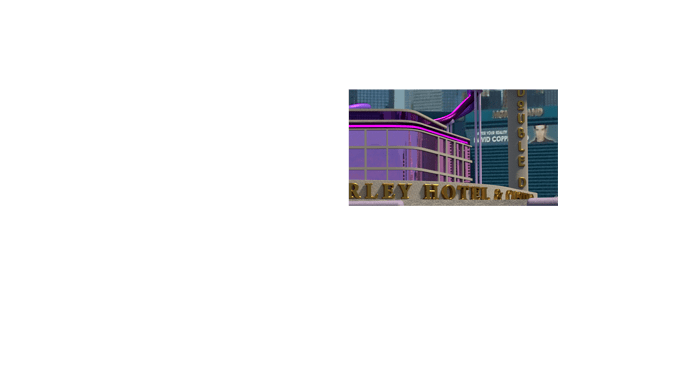

Please click on the image to see it at full resolution. This is a bad example because most people are going to look at it and say the gold isn’t converging enough, but I have had these artifacts in many places where there is no gold. Also, when using no DOF, I don’t get the strangeness that is occurring with the gold in this image.

A good example in this image is what is circled in the upper left…

Here are my questions…

- Has anyone else experienced DOF artifact problems with Blender 2.71 official?

- I really don’t have any idea when, or if, I should be changing the Blades and Rotation settings. Should I?

Any thoughts will be appreciated. I’m going to do a different render and I’ll post that if there are any artifacts.