Indeed. The only problem is that the more I learn, the more I realize how little of a clue I have about color grading and how complex a topic it seems to be.

It doesn’t help that googling for information usually leads to vast swamps of tutorials telling you that pushing gain and lift toward orange and teal is the secret of color grading, with little to no further information.

And I’m too stupid to understand Troy’s technical explanations.

At the end of the day, the only thing that matters is: how does it look? Are you and your client happy?

It’s far too easy to get drawn into these types of academic discussions that can feel overwhelming and convoluted, but we shouldn’t forget the end goal: how does it look?

ACES was introduced barely a decade ago, and it didn’t really start being heavily adopted in post CG/VFX pipelines until very recently. Does that mean that movies and TV shows done before then looked like crap? I don’t think so.

Being able to convert RED and ARRI footage into a post-friendly color/gamma space isn’t something new, as I’m sure many of the people involved in this conversation can remember. ACES does provide a common “lingua franca” that aims to make the pipeline more versatile and less prone to user error, but it’s not the only way to get to a final product.

I don’t really know much about the science behind Filmic, aside from using it within Blender. Robert keeps bringing up the shortcomings of Blender within the larger context of studio work, but I would remind him that most studios still look at Blender with more than a healthy dose of skepticism and distrust.

Hmm…

Getting this sort of stuff done makes the client happy.

But making the client happy is only a means to an end, a way to make money.

And making money is just a means to an end, so that I have enough time which I can throw at understanding overwhelmingly convoluted scientific discussions.

I think it’s safe to say that, aside from Robert, most of us working in Blender aren’t interfacing with large studio pipelines. Our workflow is typically more customized to our own specific needs.

My main concern is to be able to get as much information as possible out of my renders (this goes for Blender, Houdini, etc.). Switching to EXR from PNG or (gasp) MP4 is the single best decision any CG artist can make to get the most out of their renders.
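A minimal sketch of why EXR keeps more information than an 8-bit PNG, assuming the PNG is written the usual way (clamp to 0..1, apply the sRGB transfer function, quantize to 0..255) and the EXR stores the float value unchanged. The helper names are hypothetical; real file writers (and half-float EXRs) add their own details.

```python
def srgb_encode(linear: float) -> float:
    """sRGB transfer function (piecewise curve) for input in 0..1."""
    if linear <= 0.0031308:
        return 12.92 * linear
    return 1.055 * linear ** (1 / 2.4) - 0.055


def to_png_8bit(linear: float) -> int:
    """Model of one channel written to an 8-bit PNG: clamp, encode, quantize."""
    clamped = min(max(linear, 0.0), 1.0)
    return round(srgb_encode(clamped) * 255)


def to_exr_float(linear: float) -> float:
    """Model of one channel written to a float EXR: the value is kept as is."""
    return linear


# Hypothetical scene-linear values: mid-grey, a bright surface, two highlights.
for value in [0.18, 0.9, 2.5, 16.0]:
    print(f"{value:>5}: PNG = {to_png_8bit(value):3d}, EXR = {to_exr_float(value)}")
```

Both highlights collapse to 255 in the PNG, so no grade or comp can tell them apart afterwards, while the EXR still distinguishes 2.5 from 16.0.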

And that’s why I try to promote Blender as a viable tool for serious VFX work on my YouTube channel. When I was working at Method (yeah, name dropping), I asked to get Blender installed on my machine. The answer? “What’s Blender?” Turns out the Vancouver office was already using it, so I was able to get it. Blender is way better than Maya in many ways. Sure, we are far from Houdini for VFX. Still, a lot can be done with Blender, but if what comes out of Blender’s renders gives you a headache in comp, it’s pointless. Blender has adopted several industry standards like EXR, VDBs, Alembic and USD, which shows that it wants to fit in. If Blender wants to play with the big boys, ACES is the next step.


There’s a reason why all these movies by (some) major studios were done in ACES.

Absolutely. I got away with so much shit color-wise in the past, I’m almost ashamed. My recent interest in color management is because my next film will likely get a couple of cinema and TV screenings in addition to the classic YouTube release (nothing Hollywood, it’ll stay very much local), so I’m aiming to make it look good everywhere. I don’t really have enough funds to pay for comp and grading, so I decided to do it myself. I used to be confined to the realm of rigging and animation, and oh man, there’s so much stuff on this side of the job I have no clue about.

I don’t want to be disagreeable here, but Troy’s posts don’t help much for anyone who doesn’t already understand the issue. It’s great to have someone around with such an acute understanding of the field, but I really wish his posts were a little more accessible. Troy, if you’re reading this: thanks for the outstanding contribution to the conversation in any case.

I can get behind this. God creates dinosaurs, dinosaurs create filmic…

I will counter your splashy link with another splashy link! Here’s one for Fusion with a bunch of equally impressive movie posters that make it look like everyone in Hollywood uses it (they don’t):

https://www.blackmagicdesign.com/products/fusion/

I get your point, and fundamentally I don’t disagree with it. I just wanted to add a different perspective, since there does seem to be quite a lot of misunderstanding about how pervasive ACES is in the studio pipeline, from editing (not much) to CG/VFX (very much) to color grading (not as much as the CG folks seem to think) to final release.

Regarding your enthusiasm for Blender, I love your tutorials (I like your humor, and I get the feeling that if you lived in LA or I lived in Vancouver we would probably hang out in person and trade cookie recipes), but I don’t necessarily share the drive that I see displayed in these forums to get Blender widely accepted in the studio system.

Personally, I kinda like the fact that Blender is geared as a freelancer/amateur tool (note: I’m using the word “amateur” for its actual meaning and not as a put-down), and I am somewhat happy that it’s choosing to play by its own rules (unfortunately including some annoying ones, like Z-up).

As you may or may not know by now, I’m mostly a Houdini user who is slowly moving to Blender (for some stuff). In the SideFX world, I am constantly reminded that as a freelancer my voice isn’t heard nearly as loudly as if I were a TD at a large VFX outfit. I find many of their development decisions somewhat less than exciting for my own personal needs (for instance, I would prefer a more powerful viewport experience like EEVEE, and instead I get a confusing and still clunky implementation of USD).

With Blender, instead, it’s the complete opposite. For instance, they’re working on full support for Apple Metal, which for me is much more enticing than SideFX’s new semi-GPU, Nvidia-exclusive Karma render engine. They’re also beefing up EEVEE with ray tracing, which IMHO could be a game-changer for many.

The point is that I do agree on the importance of the ACES implementation in Blender (it already has that). But I would also remind the many Blender users who have been doing just fine without it that it might not be 100% necessary for their own workflows.

There is a lot of hype around ACES at the moment, and I think it’s more important than ever to have a well rounded conversation that tries to separate the hype from actual real-world needs.

I get it… I’ve been in the same boat, which is how I got reasonably good at several things in the post process.

The best advice I can give you is to subscribe (for at least a few months) to this and watch as many tutorials as you can…trust me it is worth every penny:

Hello Blender Bob,

Nice to meet you. I am sorry to land in this thread like this, but I can assure you 100% that these movies were not done in ACES. I cannot confirm it for every single one of them, of course, but hopefully you get the idea of what I am trying to say.

I used to think so, and I had actually linked this list on my website, but this list is completely misleading. In the best-case scenario, it lists the movies that have been delivered/archived with ACES. But that’s all: they do not use the ACES Output Transform, for instance.

This has been confirmed several times during last year’s ACES Output Transforms Virtual Working Group (the VWG is not finished yet), as well as in some Technical Advisory Council (TAC) meetings.

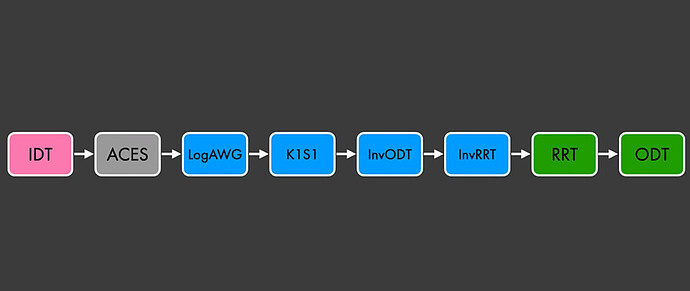

Here is the actual workflow used on most of these movies:

You have an IDT that brings your footage into the ACES/ACEScg working space; it is then converted to ARRI Wide Gamut and finally displayed with K1S1. The last four blocks on the left (InvODT-InvRRT-RRT-ODT) are what we call a no-operation: they cancel each other out.
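Why an InvODT-InvRRT-RRT-ODT chain is a no-op can be shown with any invertible pair of functions. A minimal sketch with toy, hypothetical stand-ins for the RRT and ODT (the real ACES transforms are far more involved): running a value through both inverses and then both forward transforms returns it unchanged, up to floating-point error.

```python
import math


# Toy, hypothetical stand-ins for the ACES blocks (NOT the real RRT/ODT maths).
def rrt(x: float) -> float:
    """Toy tone curve: maps scene values 0..inf into 0..1."""
    return x / (1.0 + x)


def inv_rrt(y: float) -> float:
    """Exact inverse of the toy tone curve, valid for 0 <= y < 1."""
    return y / (1.0 - y)


def odt(y: float) -> float:
    """Toy display encoding: a pure 1/2.2 power."""
    return y ** (1.0 / 2.2)


def inv_odt(v: float) -> float:
    """Exact inverse of the toy display encoding."""
    return v ** 2.2


def no_op_chain(v: float) -> float:
    """InvODT -> InvRRT -> RRT -> ODT, applied in that order."""
    return odt(rrt(inv_rrt(inv_odt(v))))


# Inputs stay below 1.0 because the toy inv_rrt divides by (1 - y).
for v in [0.0, 0.1, 0.5, 0.9]:
    print(v, no_op_chain(v))  # each output matches its input up to rounding error
```

Whatever sits between the IDT and K1S1 in such a chain contributes nothing to the final image; the look comes entirely from the ARRI display rendering.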

I know that through my website I have unfortunately contributed to this ACES trend, but I have made my mea culpa here: https://chrisbrejon.com/articles/ocio-display-transforms-and-misconceptions/

I had the chance to talk with a few colorists/color scientists working in Hollywood. Their advice is generally the same: do not use the ACES Output Transforms.

Hope you enjoy the read,

Chris

False.

Sorry, they are using it exactly as @Midphase suggested: as an interchange.

They were not “Using ACES”.

Believe me when I say I’ve failed you if you don’t understand something.

Sometimes when tackling the deluge of misinformation, you’ll find folks repeat falsehoods, and the explanation wanders away from the general audience I’d prefer to speak directly to; folks like you.

I’ve also tried to lay out enough threads of groundwork so that these sorts of confusions can at least be refuted by knowledgeable folks, be it experienced solo image makers, or others.

Appreciate that I’m not airdropping in here randomly, with zero effort sunk into trying to help folks. Also appreciate that there are reams of documents and forum posts on this subject. If you are here in Vancouver, I’m also happy to go for a coffee.

I wish that would happen, and that it would become a podcast episode.

Michael,

Thanks for that link!

Ooof… this is getting insane.

Hey, guys, if you are happy with filmic, then enjoy filmic.

I’ll say it one last time. This is our workflow.

We get footage in whatever format the camera records. Let’s say RED Log. We convert it to ACES. Our renders are also in ACES, with the full color range, not just from 0 to 1. We comp in Nuke, set to, you guessed it, ACES. When we deliver, we output whatever the client wants. If he wants ACES, he gets ACES. If he wants Rec. 2020, he gets Rec. 2020. If he wants to continue in ACES, we deliver ACES. At the end of everything, at final delivery, after grading, it’s never in ACES (but apparently it’s also used for archival).
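A minimal sketch of why the conversion step keeps “the full color range”: moving linear footage or renders between RGB gamuts is a 3×3 matrix multiply, with no clamp to 0..1. It assumes the data is already scene-linear (camera log curves such as RED’s need their own IDT step first). The coefficients are the commonly published linear Rec.709/sRGB (D65) to ACEScg (AP1) matrix with Bradford adaptation; treat them as an assumption to verify against your own OCIO config. The pixel values are hypothetical.

```python
# Commonly published linear Rec.709/sRGB (D65) -> ACEScg (AP1) matrix,
# Bradford-adapted. Verify against your own OCIO config before relying on it.
REC709_TO_ACESCG = (
    (0.6130974, 0.3395231, 0.0473795),
    (0.0701937, 0.9163539, 0.0134524),
    (0.0206156, 0.1095698, 0.8698147),
)


def convert(rgb, matrix=REC709_TO_ACESCG):
    """Apply a 3x3 matrix to one linear RGB triplet. Nothing is clamped."""
    return tuple(sum(m * c for m, c in zip(row, rgb)) for row in matrix)


# A hypothetical over-range highlight from a render: about 12x diffuse white.
highlight = (12.0, 11.0, 9.0)
print(convert(highlight))        # still well above 1.0 in ACEScg
print(convert((1.0, 1.0, 1.0)))  # neutral white stays (approximately) neutral
```

Because each row sums to about 1, neutrals stay neutral, and nothing in the math limits values to 0..1; clipping only happens if a display transform is baked in.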

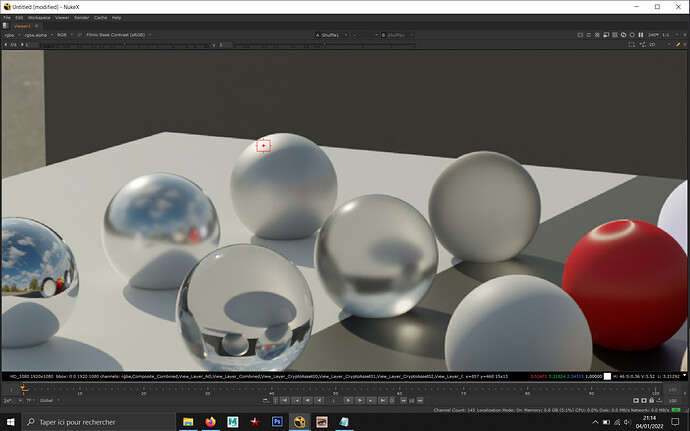

My point was that we can’t use an EXR (forget PNGs! We need multilayer EXRs) with the filmic look in Nuke. If we use the OCIO transforms, we get the filmic look but lose the full color range: everything is set from 0 to 1. If you have a way, please tell me!

If you guys want to experiment, my test files are here. You will find an EXR and a PNG for reference, and everything you need to set up Nuke with filmic. You also get a Natron file (filmic is native in Natron) and a Resolve project, also set up for filmic.

So if Blender integrates ACES properly instead of the hack we are using, that will be awesome for me.

@Midphase Hey! Nice of you to join in. Resolve supports ACES out of the box. I can’t make it work properly in Fusion but I don’t know Fusion.

That being said, @troy_s Troy, I’m in Montreal but if I was in Vancouver I would be more than happy to grab a bite with you and talk about it. I don’t drink coffee. Or any of you guys actually. But after Covid…

BTW, I’m friendlier in person.

@BlenderBob Hey Robert!

I created an account just to reply to this interesting thread about color pipelines.

It’s pretty easy to get a match between your scene-referred EXR and your display-referred PNG images.

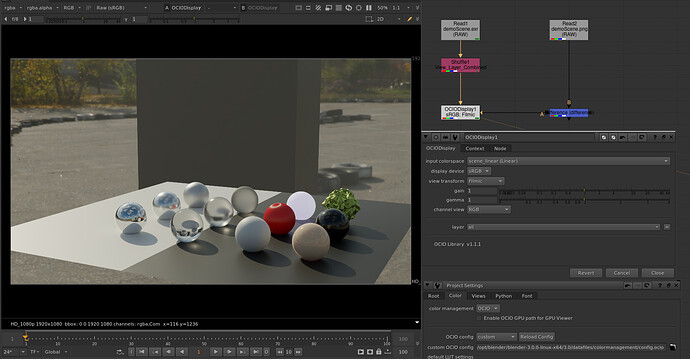

Here’s a screenshot in Nuke and in Natron

EDIT: apparently I can only post one media item and cannot use attachments because I’m a new user… so you all only get a screenshot in Nuke, sorry!

I can’t upload the scripts because I’m a new user, but it’s pretty simple.

In Nuke:

- Load both images with no color transforms applied (Raw checkbox)

- Create an OCIODisplay node

- Set your View Transform to Filmic and your display device to sRGB

- Compare with your PNG

Natron is buggy as heck but I got it to work with the same method.

I’m using the Filmic OCIO config provided with Blender 3.0 here.

If you consider the Filmic sRGB view transform as a client show lut, this would be a pretty similar workflow to what we use in feature film visual effects.
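A minimal sketch of why this keeps the full range, assuming the display transform is the last step (an OCIODisplay node at the end of the tree, or the viewer itself): the pixel data upstream stays scene-linear and can read above 1, while only the displayed result is squeezed into 0..1. `toy_view` and its stop range are hypothetical stand-ins; Filmic’s actual log encoding and contrast looks come from the OCIO config.

```python
import math


def toy_view(linear: float, lo_stops: float = -10.0, hi_stops: float = 6.5) -> float:
    """Hypothetical Filmic-like view, NOT Filmic's real maths: log2 exposure
    relative to 0.18 mid-grey, mapped from [lo_stops, hi_stops] into 0..1."""
    stops = math.log2(max(linear, 1e-10) / 0.18)
    return min(max((stops - lo_stops) / (hi_stops - lo_stops), 0.0), 1.0)


# Hypothetical values as read from an EXR loaded with "Raw" (no input transform).
scene_linear = [0.0, 0.18, 1.0, 8.0]

# The view transform is applied last, only to what goes to the monitor.
displayed = [toy_view(v) for v in scene_linear]

print(scene_linear)                      # sampling the data still shows the 8.0 highlight
print([round(d, 3) for d in displayed])  # the displayed copy sits inside 0..1
```

An RGB reading taken upstream of the display step still reports values above 1, which is what the sphere-highlight check in this thread is testing.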

Would you have any interest in connecting directly with Blackmagic? I personally think that they need feedback from someone like you, and I’d be happy to make an introduction with their development team if you’d like (and have the time).

Would you have any interest in connecting directly with Blackmagic?

I’d love to, but before I talk to them and look like an idiot (it’s happened before), I would need to learn more about Fusion. For the Resolve part, it’s very easy. For the Fusion part, for some reason, it doesn’t take the Resolve settings into account.

Hi Jed! Can you take an RGB reading on the left sphere’s highlight? Any reading over 1?

Well…just let me know, I can connect you directly with the engineering team. Also, I sent you a DM on Discord about Ian Hubert’s latest effort which is tangentially related to this conversation (kinda sorta).

Hello,

I just did the test and yes, I have values above 1. And a perfect match with your png.

Jed has given you his solution. Here is mine, which is different. Basically, you need to edit the OCIO Config.

```yaml
displays:
  sRGB:
    - !<View> {name: sRGB OETF, colorspace: sRGB OETF}
    - !<View> {name: Non-Colour Data, colorspace: Non-Colour Data}
    - !<View> {name: Linear Raw, colorspace: Linear}
    - !<View> {name: Filmic Log Encoding Base, colorspace: Filmic Log Encoding}
    - !<View> {name: Filmic Very High Contrast, colorspace: Filmic Log Encoding, look: Very High Contrast}
    - !<View> {name: Filmic High Contrast, colorspace: Filmic Log Encoding, look: High Contrast}
    - !<View> {name: Filmic Medium High Contrast, colorspace: Filmic Log Encoding, look: Medium High Contrast}
    - !<View> {name: Filmic Very Low Contrast, colorspace: Filmic Log Encoding, look: Very Low Contrast}
    - !<View> {name: Filmic Medium Low Contrast, colorspace: Filmic Log Encoding, look: Medium Low Contrast}
    - !<View> {name: Filmic Low Contrast, colorspace: Filmic Log Encoding, look: Low Contrast}
    - !<View> {name: Filmic Base Contrast, colorspace: Filmic Log Encoding, look: Base Contrast}

active_displays: [sRGB]
active_views: [Filmic Base Contrast, Non-Colour Data]
```

And you will get a perfect match. When you load the default Filmic OCIO config in Nuke, I agree it is a bit unsettling, which is why it is very common to edit OCIO configs to your needs. Here is the screenshot with the values above 1.
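When hand-editing a config like this, a quick sanity check can help. A minimal sketch, assuming the config text is available as a string: a hypothetical helper (not part of OCIO) that pulls the `!<View>` entries out with a regular expression and lists any `active_views` name that no view defines. It only understands the one-line `{name: ..., colorspace: ..., look: ...}` form used in this config; OCIO’s own `ociocheck` tool is the more complete check.

```python
import re

# Abridged copy of the edited display section, as plain text.
CONFIG_SNIPPET = """
displays:
  sRGB:
    - !<View> {name: sRGB OETF, colorspace: sRGB OETF}
    - !<View> {name: Non-Colour Data, colorspace: Non-Colour Data}
    - !<View> {name: Filmic Log Encoding Base, colorspace: Filmic Log Encoding}
    - !<View> {name: Filmic Base Contrast, colorspace: Filmic Log Encoding, look: Base Contrast}
active_displays: [sRGB]
active_views: [Filmic Base Contrast, Non-Colour Data]
"""

# Matches the inline view mapping; the look part is optional.
VIEW_RE = re.compile(r"\{name: ([^,]+), colorspace: ([^,}]+)(?:, look: ([^}]+))?\}")
ACTIVE_RE = re.compile(r"^active_views: \[(.*)\]$", re.MULTILINE)


def parse_views(text):
    """Return {view name: (colorspace, look or None)} for every !<View> entry."""
    return {name: (cs, look or None) for name, cs, look in VIEW_RE.findall(text)}


def missing_active_views(text):
    """List active_views entries that do not match any defined view name."""
    views = parse_views(text)
    match = ACTIVE_RE.search(text)
    active = [v.strip() for v in match.group(1).split(",")] if match else []
    return [v for v in active if v not in views]


print(parse_views(CONFIG_SNIPPET)["Filmic Base Contrast"])  # ('Filmic Log Encoding', 'Base Contrast')
print(missing_active_views(CONFIG_SNIPPET))  # []
```

The parsed result also makes the structure of the config visible: every Filmic view shares the same Filmic Log Encoding colorspace and differs only by its look.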

I hope it helps a bit,

Chris