We already have a rudimentary distance-based point distribution algorithm (Poisson disk) which can minimize intersection to some extent. We will have more control once the field based merge by distance node is implemented.
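For anyone unsure what a distance-based distribution does, here is a minimal sketch in plain Python. It is not Blender's implementation; it uses naive dart throwing, and the function name and parameters are made up for illustration. A candidate point is kept only if it is at least `min_distance` away from every point accepted so far.

```python
import math
import random

def poisson_disk_dart_throwing(count, min_distance, seed=0, max_attempts=10_000):
    """Place up to `count` points in the unit square, rejecting any candidate
    that is closer than `min_distance` to an already accepted point."""
    rng = random.Random(seed)
    points = []
    attempts = 0
    while len(points) < count and attempts < max_attempts:
        attempts += 1
        candidate = (rng.random(), rng.random())
        # Keep the candidate only if it respects the minimum spacing.
        if all(math.dist(candidate, p) >= min_distance for p in points):
            points.append(candidate)
    return points

points = poisson_disk_dart_throwing(count=50, min_distance=0.08)
```

The spacing only limits how close point centres can get, so instances larger than the spacing can still intersect, which is why it helps "to some extent".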

Level sets (OpenVDB volume nodes) test

The volume nodes are not in master yet; they are only available in builds of the “geometry-nodes-level-set-nodes” branch.

I swear these people act like they pay a monthly fee to use Blender.

When will this node be in master/beta/alpha?

From what I heard not before 3.2.

To quote from the 3.1 targets topic:

Level sets:

- Design still needs to be worked out, mainly T91668: How to deal with multiple volume grids in a geometry

- Generally procedural modelling with meshes has higher priority.

Hello everyone. Any idea how I can solve this?

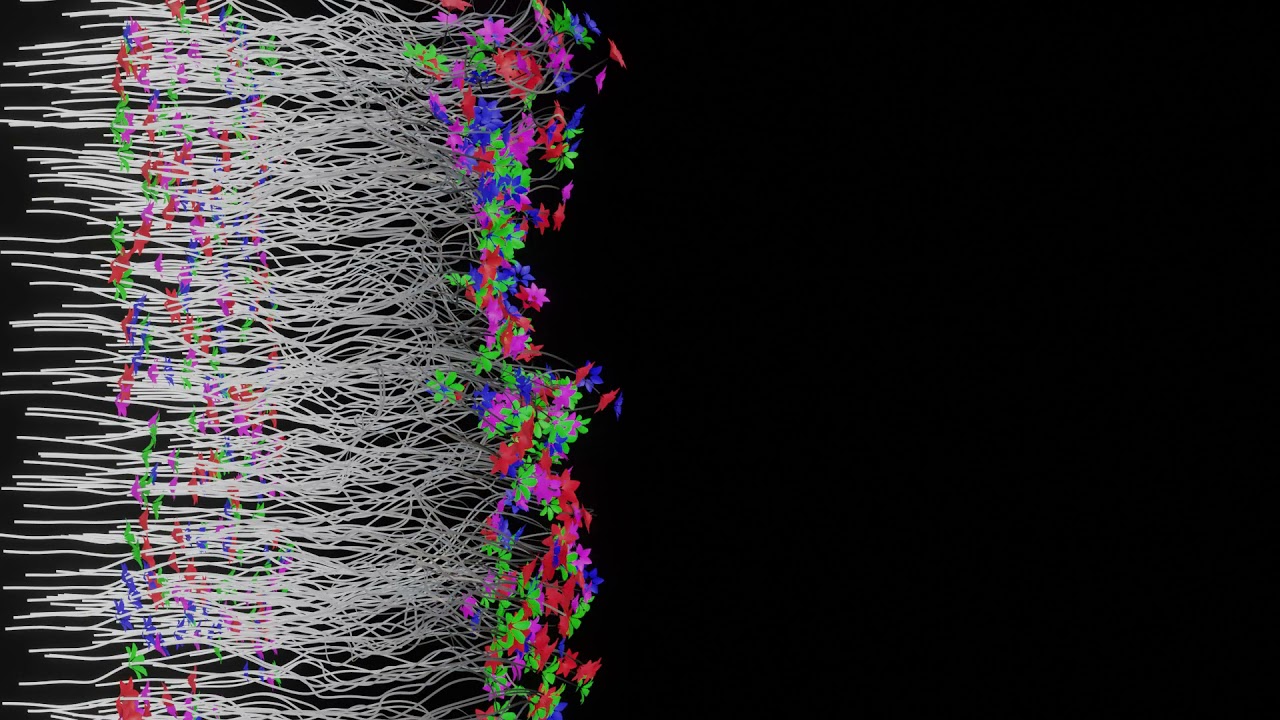

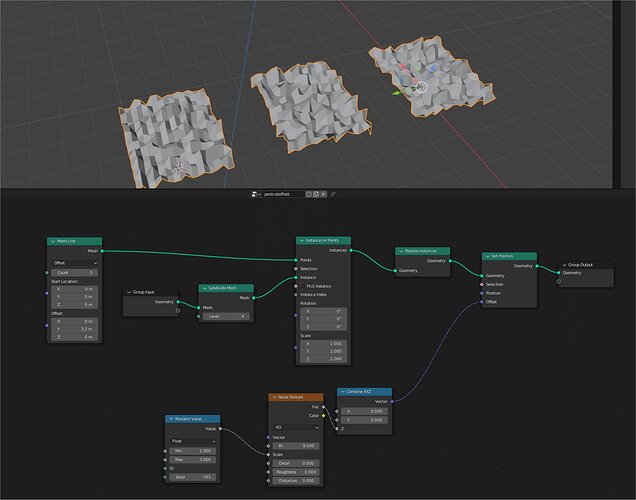

So I have this simple setup, as seen in the video. Points are distributed over a surface and geometry is instanced on the points. Then I’m using a Translate Instances node to move the instanced geometry around. I’d also like to offset the vertex positions using a texture, but to do that I first have to realise the instances. However, with this setup the translation makes the geometry “swim” through the texture in space. I want the texture displacement to be fixed, or local to the geometry. To fix this I subtracted the translation amount from the position before passing it in as the vector of the texture. That works, but only for one particular direction (upwards in the video).

What I’d like is for the position offset to remain fixed regardless of the direction in which I translate the instances.

Hi Astronet,

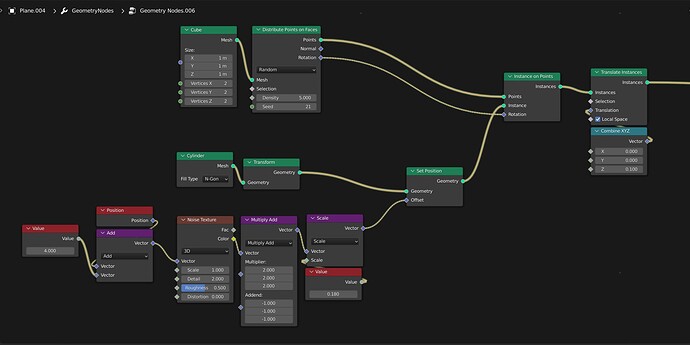

For the displacement of your instances to stay “still” regardless of the direction in which you move them, you’d need to place the “Noise Texture” right after the “Cylinder” node, before you plug it into the “Instance on Points” node. That way, you can freely move your instances around without the translation affecting their displacement.

Here’s the set-up.

EDIT: I realized that there is a drawback with this approach: if you place the “Noise Texture” node right after the “Cylinder” node, the displacement will be the same on all instances. So, in case you want different variants of the displacement on your instances, the set-up below should do the trick.

Thanks for the help.

The only problem with this is that every instanced cylinder will have the exact same deformation as the first, since the deformation is applied before instancing. What I’m trying to get is the exact same effect you have now, but with each instance having its own unique deformation. Does that make sense?

Edit: Oh wait, I just saw your edit. I’m checking it out now, thanks.

Hi @CubicSpaceMonkN, I finally had time to test your second implementation. It doesn’t seem to change the default behaviour I was trying to prevent in the first place. The cylinders still “swim” through space when the Translate Instances node is adjusted.

I noticed after transferring the normals, you multiplied the outcome by 0 before adding it to the texture. From what I understand that cancels out everything you did in the first place.

I’m of the opinion some maths needs to be done on the captured normals and position input and then that outcome is applied to the coordinate vector of the noise texture instead of adding the normals to the texture itself.

Anyway, I’m going to experiment with this idea and see if I can get it to work. Thanks again.

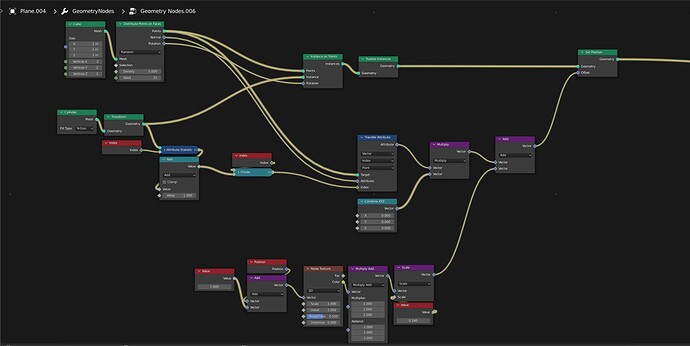

Probably the easiest solution would be to realize the instances before translating them, and transfer the position attribute.

No problem. You might want to take a look at @higgsas’s approach and see whether it works for you. I think his approach is a lot simpler than mine and probably suits your needs.

Oh, I should have added some explanation for this part, my bad. After the normals are transferred, they aren’t supposed to be multiplied by 0. They’re supposed to be multiplied by some value other than 0 (this value is the amount of offset/translation that you want to apply to your instances).

Thank you so much! This is much easier to understand.

Ah, it works now too!

I misunderstood your node and went ahead to add a translate instance node not realising the set position node is handling both the translation and displacement. So the translate node kinda undid everything.

Now that I understand your implementation I might stick with it instead of @higgsas’s, because I feel it’s more computationally expensive to use two Realize Instances nodes instead of one.

Finding the solution to this problem has been an interesting challenge haha! The solution I was working on would create a unique texture coordinate for each cylinder, and this still works with just one noise texture because textures are fields! I had no idea the fields concept had this far-reaching consequence. This is definitely an idea I will experiment with in the future.

Thanks again for the help

OK, at this point I’m asking this question just because I’m curious. I already have a working solution to the problem based on the previous replies.

However, in the attached video I have a slightly different setup where I am translating the instances only along their local Z axis, and it works. The transferred normals of the instances point in the same direction as their local Z axis. So I can multiply these normals by the magnitude of the Z-axis translation and subtract the resulting vector from the position input. This is then used as the coordinate vector of the texture node. The same Z-axis translation is applied to the Translate Instances node as well, and this creates the same “fixed” displacement as in the other method, but only along the local Z axis.
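To spell out the arithmetic of that Z-axis trick, here is a small sketch in plain Python (not a node tree and not Blender's API; the function names and sample values are made up). It assumes the normal is unit length and equal to the instance's local Z axis: subtracting `normal * amount` from the moved position gives back the position the vertex had before the translation, so the texture lookup never changes.

```python
def translate(position, axis, amount):
    """Move a position along a unit axis by the given amount."""
    return tuple(p + a * amount for p, a in zip(position, axis))

def texture_coordinate(world_position, local_z_axis, z_translation):
    """Undo the translation along local Z so the texture lookup sees the
    position the vertex had before it was moved."""
    return tuple(p - n * z_translation
                 for p, n in zip(world_position, local_z_axis))

normal = (0.0, 0.6, 0.8)   # unit-length normal, assumed equal to local Z
vertex = (1.0, 2.0, 3.0)   # vertex position before any translation

coords = []
for amount in (0.0, 0.5, 2.0):
    moved = translate(vertex, normal, amount)
    # The compensated coordinate stays at the original vertex position
    # (up to floating point rounding), whatever the translation amount.
    coords.append(texture_coordinate(moved, normal, amount))
```

The same subtraction would work for any other axis, provided a unit vector pointing along that axis is available for each instance.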

My question is: is it possible to do the same with the X and Y axes as well, instead of just the Z axis? The Z axis worked because the normals point in the same direction. Can I generate something like a tangent, or some vector that points along the local X and Y axes, maybe from the rotation output of the Distribute Points on Faces node?

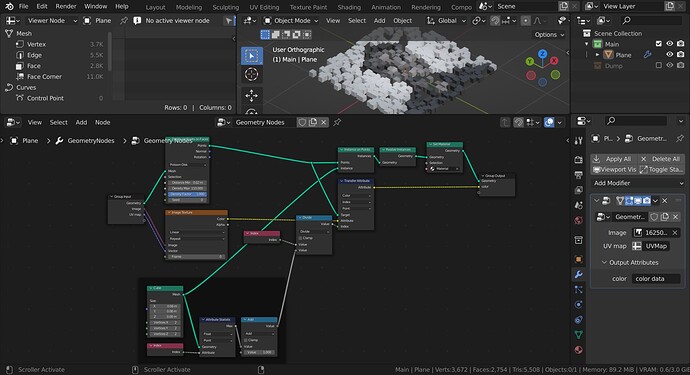

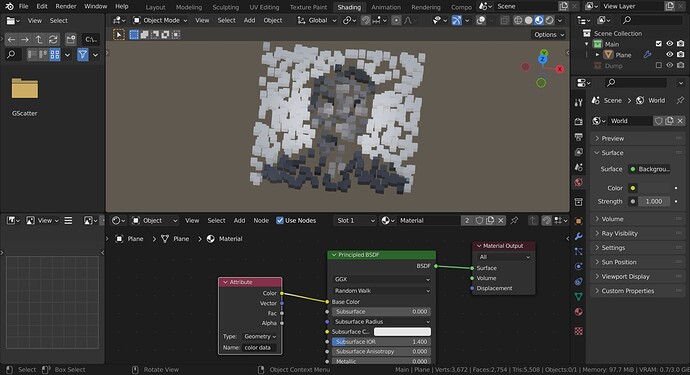

hey hey! Is it possible in geo nodes to use color info from a bitmap texture?

I would like to instance some cubes on a mesh and use a texture as the color information for those cubes, ideally using the UV coordinates of the original object.

Can I do this in geo nodes with the current feature set?

Thank you!

Yes, you can do that. Here’s a blend file for that.

transfer data.blend (989.4 KB)

I simply distributed points on a surface and instanced cubes on the points. To capture the texture data and transfer that to the cubes you just need to use a transfer attribute node before the instance on points and connect that to the output. In the material editor, you can use an attribute node to access the geo nodes output as long as you type in the correct name from the modifier.
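In case the idea is easier to follow as code, here is a rough sketch in plain Python of what the transfer amounts to (this is not Blender's API, and the tiny 2x2 image, the nearest-pixel lookup and all names are assumptions for illustration): each point looks up the texture color at its UV coordinate and stores it, and the cube instanced on that point uses the stored color.

```python
def sample_nearest(image, uv):
    """Return the pixel of a row-major image (list of rows, row 0 at v = 0)
    nearest to a UV coordinate, clamping UVs to the [0, 1] range."""
    height = len(image)
    width = len(image[0])
    u = min(max(uv[0], 0.0), 1.0)
    v = min(max(uv[1], 0.0), 1.0)
    x = min(int(u * width), width - 1)
    y = min(int(v * height), height - 1)
    return image[y][x]

RED, GREEN, BLUE, WHITE = (1, 0, 0), (0, 1, 0), (0, 0, 1), (1, 1, 1)
image = [
    [RED, GREEN],    # bottom row (v near 0)
    [BLUE, WHITE],   # top row (v near 1)
]

# Hypothetical UVs of the distributed points; one color is stored per point.
point_uvs = [(0.1, 0.1), (0.9, 0.1), (0.1, 0.9), (1.0, 1.0)]
point_colors = [sample_nearest(image, uv) for uv in point_uvs]
```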

I’m quite in a rush right now so I don’t think I explained the setup properly. However, if you need anything clarified just ask and I’ll try my best to explain.

hope this helps.

And… Another question

I already posted this in the dev talk forum but I noticed I get a lot more responses here… so yeah sorry if you’re seeing this question twice.

I’m trying to understand whether the shareability paradigm imposed on the fields workflow matches the concept I have in mind of what it means for a node group to be shareable.

So imagine I have two geo nodes modifiers on two different objects. Object A has a “generation” node group that generates some random geometry by simply changing a seed input. Object B has a “distribution” node group that distributes any object over its surface. Is it possible to distribute obj A over obj B such that obj B has access and writes to the seed parameter of obj A for every instance of obj A that is distributed? This way the instances are all uniquely generated based on obj A geo nodes modifier.

Is this possible currently, or am I missing something? I know I can easily do all this in one node group, but it would be pretty neat to abstract complex setups into node groups on different objects. Or heck, I don’t mind if this is doable with different geo nodes modifiers on the same object. I just don’t know how to go about it yet, so any help or advice is very much appreciated. Thanks

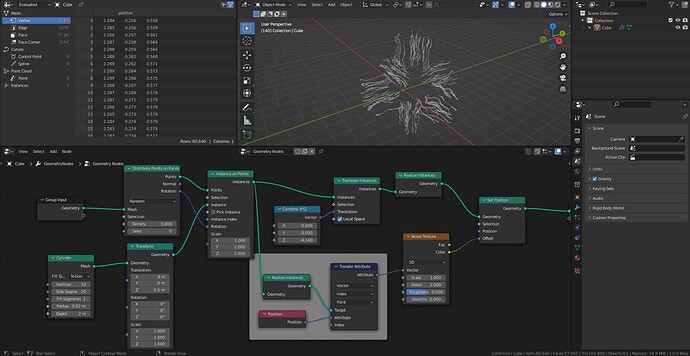

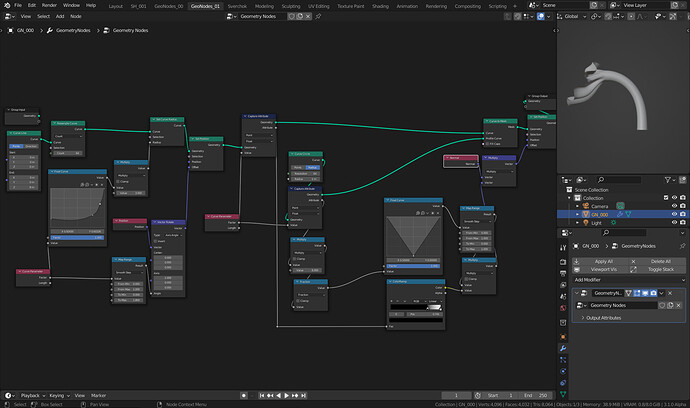

Hi, I have n instances of a plane with a texture displacement. For each instance I want a different, randomly generated scale value for the texture (between 1 and 3). Here the random generator obviously creates a new scale value for each vertex rather than for each instance (which looks ugly). Whatever I try (with or without “realize instances”, “set position” before or after the “instance on points”, “transfer attribute” to get a single ID per instance), I cannot get the desired result. Is it possible at all?

The key is to make sure each copy in the array has a unique ID after you realise the instances, and you can do that with the transfer attribute node. Then you can connect that ID to the random value node.

The maths on the index input of the transfer attribute node just maps the index of each point after realising the instances back to the index of the mesh line point it was instanced on, by dividing it by the total number of vertices of the instanced geometry.
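Written out as plain Python rather than nodes, the index maths looks roughly like this. It is only a sketch: the function names, the seed handling and the four-vertex plane are assumptions for illustration, and the random values will not match what Blender's Random Value node produces.

```python
import random

def instance_index(realized_vertex_index, vertices_per_instance):
    """Map a vertex index after realising back to the instance it belongs to."""
    return realized_vertex_index // vertices_per_instance

def scale_for_instance(index, seed=0, low=1.0, high=3.0):
    """Pick one repeatable random scale per instance ID."""
    rng = random.Random(seed * 1_000_003 + index)
    return rng.uniform(low, high)

vertices_per_instance = 4   # assumed: a plane with four vertices
instance_count = 3

ids = [instance_index(i, vertices_per_instance)
       for i in range(vertices_per_instance * instance_count)]
# ids == [0, 0, 0, 0, 1, 1, 1, 1, 2, 2, 2, 2]

# Every vertex of the same instance now gets the same texture scale.
scales = [scale_for_instance(i) for i in ids]
```

Feeding the raw vertex index into the random value instead of the divided one is what gives a different scale per vertex.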

texture disp rand scale.blend (818.5 KB)