I see. Totally missed that Point Instance node. Thanks for the explanation.

The Join Node

https://developer.blender.org/rB75a92d451ea24ff6f8174f26163b08088122ed3e

Acts just like the “Join Objects” operator in object mode, but it can now be done as a modifier node.

This node is so simple, yet so effective and important. I've wished and hoped for a simple join modifier all this time. Now, with Geometry Nodes, it's finally coming true.

As a Houdini user I’m looking forward to Geometry Nodes in Blender and the progress looks like it’s going in the right direction.

The only thing that kind of bothers me (as in "I haven't quite understood why yet") is why one has to wire those "Geometry" inputs and outputs through all the nodes. They're already dealing with geometry, so what purpose does this serve? I know it's not Houdini, but there I don't have to tell each SOP node which geometry it's working on, because, well… it's already assigned to a certain geometry.

I’m only guessing: you can make multiple paths with nodes, so you might want the geometry to “split” at a certain node and do 2 different chains of operations, then merge them later in the tree…
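To make that guess concrete, here is a toy model in plain Python (not Blender's API; `Node`, `evaluate`, `translate`, `scale` and `join` are all hypothetical names). Each node is a function, and the wires are explicit references to the upstream nodes. Because one Group Input feeds two separate chains that a join node later merges, the tree needs explicit geometry links to know which branch each node operates on.

```python
from typing import Callable, Optional

Point = tuple[float, float, float]
Geometry = tuple[Point, ...]


class Node:
    """Toy node: a function plus explicit links to the upstream nodes feeding it."""

    def __init__(self, name: str, func: Callable[..., Geometry], inputs: tuple = ()):
        self.name = name
        self.func = func
        self.inputs = list(inputs)


def evaluate(node: Node, cache: Optional[dict] = None) -> Geometry:
    """Evaluate a node after evaluating everything wired into it.

    The cache makes sure a node shared by two branches (here the Group Input)
    is computed only once.
    """
    if cache is None:
        cache = {}
    key = id(node)
    if key not in cache:
        upstream = [evaluate(n, cache) for n in node.inputs]
        cache[key] = node.func(*upstream)
    return cache[key]


def translate(geo: Geometry, dx: float) -> Geometry:
    return tuple((x + dx, y, z) for x, y, z in geo)


def scale(geo: Geometry, s: float) -> Geometry:
    return tuple((x * s, y * s, z * s) for x, y, z in geo)


def join(*geos: Geometry) -> Geometry:
    # Like a join node: concatenate the elements of every incoming geometry.
    return tuple(p for geo in geos for p in geo)


# One input, two independent chains, merged again at the end.
cube: Geometry = ((0.0, 0.0, 0.0), (1.0, 1.0, 1.0))
group_input = Node("Group Input", lambda: cube)
moved = Node("Translate", lambda g: translate(g, 5.0), (group_input,))
scaled = Node("Scale", lambda g: scale(g, 2.0), (group_input,))
joined = Node("Join", join, (moved, scaled))

result = evaluate(joined)
```

Rewiring a single link (say, feeding `scaled` from some other object's geometry instead of `group_input`) changes what that branch works on; a tree without explicit geometry links would have no way to express that choice.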

Nice guess and thanks for your reply… but that is also possible without telling each node what “Geometry” it is connected to, right? In the end, there’s geometry coming in and going out of that node network, and everything in between adds, changes or deletes this geometry.

Maybe I'm misunderstanding what you're saying, but in geometry nodes you don't always use the incoming geometry. Sometimes you even leave it disconnected and bring in another geometry via an Object Info node. As far as I know, that's the current way of instancing particles over a surface: it's done on a point cloud object, and you just ignore the point cloud geometry and use another one.

I don’t know, I didn’t try anything yet. That’s why I wrote I was guessing.

I think you'll be able to bring other objects' geometry into the node tree. And anyway, if you modify the main geometry you get new geometry, and you may want it to interact with the original one as if they were two different objects. E.g. a displaced and beveled cube gets a boolean subtract by the original cube itself, and is then beveled again.

OK, maybe I was a bit too fast there when I wrote “geometry coming in”. But even if you merge in external geometry, generate new geometry or instance stuff, in the end it doesn’t matter where it came from as it all comes out as geometry inside the object the nodes are on, right?

Yeah, but again this is all possible without the initial input node.

If "Geo" isn't otherwise defined, it's always the original geometry the node tree sits on.

My guess would be consistency: you can add inputs to the modifier, which then need a representation in the node editor. So a new input node would have to pop up at some point anyway. Better to have a generic input from the get-go, always there.

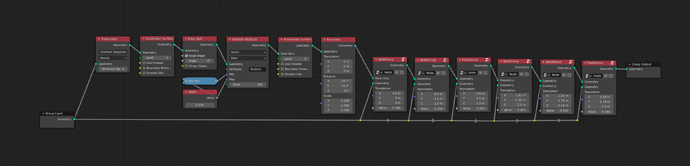

Maybe that's what differs from Houdini: how you get the node tree onto a geometry. In Blender it's per modifier; how is it in Houdini? A global node tree?


No, it's the same in Houdini and Blender. You get into the geometry context and then you fiddle with nodes. I don't understand the reasoning: of course you have to wire geometry inputs and outputs, that's how data flow is represented. If those connections weren't shown, how would you know which node flows into which?

I think SteffenD only meant the input node itself, not all the wiring.

So no modifiers in Houdini.

Oh, OK!

Well, there are HDAs (Houdini Digital Assets), which are akin both to Blender's group nodes and now to node modifiers (i.e. reusable building blocks). I can only encourage you to go and try it; the Apprentice version is free.

To bounce back on this, Blender has a base geometry not represented by nodes (the geometry which you can enter edit mode on), while Houdini doesn’t, and I think this is why you need an input node at all. You can either go full-generative and use only primitives made by nodes (well, not yet you can’t but… I imagine it’s in the works), OR use the base geometry as a starting point, which is what we’ve been doing so far.

On the flipside, I don’t think we should have an output node at all. Ideally I’d like to see a tagging system where we can specify the “final” node to be represented on screen, just like in Houdini. Changing the wiring just to see different effects or different parts of a node tree is gonna get old very quick, just like it is with Cycles.

If you have output nodes, you can have multiple outputs, which can be useful for intermediate graphs. Whereas if you just tag one as final, you can only have one.

There's nothing stopping you from adding multiple output nodes and just switching the active one, so you don't have to change the wiring each time. This works in Cycles and Eevee too, except that those unfortunately don't accept a color plugged into the Material Output as shorthand for an emission shader. That limits how helpful it is when building shaders, and that's why we all use Node Wrangler.
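The difference between tagging one node as final and keeping several wired output nodes with one marked active can be modelled in a few lines. This is a plain-Python toy, not Blender's API: `NodeTree`, `add_output`, `set_active` and `displayed` are hypothetical names, and the rule that the first output added becomes active is an assumption.

```python
from typing import Callable, Dict, Optional

Geometry = tuple[tuple[float, float, float], ...]


class NodeTree:
    """Toy tree with several output nodes; only the active one is displayed."""

    def __init__(self) -> None:
        self.outputs: Dict[str, Callable[[], Geometry]] = {}
        self.active: Optional[str] = None

    def add_output(self, name: str, upstream: Callable[[], Geometry]) -> None:
        self.outputs[name] = upstream
        if self.active is None:
            # Assumption: the first output added becomes the active one.
            self.active = name

    def set_active(self, name: str) -> None:
        # Switching the active output changes what is shown; no link is touched.
        if name not in self.outputs:
            raise KeyError(f"no output named {name!r}")
        self.active = name

    def displayed(self) -> Geometry:
        if self.active is None:
            return ()
        return self.outputs[self.active]()


base: Geometry = ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0))

tree = NodeTree()
tree.add_output("final", lambda: tuple((x * 2, y * 2, z * 2) for x, y, z in base))
tree.add_output("intermediate", lambda: base)
```

Calling `tree.set_active("intermediate")` shows the intermediate result while both outputs stay connected, which is the "multiple outputs" benefit without any rewiring.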

Well, it’s not like tagging couldn’t be made to work well, but I just find the way we have now more obvious and explicit.

Ah, that makes sense… a bit like AOVs! However, having to create yet another output node and connect it just to visualize the result of the node tree at that point seems like overkill. But you're right about "geometry AOVs", it's a pretty good idea. I can imagine pulling this data from another object's node tree, or using it as coordinates inside a shader…

Oh! So now I realize we were only ever talking about the first geometry input in the node tree.

So @rbx775 is right.

I wouldn't be against ignoring this and letting the "modified" object's geometry feed any unconnected geometry input by default. That's more or less what happens in shading, where Image Texture nodes take UV coordinates by default while procedural textures take "Generated", without you having to plug in a Texture Coordinate node.
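The proposed fallback boils down to a single resolution rule for unlinked sockets. This is a toy sketch, not how Blender actually evaluates sockets: `resolve_geometry_input` is a hypothetical helper, and treating an unlinked socket as empty geometry in the explicit-only mode is an assumption made for contrast.

```python
from typing import Optional

Geometry = tuple[tuple[float, float, float], ...]


def resolve_geometry_input(
    linked: Optional[Geometry],
    object_geometry: Geometry,
    implicit_default: bool,
) -> Geometry:
    """Decide what a geometry socket evaluates to.

    linked: geometry arriving over a link, or None if the socket is unlinked.
    object_geometry: geometry of the object carrying the modifier.
    implicit_default: if True, unlinked sockets fall back to the object geometry
        (the proposal, analogous to Image Texture defaulting to UV);
        if False, unlinked sockets are empty (assumed explicit-only rule).
    """
    if linked is not None:
        return linked
    return object_geometry if implicit_default else ()


obj: Geometry = ((0.0, 0.0, 0.0), (1.0, 1.0, 1.0))
other: Geometry = ((9.0, 9.0, 9.0),)
```

The trade-off is visible in the last branch: with `implicit_default=True`, briefly unplugging a link makes the node silently switch to the full object geometry instead of going empty.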

So how would you go about setting it to act in the non-default way? What if you just want to disconnect something for a moment to plug in something else? Would the graph then recalculate based on the default geometry, giving unexpected results and possibly crashing Blender from a memory spike? Seems like more trouble than it's worth, considering all it saves you is maybe a second spent adding the input node.