Hello all, and thanks in advance.

Okay, I know this issue has been discussed extensively, both in this forum and in lots of others, but the answers I found were generally short on detail and/or intended for different workflows or software. So I thought it would be a good idea to post it here. Even though my case is fairly Blender-specific at some level, some additional, more general theoretical info would be much appreciated, since there isn’t much out there and it could also help other people with different needs.

My issue is the following: I have an asset (a frying pan) already modelled and properly UV mapped. However, I’m experiencing some serious UV stretching when trying to work non-destructively with a subsurf modifier. I’ve come up with a fair number of ideas for workarounds, and only one (the one that’s not ideal) is currently plausible.

The workarounds I came up with were:

- To change my model’s topology at the spots most affected by distortion, trying to cleverly add support loops without changing the general shape of the asset (sounds good, doesn’t work).

- To apply the subsurf modifier, then do my unwrapping and just deal with the high-res mesh (not a “workaround” at all, since the whole non-destructive idea is lost).

- To work on a separate high-res mesh with the subsurf modifier applied and UV unwrapped after the fact, do all my texture painting on that mesh, and then use the result on the low-res one, with the “Use Subsurf Modifier” option checked for the unwrap command and the “Subdivide UVs” option checked in the subsurf modifier panel.

Of course, the one I said was plausible is the second, and it isn’t really a solution to my problem at all.

What I don’t get is why the third one doesn’t work (or, if it can, what I’m doing wrong).

To summarise, here’s what I did to check whether this could work: I duplicated my low-res mesh, applied the subsurf modifier, UV unwrapped it, and applied a checker texture, which I then baked to an image. After that, I prepared my low-res mesh with the options already mentioned enabled and applied the baked texture to it. The difference was slight but horrible. Maybe all of this was unnecessary, since you could reach the same conclusion by comparing the two meshes with standard UV grids (you would see distortion on the low-res one, and the high-res one would be fine and dandy).

This last part is what I don’t get. Why isn’t Blender arriving at the same UV coordinates both ways? Shouldn’t it? I’ve thought about how geometry is interpolated when it’s subdivided, and in my head the result should be the same. But I’m no expert, so maybe someone can correct me. And if this all sounds nice but simply isn’t doable, is there any workaround for it, well known or not?
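To make my mental model concrete, here is a toy 1D version of the two routes in plain Python. It is only a sketch under my own assumptions, not what Blender does internally: the “mesh” is a made-up polyline profile, “unwrapping” is just normalised distance along the line, the subdivision is the standard cubic B-spline rule for curves, and the low-res UVs are carried along by simple midpoint interpolation. All the function names are my own.

```python
import math

def subdivide(points):
    """One subdivision step on an open polyline (endpoints stay fixed).

    Existing interior points are smoothed (1/8, 3/4, 1/8) and a new
    point is inserted at the midpoint of every original segment.
    """
    n = len(points)
    out = []
    for i, p in enumerate(points):
        if i == 0 or i == n - 1:
            out.append(p)
        else:
            a, b = points[i - 1], points[i + 1]
            out.append(tuple(0.125 * a[k] + 0.75 * p[k] + 0.125 * b[k]
                             for k in range(2)))
        if i < n - 1:
            q = points[i + 1]
            out.append(tuple(0.5 * (p[k] + q[k]) for k in range(2)))
    return out

def arc_length_u(points):
    """Toy 'unwrap': u is the distance along the polyline, scaled to 0..1."""
    dist = [0.0]
    for p, q in zip(points, points[1:]):
        dist.append(dist[-1] + math.dist(p, q))
    return [d / dist[-1] for d in dist]

def subdivide_u_linear(us):
    """Carry UVs through a subdivision step by plain midpoint interpolation."""
    out = []
    for i, u in enumerate(us):
        out.append(u)
        if i < len(us) - 1:
            out.append(0.5 * (u + us[i + 1]))
    return out

# Hypothetical low-res profile with a sharp bend (think: where a handle starts).
low = [(0.0, 0.0), (4.0, 0.0), (4.0, 1.0), (5.0, 1.0)]

# Route A: unwrap the low-res shape, then let subdivision carry the UVs along.
u_route_a = subdivide_u_linear(arc_length_u(low))

# Route B: subdivide first, then unwrap the resulting high-res shape.
high = subdivide(low)
u_route_b = arc_length_u(high)

# Largest mismatch between the two sets of UVs.
max_diff = max(abs(a - b) for a, b in zip(u_route_a, u_route_b))
print(f"largest UV mismatch: {max_diff:.4f}")
```

In this toy the two routes do not land on the same coordinates, because the smoothing moves the 3D points while the UVs only get midpoints. If something similar happens in Blender, that could be part of what I’m seeing, but I can’t say whether this simplification matches the real behaviour.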

With all that said, I’ll leave some images to help illustrate the problem.

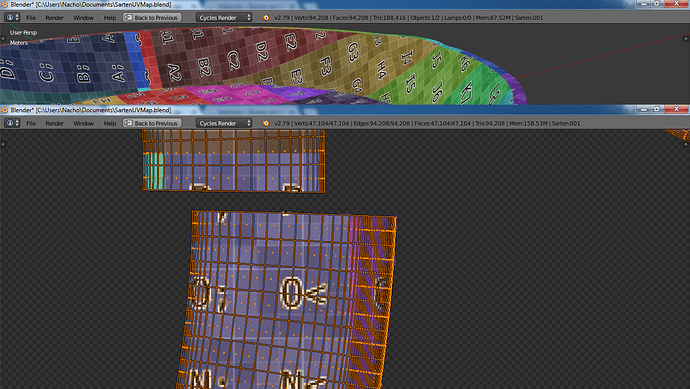

These are the high-res mesh’s UV coordinates over the baked grid.

And these are the low-res ones (you can see there’s a slight difference that ruins the whole thing).

Note that I applied the subsurf modifier so the UV coordinates could be seen properly in the editor, but they were generated beforehand.

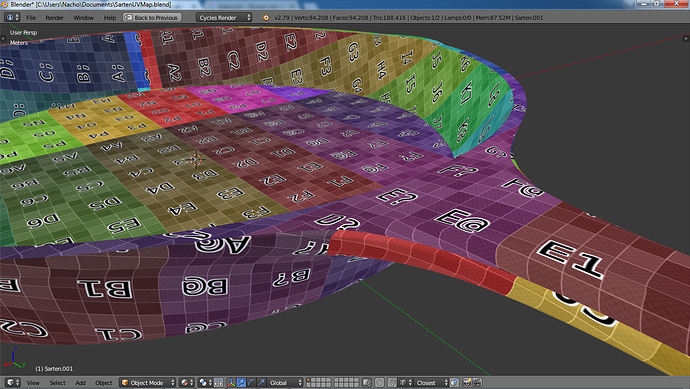

And here is the low-res one with a UV grid applied to it to illustrate the degree of distortion (it’s where the handle starts). It’s not that bad, but for my purposes it’s unusable.

Thanks a lot to anyone who dares to read the whole thing and respond, and sorry for the length of the post, but I figured that if I wanted detailed answers I should give detailed info.

Cheers!