Great minds… I was pondering this too. Hair and fur tend to behave as distinct groups; even hair that has not been styled tends to fall into groups naturally.

Obviously there will be stray hairs that extend out of the natural clustering. For example, hair that is combed backward on the head and downward over the shoulders will have hairs of unequal length that stray out of the cluster group. But in the main, the hairs in a cluster naturally follow the same curve.

My idea, very similar to kakachiex2’s examples, would be to create a mesh object or cloth object that, when rendered, would produce curves or particle guides from which realistic hair strands could then be rendered. The advantage is that the existing cloth and soft body dynamics (collision, forces, etc.) could be used, and these are fairly robust, actively maintained and, with some exceptions, work.

Further thinking brought up additional requirements…

The mesh object must be constrained so that it can only be built by extruding a “base emitter area” (multiple extrusions can be used). You can still move and manipulate the mesh, but you can only increase topology detail with loop cuts that return to the point where the cut started. The reason is that the curves are emitted from the base plane and must be averaged out along the whole extrusion. Adding non-uniform detail would make it hard to calculate where the curves should go, because it would no longer be clear which part of the mesh is the start and which is the end. With extrusion only, the tip always sits in a logical line from the root.
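To show why the extrusion-only rule makes the calculation easy, here is a rough sketch in plain Python (not Blender's API). It assumes the mesh can be read as an ordered list of closed loops, root loop first and tip loop last, all with the same vertex count, which is exactly what extrusions plus full loop cuts would give you. The name `guides_from_rings`, the `per_edge` parameter and the data layout are made up for illustration.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def lerp(a: Vec3, b: Vec3, t: float) -> Vec3:
    """Linear interpolation between two points."""
    return tuple(x + (y - x) * t for x, y in zip(a, b))


def guides_from_rings(rings: List[List[Vec3]], per_edge: int = 1) -> List[List[Vec3]]:
    """Build root-to-tip guide curves from an extruded mesh.

    rings[0] is the root emitter loop and rings[-1] is the tip loop
    (hypothetical layout). Each guide takes one point from every ring,
    so each extrusion or loop cut adds one point to every guide.
    per_edge extra guides are interpolated between neighbouring vertices.
    """
    if not rings:
        return []
    count = len(rings[0])
    if any(len(ring) != count for ring in rings):
        # Non-uniform detail: root and tip can no longer be matched up.
        raise ValueError("every ring must have the same number of vertices")

    guides = []
    for i in range(count):
        j = (i + 1) % count  # neighbour on the same closed loop
        for k in range(per_edge + 1):
            t = k / (per_edge + 1)  # t == 0 is the mesh vertex itself
            guides.append([lerp(ring[i], ring[j], t) for ring in rings])
    return guides


# A square emitter extruded twice gives three rings of four vertices.
rings = [[(0, 0, z), (1, 0, z), (1, 1, z), (0, 1, z)] for z in (0.0, 1.0, 2.0)]
guides = guides_from_rings(rings, per_edge=1)
print(len(guides), "guides with", len(guides[0]), "points each")
```

If a ring had a different vertex count (the non-uniform detail case), the function has no sensible way to pair root and tip, which is why the sketch simply refuses it.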

I guess you could think of it as working like the Play-Doh heads that grow hair: for every squeeze you extrude longer hair. In the system above, every extrude pulls the hair longer and adds more points along the curve.

I think the main advantage of this type of system is that calculations are done on the mesh, and not on huge numbers of hairs or hair guides. Self collision and object collision work far better on a mesh than on individual strands (or clusters of strands), and the mechanics are already there for things like dynamic paint to affect the mesh.

If you want finer detail then, instead of a mesh extrusion, you would use a cloth extrusion. This would generate a single layer of hairs, which would then create the illusion of individual strands. So it would be possible to create the classic Final Fantasy effect where she stands on the battleground and the hair at her side (fringe?) blows in the wind. The cloth would be set to silk, or some such, and would be one hair (or a preset number of hairs) thick… if that makes sense.

Obviously the hair strands that are generated along the computed hair guides would still allow all the usual hair adjustments such as random length, frizz, etc.
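As a rough idea of what those adjustments could look like once a guide exists, here is a small plain-Python sketch. It assumes a guide is just a root-to-tip list of points; `vary_strand`, `min_length` and `frizz` are hypothetical names, and trimming by point count is a crude stand-in for a real length control.

```python
import random
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def vary_strand(guide: List[Vec3], rng: random.Random,
                min_length: float = 0.7, frizz: float = 0.02) -> List[Vec3]:
    """Return one strand that follows the guide with random length and frizz.

    Length: keep a random fraction (min_length..1.0) of the guide's points.
    Frizz: jitter each point, scaled from zero at the root to `frizz` at
    the guide's tip, so the root stays attached to the emitter.
    """
    keep = max(2, round(len(guide) * rng.uniform(min_length, 1.0)))
    last = max(1, len(guide) - 1)
    strand = []
    for idx, (x, y, z) in enumerate(guide[:keep]):
        amount = frizz * idx / last
        strand.append((x + rng.uniform(-amount, amount),
                       y + rng.uniform(-amount, amount),
                       z + rng.uniform(-amount, amount)))
    return strand


# Ten-point straight guide; a seeded generator keeps results repeatable.
guide = [(0.0, 0.0, float(i)) for i in range(10)]
rng = random.Random(1)
strands = [vary_strand(guide, rng) for _ in range(5)]
print([len(s) for s in strands])
```

Because the variation is applied per strand after the guide is computed, the mesh simulation itself never has to know about it.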

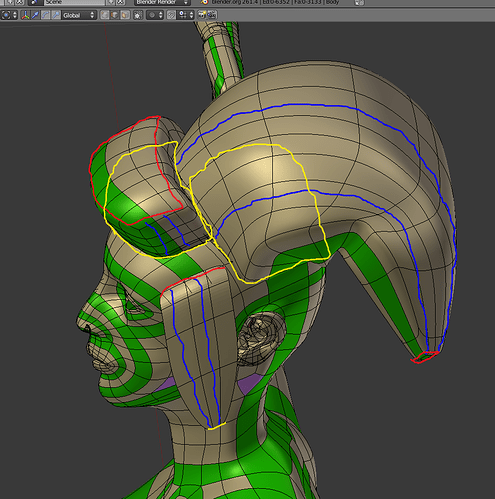

It's been a while since I modeled, but this pic is a quick example. The yellow pencil denotes the root emitting area, the red the tips, and the blue is a rough guide to direction.

I guess this is very similar to the way smoke/volumetrics work. So perhaps a good name for this is volumetric hair.