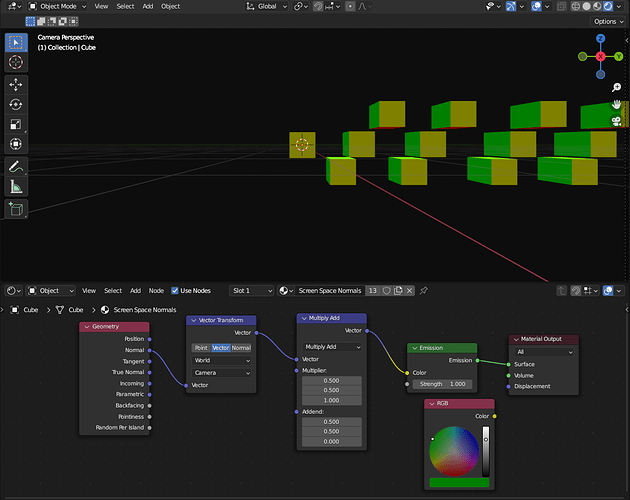

Is there a way to get normals that take the camera perspective into account? In the image, I have a couple of cubes and a camera with a focal length of 10mm. The green normals are identical on all cubes, but I would like them to reflect the perspective as well. Meaning, the green normals on the cube furthest to the right should point more towards the camera. Unfortunately, I couldn’t find a transformation that also accounts for the perspective. Is there something I am missing, or do I have to use OSL for this?

“Incoming” should have the vectors I need for the computation, but I haven’t figured out how to do it yet.
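One possible reading of "normals that consider the perspective" is to express each normal in a frame aligned with the viewing ray through that surface point, instead of a frame aligned with the camera's optical axis. The sketch below is plain Python, not a shader, and only illustrates the math. It assumes camera-space vectors with the camera looking down -Z and +Y up, and that `to_camera` is the direction from the surface point back to the camera (the role “Incoming” would play once transformed to camera space). The function name and the choice of `up` are hypothetical.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normal_relative_to_ray(normal, to_camera, up=(0.0, 1.0, 0.0)):
    """Express `normal` in a frame whose Z axis points along the view ray.

    Assumption: both vectors are in camera space. The frame is degenerate
    when the ray is parallel to `up`, which this sketch does not handle.
    """
    z = normalize(to_camera)      # from the surface towards the camera
    x = normalize(cross(up, z))   # "right" axis of the per-ray frame
    y = cross(z, x)               # "up" axis, already unit length
    n = normalize(normal)
    return (dot(n, x), dot(n, y), dot(n, z))
```

At the image center the ray frame coincides with the camera frame, so the normal is unchanged. For a point off to the right, a face looking sideways towards the optical axis gains a positive Z component, meaning it points more towards the camera, which seems to match the behaviour described above.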

Good afternoon, @DeepBlender! Assuming you are using Cycles, could you use the Ray Length output of the Light Path node as a factor to mix a different color with your green normal? If I have a moment, I’ll try to mock it up and see if it works.

If you want it to work in Eevee, I’ll have to think some more as the Ray Length output is not supported.

Light Path node page from the manual for your reference:

https://docs.blender.org/manual/en/latest/render/shader_nodes/input/light_path.html#outputs

Thanks for the quick answer!

Cycles would be fine too. Just to clarify, the normals with perspective have to be accurate. I am going to have a look at the Light Path node.

You’re welcome! Can you tell us more about what you are trying to do and why the normals have to be accurate? Maybe that’ll point us to the right answer.

The goal is to create synthetic data for facial landmark detection, and I would like to try out whether normals are helpful for that. The idea is that the neural network would not only predict the landmarks (or in my case, the UV coordinates), but also the normals. I already know that this is not going to work with the direct normals, so I need to take the perspective into account.

Something similar to that:

I couldn’t find a way to do that in a shader, so I will most likely use a script and compute the normals pixel by pixel like that. OSL might work too, but I am too confused by it right now.
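For a pixel-by-pixel script, the per-pixel viewing ray can be derived from the focal length alone. The following is a minimal sketch of a pinhole model in plain Python. It assumes a 36mm sensor width with horizontal sensor fit, no lens shift, a camera looking down -Z with +Y up, and pixel row 0 at the top of the image. `pixel_ray` is a hypothetical helper, not a Blender API.

```python
import math

def pixel_ray(px, py, width, height, focal_mm=10.0, sensor_mm=36.0):
    """Unit ray direction in camera space through the center of pixel (px, py).

    Assumptions: pinhole camera, horizontal sensor fit, square pixels,
    camera looking down -Z with +Y up, row 0 at the top of the image.
    """
    # Position on the sensor plane in millimetres, measured from its center.
    x = ((px + 0.5) / width - 0.5) * sensor_mm
    y = (0.5 - (py + 0.5) / height) * sensor_mm * height / width
    length = math.sqrt(x * x + y * y + focal_mm * focal_mm)
    return (x / length, y / length, -focal_mm / length)
```

Negating the returned ray gives the direction from the surface back towards the camera for that pixel, which a per-pixel normal computation could then use as its viewing axis.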