At the moment, global illumination derives only from the procedurally generated equirectangular map, which is built from world parameters (ground color, skyColor, fogColor, fogHeight, etc.). Soon I'll add the contribution of the objects in screen space.
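To give an idea of what "procedurally generated from world parameters" can mean, here is a minimal sketch in plain Python. It is not the engine's actual code: the function names, the z-up convention, and the way `fog_height` fades the fog away from the horizon are all assumptions made for illustration.

```python
import math

def lerp(a, b, t):
    """Linear blend between two RGB tuples."""
    return tuple(x + (y - x) * t for x, y in zip(a, b))

def equirect_direction(u, v):
    """Map normalized equirectangular coords (u, v in [0, 1]) to a unit
    direction. Assumption: z is up, v = 0 is the zenith, v = 1 the nadir."""
    azimuth = (u - 0.5) * 2.0 * math.pi
    elevation = (0.5 - v) * math.pi
    cos_el = math.cos(elevation)
    return (cos_el * math.cos(azimuth),
            cos_el * math.sin(azimuth),
            math.sin(elevation))

def sample_world(direction, ground_color, sky_color, fog_color, fog_height):
    """Hypothetical world shading: sky above the horizon, ground below,
    with fog strongest at the horizon and gone once |z| reaches fog_height."""
    z = direction[2]
    base = sky_color if z >= 0.0 else ground_color
    fog = max(0.0, 1.0 - abs(z) / fog_height) if fog_height > 0.0 else 0.0
    return lerp(base, fog_color, fog)

def build_equirect(width, height, **world):
    """Fill a height x width grid of RGB tuples, sampling pixel centers."""
    return [[sample_world(equirect_direction((x + 0.5) / width,
                                             (y + 0.5) / height), **world)
             for x in range(width)]
            for y in range(height)]
```

A map like this can be rebuilt whenever a world parameter changes, which is what makes the resulting lighting dynamic rather than baked.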

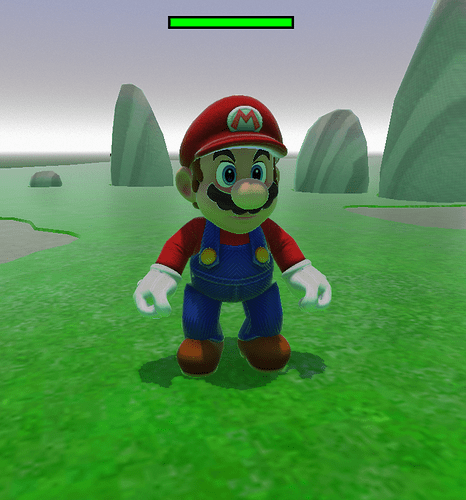

Here the green light coming from below (the grass) is evident, but it comes from dynamic image-based diffuse reflection.

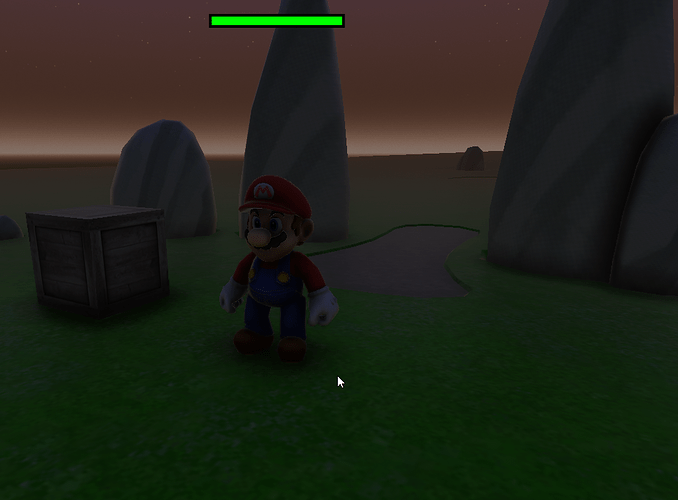

I have equipped the ambient occlusion effect with an option that reduces the AO as a function of the light intensity wherever a pixel is hit by light. At the beginning of the video, the sun is exactly at the zenith and everything is hit by sunlight, so it is as if the ambient occlusion did not exist.

Without strong light on the surfaces, the AO is more evident.
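The idea of reducing AO with light intensity can be sketched like this. It is only an illustration in plain Python, not the actual shader: the function name, the `reduction_strength` parameter, and the linear fade are assumptions.

```python
def clamp01(x):
    """Clamp a value to the [0, 1] range."""
    return max(0.0, min(1.0, x))

def lit_ambient_occlusion(ao, light_intensity, reduction_strength=1.0):
    """Fade the AO term towards 1.0 (no occlusion) as direct light grows.

    ao: 1.0 means unoccluded, 0.0 means fully occluded.
    light_intensity: direct light reaching the pixel (0.0 = in shadow).
    reduction_strength: hypothetical tuning knob for how fast AO fades.
    """
    fade = clamp01(light_intensity * reduction_strength)
    return ao + (1.0 - ao) * fade
```

With `light_intensity = 0.0` the AO value is returned unchanged, and with full intensity the result is 1.0, which matches the zenith-sun case where the occlusion seems to disappear.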

Instancing is currently supported, but works differently. To instance a single object, you activate the dedicated Python component, choose the mesh to be instanced, set the material parameters, and finally pass a list of tuples of the form (pos, ori, scale).
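As an illustration of what such a list might look like, here is a small sketch that builds the tuples for a regular grid of instances. The helper name `grid_instancing_data` is made up, and the orientation is assumed to be a 3x3 rotation matrix given as nested tuples; the component may expect a different format.

```python
# Assumption: orientation as a 3x3 rotation matrix (identity = no rotation).
IDENTITY = ((1.0, 0.0, 0.0),
            (0.0, 1.0, 0.0),
            (0.0, 0.0, 1.0))

def grid_instancing_data(rows, cols, spacing=2.0, scale=(1.0, 1.0, 1.0)):
    """Return a list of (pos, ori, scale) tuples laid out on the XY plane."""
    data = []
    for r in range(rows):
        for c in range(cols):
            pos = (c * spacing, r * spacing, 0.0)
            data.append((pos, IDENTITY, scale))
    return data
```

For example, `grid_instancing_data(2, 3)` gives six tuples, one per instance, ready to be handed to whatever the component accepts.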

I had already thought about creating a plugin for Blender to automate everything by exporting a file that contains exactly this data for each object, but I have not done so yet. However, the data can be obtained directly from the Blender scene with a simple script like this:

    instancingData = []

    for obj in instancedObjsList:
        pos = obj.worldPosition
        ori = obj.worldOrientation
        size = obj.worldScale
        t = (pos, ori, size)
        instancingData.append(t)

Python!

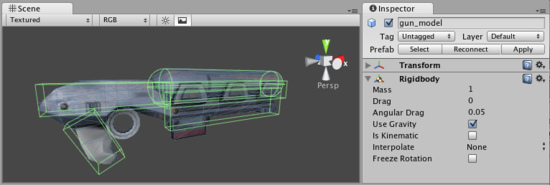

When you add an object/asset, you can attach a script to it using this code:

    # your custom python components are stored in your libs
    myPythonComponent = lib.getLogicComponent("myPythonComponentName")

    # now obj has your component attached
    pyComp = obj.pythonComponents.add(myPythonComponent)

You can hook and unhook Python components to and from objects dynamically during the game, but you can also hook them at init time by specifying them in the asset definition script.