I need a bit of help figuring out the best way to composite a scene after using the 3D camera tracker. I’ve got drone footage of a house, and I decided it would be good practice to see if I could put a CG hole in the roof.

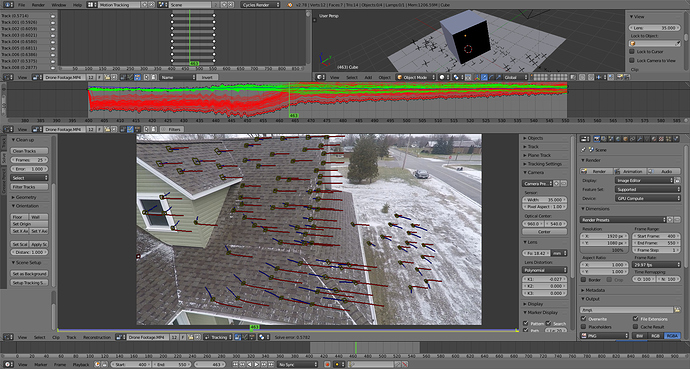

Image 1: Pixel error of 0.57 after refining. I’m happy with that.

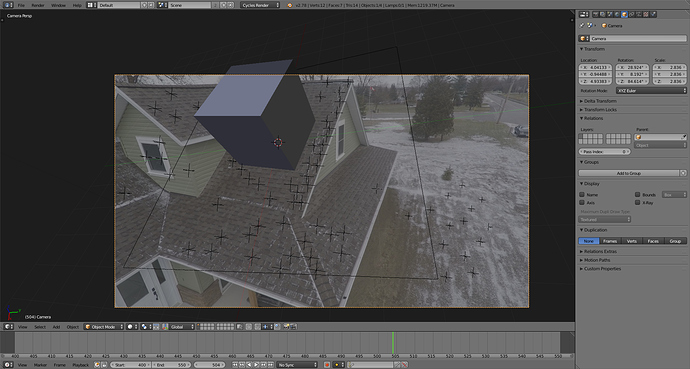

Image 2: Example object added onto roof.

Before I get to making the hole, I wanted to figure out a few problems I had while doing test renders. Any help or advice is greatly appreciated.

-

After pressing the “Set up Tracking Scene” button, the 3D viewport uses the original video as a background image from the camera’s perspective. This background image is also included when I render the animation. How would I disable this background, so that I am left with only the CG elements? My intention is to import the render into After Effects for compositing, since I haven’t learned Blender’s compositor yet.

-

I imported a fully textured bucket model I made and put it in place of the cube you see in image 2. The bucket appeared partially transparent in the render: I could see the shingles of the roof through the model. Any idea what causes this? It doesn’t seem to happen with a basic cube.

-

I refined the tracking using the “Focal length, K1” option because it seemed to lower the average pixel error the most. Now that the K1 value has changed, the clip appears distorted compared to the original. If I were to render only the CG elements, I would have trouble adding them on top of the original footage, since the original would not have this distortion. Any ideas? Am I approaching this wrong?
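
To illustrate the mismatch I mean, here is a minimal sketch. It assumes K1 is the first coefficient of a simple polynomial radial distortion model applied to normalized image coordinates (origin at the image centre); the exact model and conventions Blender uses may differ. The function names `distort_point` and `undistort_point` are hypothetical and are not Blender API.

```python
def distort_point(x, y, k1):
    """Apply a one-term radial distortion to a normalized image point.

    Assumed model (not taken from Blender's source): each point is scaled
    by (1 + k1 * r^2), where r is its distance from the image centre.
    """
    r2 = x * x + y * y
    scale = 1.0 + k1 * r2
    return x * scale, y * scale


def undistort_point(xd, yd, k1, iterations=20):
    """Approximately invert distort_point by fixed-point iteration.

    Starts from the distorted point and repeatedly divides by the scale
    factor computed at the current estimate. This converges for the small
    distortions typical of a single K1 term.
    """
    x, y = xd, yd
    for _ in range(iterations):
        r2 = x * x + y * y
        scale = 1.0 + k1 * r2
        x, y = xd / scale, yd / scale
    return x, y


if __name__ == "__main__":
    k1 = -0.1  # hypothetical value, chosen only for illustration
    centre = distort_point(0.0, 0.0, k1)
    edge = distort_point(0.5, 0.25, k1)
    print("centre:", centre)
    print("edge:", edge)
    print("round trip:", undistort_point(edge[0], edge[1], k1))
```

Under this assumption the image centre does not move, while points further out shift by an amount that grows with distance from the centre. That is why CG rendered through one version of the lens model would drift away from footage shown in the other, mostly near the frame edges.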