Hello, all,

I hope I’ve posted this in the right place. Following up on HG1’s work making compute shaders and geometry shaders available to the public, I have been trying to write a shader that I hope to eventually turn into a realtime strand renderer, building on a lot of the research that has already been done on the topic.

Therefore, I’ve been trying to build on top of the GL_LINE_STRIP renderer that HG1 made and emit a triangle strip that renders a cylinder, based on the positions in gl_in[index].gl_Position and a vector representing an adjusted normal length.
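For reference, the geometry being described can be sketched outside the shader first. This is a minimal sketch in plain Python, not the code from the attached file: the function name `tube_strip` and the `sides` parameter are made up for illustration, and the tube is left open at both ends. It builds two vectors perpendicular to the segment and walks a circle around it, emitting vertices in triangle-strip order.

```python
import math


def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def _normalize(v):
    length = math.sqrt(v[0] ** 2 + v[1] ** 2 + v[2] ** 2)
    if length == 0.0:
        raise ValueError("cannot normalize a zero-length vector")
    return (v[0] / length, v[1] / length, v[2] / length)


def tube_strip(p0, p1, radius, sides=6):
    """Return triangle-strip vertices for an open tube from p0 to p1.

    Hypothetical helper: all points are assumed to be in the same
    (object) space, and no projection is applied here.
    """
    axis = _normalize(_sub(p1, p0))
    # Pick any helper vector that is not parallel to the axis.
    helper = (0.0, 0.0, 1.0) if abs(axis[2]) < 0.9 else (1.0, 0.0, 0.0)
    u = _normalize(_cross(axis, helper))  # first perpendicular
    v = _cross(axis, u)                   # second perpendicular

    strip = []
    for i in range(sides + 1):            # +1 closes the loop
        angle = 2.0 * math.pi * i / sides
        offset = tuple(radius * (math.cos(angle) * u[k] + math.sin(angle) * v[k])
                       for k in range(3))
        strip.append(tuple(p0[k] + offset[k] for k in range(3)))
        strip.append(tuple(p1[k] + offset[k] for k in range(3)))
    return strip
```

The same loop translates fairly directly into a geometry shader, with each appended vertex becoming an EmitVertex() call; note that this needs 2 * (sides + 1) output vertices per segment.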

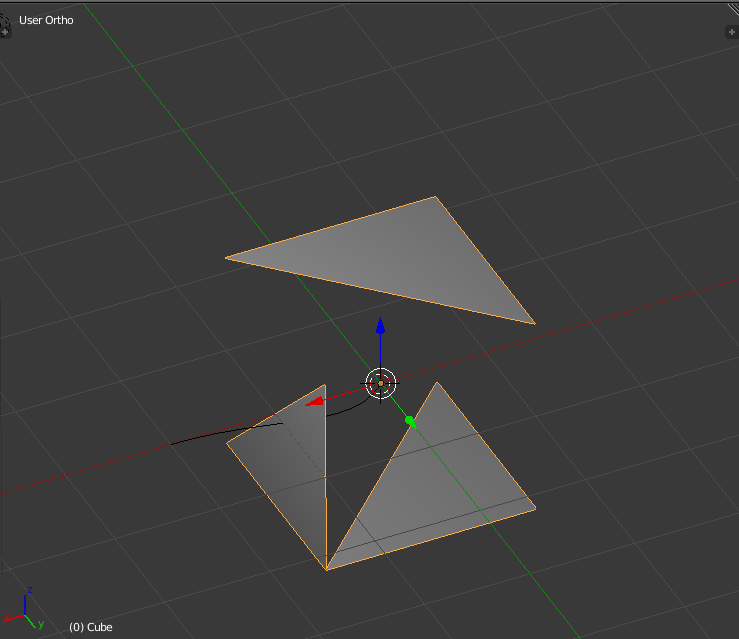

I seem to be getting the cylinders, but they are all oriented in screen space. As I understand it, that is a result of multiplying by gl_ModelViewProjectionMatrix, but when I try to use strictly object coordinates (as I understand it, all coordinates in the vertex shader are relative object coordinates unless you multiply them by gl_ModelViewProjectionMatrix), I get the following:
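One possible ordering that avoids screen-aligned offsets is to keep positions in object space in the vertex shader, build the offsets in the geometry shader, and only then multiply each emitted vertex by gl_ModelViewProjectionMatrix. The sketch below holds the GLSL in Python strings, as a BGE script would. It is not the code from the attached file, and several things are assumptions: the `#version 150 compatibility` profile, line input, the `radius` uniform, and the fact that it emits a flat ribbon (4 vertices) rather than a full cylinder to keep it short.

```python
# Pass-through vertex shader: leave the position in object space.
VERTEX_SRC = """
#version 150 compatibility
void main()
{
    gl_Position = gl_Vertex;
}
"""

# Geometry shader: offset in object space first, project last.
GEOMETRY_SRC = """
#version 150 compatibility
layout(lines) in;
layout(triangle_strip, max_vertices = 4) out;

uniform float radius;  // hypothetical uniform, half the ribbon width

void main()
{
    vec3 p0 = gl_in[0].gl_Position.xyz;
    vec3 p1 = gl_in[1].gl_Position.xyz;
    vec3 axis = normalize(p1 - p0);

    // Any helper vector that is not parallel to the segment.
    vec3 helper = abs(axis.z) < 0.9 ? vec3(0.0, 0.0, 1.0) : vec3(1.0, 0.0, 0.0);
    vec3 side = normalize(cross(axis, helper)) * radius;

    gl_Position = gl_ModelViewProjectionMatrix * vec4(p0 - side, 1.0);
    EmitVertex();
    gl_Position = gl_ModelViewProjectionMatrix * vec4(p0 + side, 1.0);
    EmitVertex();
    gl_Position = gl_ModelViewProjectionMatrix * vec4(p1 - side, 1.0);
    EmitVertex();
    gl_Position = gl_ModelViewProjectionMatrix * vec4(p1 + side, 1.0);
    EmitVertex();
    EndPrimitive();
}
"""
```

If the matrix multiply is dropped entirely, the object-space values are interpreted as clip-space coordinates, which may explain odd results when using "strictly object coordinates".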

<REMOVED BECAUSE I NEEDED TO ATTACH MORE IMAGES>

I’ve attached the blend file. The script that should render the cylinders based on the normal vector is called “MyGeometryShader” and the one where I tried to use strictly object coordinates is called “ModifiedGeometryShader”.

Sorry for any confusion caused by my poor explanation; I’m kind of burnt out looking at this.

Thanks in advance for your help.

EDIT 11/12/2015:

Okay, so I have a bit of the script working now. I did some research and decided to work on the part that seemed (to me) to need the most attention: getting the guide strands into the geometry shader, which is where I think most of my work will be.

Currently, the strand renderer works like this (as you can see in the updated file): game properties set the name of the guide Bezier (i.e. its object name inside the Blender scene) and the resolution at which to render the instanced Bezier. A controller running the Python script is then linked to an Always sensor on the desired object.
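To illustrate what "resolution" means here, this is a minimal sketch of sampling one cubic Bezier segment at a fixed number of points. It is plain Python and not taken from the attached file; the function name `sample_cubic_bezier` is made up, and the real script may read and evaluate the curve differently.

```python
def sample_cubic_bezier(p0, p1, p2, p3, resolution):
    """Return `resolution` evenly spaced (in t) points on a cubic Bezier.

    p0 and p3 are the end points, p1 and p2 the handles; all are
    (x, y, z) tuples. Hypothetical helper for illustration only.
    """
    if resolution < 2:
        raise ValueError("resolution must be at least 2")

    points = []
    for i in range(resolution):
        t = i / (resolution - 1)
        s = 1.0 - t
        # Bernstein weights for a cubic curve.
        w = (s * s * s, 3.0 * s * s * t, 3.0 * s * t * t, t * t * t)
        points.append(tuple(w[0] * a + w[1] * b + w[2] * c + w[3] * d
                            for a, b, c, d in zip(p0, p1, p2, p3)))
    return points
```

The resulting list is what would be uploaded to the geometry shader as the guide points, so its length is what drives the array size discussed below.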

Several things happen inside the Python script: the own and cont variables are set up as usual (for those of you not in the know, these are very standard, almost implicit variables in the Blender Python scripting world: they refer to the owning object and the current controller), a simple pass-through vertex shader is defined, and then the geometry shader.

Inside of the geometry shader there are two problems:

- max_vertices=value

- This is a compile-time constant (perhaps because the rasterizer needs a predefined set of streams for the output variables? I’m not too sure about the architecture, but I’m living with it; anyone who wants to correct me, feel free). That is very frustrating: it means the eventual hair polys (currently the line strips being emitted) cannot have a truly dynamic LOD. Ideally I would like to change max_vertices dynamically, so as to emit more vertices when the subject model is close to the camera and fewer when it is far away, without having to recompile the geometry shader every frame or, alternatively, set max_vertices once to an overly high static value. If anyone knows of a way to set max_vertices from a uniform integer, that would be great.

- guidePoint array length

- Inside the geometry shader script string, I concatenate the value of the owner’s “guideResolution” game property into the source to determine the array length. I think I might be able to set the array length dynamically with a shader.setUniform1i() call, using the name of that uniform as the array size… the only problem is that the amount of data I’m trying to export would then be variable while the total size, i.e. max_vertices, is still not dynamic…
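A note on both points: GLSL array sizes have to be constant expressions, so a uniform cannot size an array directly, and max_vertices is only an upper bound, so a geometry shader may emit fewer vertices than it declares. A common workaround is therefore to compile once with a fixed maximum and pass the number actually in use as a uniform. The sketch below shows that idea under several assumptions: the `#version 150 compatibility` profile, line input, and the names `MAX_GUIDE_POINTS`, `guideCount`, `build_geometry_source` and `lod_guide_count` are all made up for illustration and are not from the attached file.

```python
GEOMETRY_TEMPLATE = """
#version 150 compatibility
layout(lines) in;
layout(line_strip, max_vertices = {max_vertices}) out;

const int MAX_GUIDE_POINTS = {max_guide_points};
uniform vec3 guidePoints[MAX_GUIDE_POINTS];  // sized by a compile-time constant
uniform int guideCount;                      // entries actually in use this frame

void main()
{{
    int count = min(guideCount, MAX_GUIDE_POINTS);
    for (int i = 0; i < count; ++i)
    {{
        gl_Position = gl_ModelViewProjectionMatrix * vec4(guidePoints[i], 1.0);
        EmitVertex();
    }}
    EndPrimitive();
}}
"""


def build_geometry_source(max_guide_points):
    """Fill in the compile-time limits once, when the shader is created."""
    return GEOMETRY_TEMPLATE.format(max_vertices=max_guide_points,
                                    max_guide_points=max_guide_points)


def lod_guide_count(distance, near, far, max_guide_points, min_guide_points=2):
    """Pick how many guide points to emit for a given camera distance.

    Linear falloff from max_guide_points at `near` to min_guide_points
    at `far`; the result is what would be uploaded as guideCount.
    """
    if far <= near:
        raise ValueError("far must be greater than near")
    t = (distance - near) / (far - near)
    t = min(1.0, max(0.0, t))
    count = round(max_guide_points + t * (min_guide_points - max_guide_points))
    return max(min_guide_points, min(max_guide_points, count))
```

The value from lod_guide_count would be sent each frame with something like the setUniform1i() call mentioned above, so no recompile is needed. The trade-off is that a very large max_vertices can cost performance on some hardware even when few vertices are emitted, so the maximum is worth keeping as low as the closest LOD allows.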

The geometry shader just sets the color of the emitted line strip to white and calls it a day. The fragment shader is nothing more than a pass-through. Most of this work is pillaged from HG1 and other astounding members of the Blender community.

Some optimizations I would like to make so far are:

I’m too much of a newbie to know the best place to put the strings… I know it’s nitpicky, but since I am not recompiling the shader source on every pulse, I might as well not be rebuilding the strings on every controller pulse either. I should really move them to just before the glShaderSource() call, but having come from C# and a bracket-driven syntax to Python and a whitespace-driven syntax, I’m kind of worried about breaking the code I have… but that’s mostly because I’m tired, lazy, and feeling mostly accomplished for the day.
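One low-risk way to do this is to build the strings only the first time the script runs and stash them on the owner. This is a minimal sketch, assuming the owner can be used like a dictionary (BGE game objects support `own["name"]` style access); the key `_shader_sources` and the function names are made up for illustration.

```python
def get_shader_sources(own, build):
    """Return cached shader sources, building them on the first call only.

    `own` is anything dict-like (the game object in BGE, a plain dict in
    a test); `build` is a zero-argument function returning the sources.
    """
    if "_shader_sources" not in own:
        own["_shader_sources"] = build()
    return own["_shader_sources"]


def build_sources():
    # Placeholder strings; the real vertex/geometry/fragment sources go here.
    vertex = "void main() { gl_Position = gl_Vertex; }"
    fragment = "void main() { gl_FragColor = vec4(1.0); }"
    return {"vertex": vertex, "fragment": fragment}
```

On every later pulse of the Always sensor the call returns the stored strings without rebuilding them, and the indentation-sensitive part stays confined to one small `if` block.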

Obviously, actually creating hoses around the Beziers… I’m really not liking the look of GL_LINE_STRIP, but it’s at least good for testing and showing off result data. I know now that my theory behind making this strand renderer is more or less sound.

I’d really like to add some attenuation, a joint tree, and a constraints solver… maybe using the current gravity of the BGE world, but I’m not sure. Maybe some people would prefer a game property…? Thoughts, questions?

I need to wrap my head around the shading model for strands… hair is different from grass, etc. Once again, I’m not sure whether I should use game properties for this or just the Blender material settings that are passed through the GL pipeline. Does anyone experienced with integrating hacks like this have any ideas?

I would really like somebody to test this and see what they like and don’t like. Imagine the possibilities of scenes using huge amounts of instanced grass built off nothing more than a profile specified in game properties… getting way too ahead of myself.

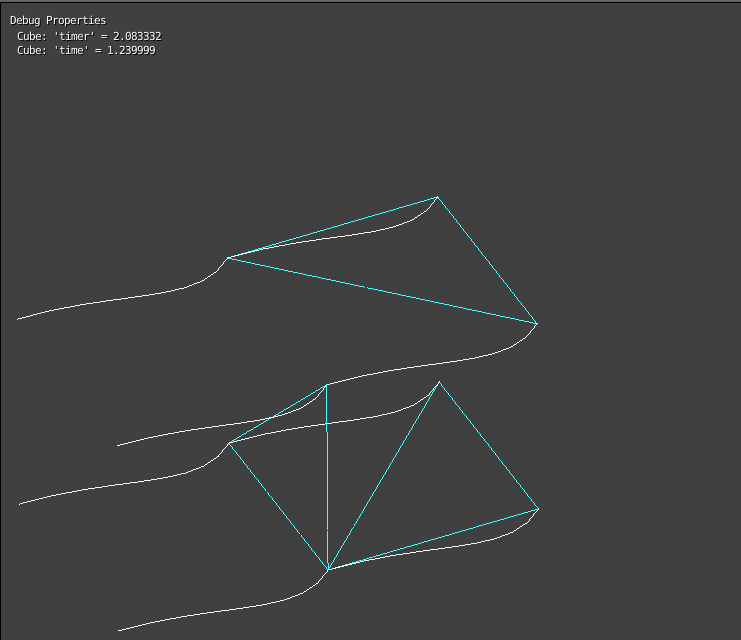

Anyhow, here’s what is getting rendered so far:

More to come!

Attachments

GeometryShaderExample_V1.3.blend (135 KB)