Hello Blender Bob,

Nice to meet you. I am sorry to drop into this thread like this, but I can assure you 100% that these movies were not done in ACES. I cannot confirm it for every single one of them, of course, but hopefully you get the idea of what I am trying to say.

I used to think so, and I had actually linked this list on my website, but this list is completely misleading. In the best-case scenario, it lists the movies that have been delivered/archived with ACES. But that's all: they do not use the ACES Output Transform, for instance.

This has been confirmed several times during last year's ACES Output Transforms Virtual Working Group (the VWG is not finished yet), and in some Technical Advisory Council (TAC) meetings as well.

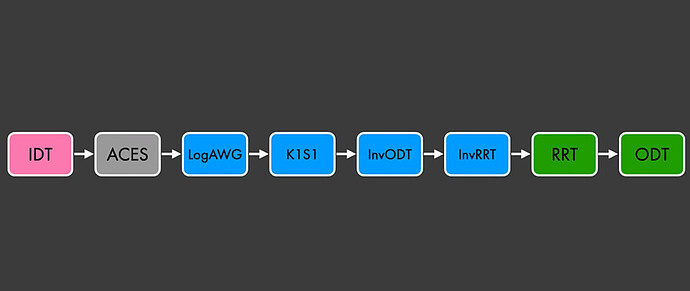

Here is the actual workflow used on most of these movies:

You have an IDT that brings your footage into the ACES/ACEScg working space, then a conversion to ARRI Wide Gamut, and finally the display rendering with K1S1. The last four blocks on the left (InvODT-InvRRT-RRT-ODT) are what we call a no-operation: they cancel each other out.
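To make the no-operation concrete, here is a minimal Python sketch. The `toy_*` functions are hypothetical stand-ins chosen only because they are easy to invert; they are not the real ACES RRT/ODT math. The point is only that an inverse followed by its forward transform hands back the display values you started with.

```python
import math

# Toy stand-ins (assumptions, NOT the real ACES transforms).
def toy_rrt(x):
    # Simple tone curve mapping [0, inf) to [0, 1).
    return x / (1.0 + x)

def toy_inv_rrt(y):
    # Exact inverse of toy_rrt, valid for y in [0, 1).
    return y / (1.0 - y)

def toy_odt(x):
    # Plain 2.4 gamma encoding as a placeholder display encoding.
    return x ** (1.0 / 2.4)

def toy_inv_odt(y):
    # Exact inverse of toy_odt.
    return y ** 2.4

def round_trip(display_value):
    # InvODT -> InvRRT takes the display-referred value "back" to scene values,
    # then RRT -> ODT brings it forward again.
    scene_value = toy_inv_rrt(toy_inv_odt(display_value))
    return toy_odt(toy_rrt(scene_value))

# Display-referred values, e.g. what comes out of the K1S1 rendering.
display_pixels = [0.0, 0.18, 0.5, 0.9]
results = [round_trip(v) for v in display_pixels]

for before, after in zip(display_pixels, results):
    print(before, after, math.isclose(before, after, abs_tol=1e-9))
```

Up to floating-point rounding, the values come out unchanged, so in this sketch the look of the picture is entirely decided by whatever produced the display values in the first place.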

I know that through my website I have unfortunately contributed to this ACES trend, but I have made my mea culpa here: https://chrisbrejon.com/articles/ocio-display-transforms-and-misconceptions/

I had the chance to talk with a few colorists/color scientists working in Hollywood. Their advice is generally the same: do not use the ACES Output Transforms.

Hope you enjoy the read,

Chris