Hello, here is a little tutorial.

I feel that I already have to apologize: I made this little script and thought I'd explain it, but the explanation turned out to be quite long. It might be doable in a much simpler fashion, but I like to do things myself. It is a bit math-heavy and hard. You can always just download the .blend and try to understand it. You have been warned.

In my game I wanted to mark a spot on the ground behind some objects. The Mouse Over Any sensor has no property filter, so I looked at the new Rasterizer.getScreenVect() function. It was not as straightforward as I had hoped, but not that hard either. All it takes is a little vector math.

getScreenVect() did not, as I had hoped, simply take pixel coordinates on the screen and turn them into a vector. Still, I thought I could use the mouse coordinates to find the point on the ground under the mouse. This only works for flat surfaces (it can be extended to cover more, but that is a lot of work).

Okay, I feel that I have to explain what a vector is. A vector is usually described by a magnitude (its length) and a direction (English is not my first language, so some of the terms might not be the standard ones). In 3D space, which is what we use in Blender, it is represented by x, y, z values. A vector can exist in any number of dimensions, but 2D and 3D are the most useful for game programming. The x, y, z values are how far the vector reaches along the x, y and z axes. A vector has no position. Vectors are drawn as arrows starting at the origin and pointing to their x, y, z coordinates.
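To make "magnitude and direction" concrete, here is a small plain-Python sketch that is not part of the game script; the helper names `length` and `normalize` are only for illustration. It splits a vector into its length and a unit vector (length 1) pointing the same way:

```python
import math

def length(v):
    """Magnitude of a 3D vector given as (x, y, z)."""
    return math.sqrt(v[0] ** 2 + v[1] ** 2 + v[2] ** 2)

def normalize(v):
    """Unit vector with the same direction as v (v must not be the zero vector)."""
    l = length(v)
    return (v[0] / l, v[1] / l, v[2] / l)

v = (3.0, 0.0, 4.0)
print(length(v))     # 5.0
print(normalize(v))  # (0.6, 0.0, 0.8)
```

The arrow (3, 0, 4) and the arrow (0.6, 0, 0.8) point the same way; only their lengths differ.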

Back to getScreenVect(): it gives a 3D direction vector that passes through everything “behind” a point on the screen. It does not take pixel x, y coordinates as I had hoped; it takes the position as a fraction of the screen size. So 0.5, 0.5 is the centre of the screen, 0.0, 0.0 is the top left corner and 1.0, 1.0 is the bottom right. Since we can get the window width and height, this isn't a real problem. In the code below, “sens” is a mouse movement sensor:

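```python
import GameLogic
import Rasterizer

cont = GameLogic.getCurrentController()
own = cont.owner

# "sensor" is the mouse movement sensor
sens = cont.sensors["sensor"]

# mouse position in pixels
x = sens.position[0]
y = sens.position[1]

# window size in pixels
h = Rasterizer.getWindowHeight()
w = Rasterizer.getWindowWidth()

# pixels -> fractions of the window (0.0 to 1.0)
X = float(x)/float(w)
Y = float(h-y)/float(h)
```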

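The float() calls are there because in Python 2, dividing one integer by another truncates the result to a whole number (300/600 gives 0, not 0.5). The reason for h-y is that, as far as I can tell, the mouse sensor and getScreenVect() count y from opposite edges of the window; if your marker ends up mirrored vertically, try float(y)/float(h) instead. The flip could also be written as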

```python
Y = 1-float(y)/float(h)
```
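The pixel-to-fraction conversion can also be tried outside the game engine as a plain function. This is a sketch only: `mouse_to_ratio` and its `flip_y` flag are made-up names, and `flip_y=True` does the same h-y flip as the script above, which you may need to switch off depending on which corner your setup treats as 0, 0:

```python
def mouse_to_ratio(x, y, w, h, flip_y=True):
    """Convert a pixel position to fractions of the window size.

    x, y: mouse position in pixels; w, h: window width and height in pixels.
    flip_y: measure y from the opposite edge (the h-y trick from the tutorial).
    """
    X = float(x) / float(w)  # float() matters in Python 2, where int/int truncates
    if flip_y:
        Y = float(h - y) / float(h)
    else:
        Y = float(y) / float(h)
    return X, Y

print(mouse_to_ratio(400, 300, 800, 600))                # (0.5, 0.5), the centre
print(mouse_to_ratio(0, 0, 800, 600))                    # (0.0, 1.0)
print(mouse_to_ratio(0, 0, 800, 600, flip_y=False))      # (0.0, 0.0)
```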

Then we pass X and Y to the function:

```python
vect = Rasterizer.getScreenVect(X,Y)
```

Okay, now we have the vector that passes through everything under the mouse cursor. Next I want to find where it hits the ground, and to do that we need some more vector math.

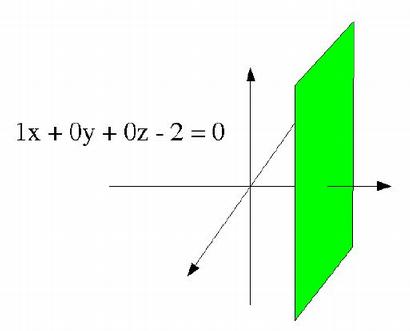

A plane can be described in two ways: with parameters, which is very messy, or with a normal. A normal is a vector pointing away from a face at a right angle (90 degrees, or π/2 radians).
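"At a right angle" can be checked with the dot product: two vectors are perpendicular exactly when their dot product is 0. A small plain-Python sketch (the `dot` helper is just for illustration) with a plane whose normal is (1, 0, 0), such as the plane x = 2:

```python
def dot(a, b):
    """Dot product of two 3D vectors."""
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

normal = (1.0, 0.0, 0.0)         # normal of the plane x = 2
along_plane = (0.0, 3.0, 4.0)    # from (2, 0, 0) to (2, 3, 4), both in that plane
print(dot(normal, along_plane))      # 0.0 -> perpendicular
print(dot(normal, (1.0, 1.0, 0.0)))  # 1.0 -> not perpendicular
```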

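We can describe a plane as an equation, $ax + by + cz + d = 0$, where $(a, b, c)$ is the plane's normal and $d$ decides where the plane lies; every set of x, y, z coordinates that satisfies the equation is part of the plane. As an example, take the plane $1x + 0y + 0z - 2 = 0$.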

If we put the point (4, 2, 5) into the equation we get $4 + 0 \cdot 2 + 0 \cdot 5 - 2 = 0$, or $4 - 2 = 0$, which is not true, so the point is not in the plane. What about (2, 6, 1)? $2 + 0 \cdot 6 + 0 \cdot 1 - 2 = 0$ gives $2 - 2 = 0$, so yes. If we drop the terms multiplied by 0 (they are always 0), all that is left is $x = 2$: every point with x-coordinate 2 is in the plane (the plane is infinitely large). The normal of the plane points along the x-axis, (1, 0, 0), and the -2 defines where on the x-axis the plane is.
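The point-in-plane test can be written as a tiny function. The `on_plane` name and the `(normal, d)` argument layout are my own choice for this sketch, with the plane written as normal · point + d = 0. Because of floating-point rounding it compares against a small tolerance instead of exactly 0:

```python
def on_plane(point, normal, d, eps=1e-9):
    """True if point satisfies normal . point + d = 0 (within eps)."""
    value = (normal[0] * point[0] + normal[1] * point[1]
             + normal[2] * point[2] + d)
    return abs(value) < eps

plane_normal = (1.0, 0.0, 0.0)  # the plane 1x + 0y + 0z - 2 = 0
plane_d = -2.0
print(on_plane((4.0, 2.0, 5.0), plane_normal, plane_d))  # False: 4 - 2 = 2
print(on_plane((2.0, 6.0, 1.0), plane_normal, plane_d))  # True:  2 - 2 = 0
```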


How does that help me? I know my ground has the normal (0, 0, 1), the z-axis, because the normal of the ground points to the sky. My ground lies at z = 0, so its equation is $0x + 0y + 1z + 0 = 0$, or simply $z = 0$: every point with z-coordinate 0 is on the ground.

So we have a vector that describes a direction from the camera, and we have a plane. We want to know where a line starting at the camera and going in the vector's direction crosses the plane. Think of it like this: how many "steps" of the vector do I need to walk from the camera to end up in the plane? Only the z-direction matters here, so if the camera's z-position plus n (the number of steps) times the vector's z-component has to be on the ground, then cam.position[2] + n*vect[2] = 0. Solving that for n with some algebra gives:

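```python
# sensor2 is an Always sensor on the camera, so its owner is the camera
cam = cont.sensors["sensor2"].owner

# number of steps along vect from the camera down to z = 0
n = -cam.position[2]/vect[2]
```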

(sensor2 is a non-pulsing Always sensor on the camera, connected to the controller on the marker object.) Now that we know n, placing an object on the ground is simple: we add n*vect to the camera's position.

```python
own.position = [cam.position[0]+n*vect[0],
                cam.position[1]+n*vect[1],
                cam.position[2]+n*vect[2]]
```
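Written as equations, with $\mathbf{c}$ the camera position, $\mathbf{v}$ the vector from getScreenVect() and $n$ the number of steps, the point we are looking for is $\mathbf{p} = \mathbf{c} + n\,\mathbf{v}$, and requiring it to lie on the ground $z = 0$ gives:

$$
c_z + n\,v_z = 0 \quad\Longrightarrow\quad n = -\frac{c_z}{v_z}, \qquad
\mathbf{p} = \left(c_x + n\,v_x,\; c_y + n\,v_y,\; c_z + n\,v_z\right)
$$

This only has a solution when $v_z \neq 0$; if the vector is parallel to the ground, the line never reaches it. By construction the last component of $\mathbf{p}$ is always 0.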

The full script (put it on the marker object, with sensor2 coming from the camera):

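```python
import GameLogic
import Rasterizer

cont = GameLogic.getCurrentController()
own = cont.owner                       # the marker object

sens = cont.sensors["sensor"]          # mouse movement sensor
cam = cont.sensors["sensor2"].owner    # Always sensor on the camera

# mouse position in pixels
x = sens.position[0]
y = sens.position[1]

h = Rasterizer.getWindowHeight()
w = Rasterizer.getWindowWidth()

# pixels -> fractions of the window (0.0 to 1.0)
X = float(x)/float(w)
Y = float(h-y)/float(h)

# direction from the camera through the mouse position
vect = Rasterizer.getScreenVect(X,Y)

# skip if the vector is parallel to the ground (would divide by zero)
if vect[2] != 0:
    # number of steps along vect from the camera down to z = 0
    n = -cam.position[2]/vect[2]
    own.position = [cam.position[0]+n*vect[0],
                    cam.position[1]+n*vect[1],
                    cam.position[2]+n*vect[2]]
```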
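The script only runs inside the game engine, so here is the same calculation as a plain-Python sketch you can try outside Blender. `ground_point` is a hypothetical helper, not part of Rasterizer; it returns None when the vector is parallel to the ground, where the division would otherwise fail:

```python
def ground_point(cam_pos, vect):
    """Point where the line cam_pos + n*vect crosses the ground plane z = 0.

    Returns None if vect is parallel to the ground (vect[2] == 0).
    """
    if vect[2] == 0:
        return None
    n = -cam_pos[2] / vect[2]  # number of steps along vect to reach z = 0
    return [cam_pos[0] + n * vect[0],
            cam_pos[1] + n * vect[1],
            cam_pos[2] + n * vect[2]]

# camera 10 units above the ground, looking forward and down at 45 degrees
print(ground_point([0.0, 0.0, 10.0], [0.0, 1.0, -1.0]))  # [0.0, 10.0, 0.0]
print(ground_point([0.0, 0.0, 10.0], [0.0, 1.0, 0.0]))   # None
```

Flipping the direction of vect (for example [0.0, -1.0, 1.0]) gives the same point, because n just changes sign; the line through the camera is the same either way.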

If you want a sloped ground, you will have to find its normal and work out a new calculation for n.
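For a sloped (but still flat) ground, the same "steps" idea works with the general plane equation. If the plane is normal · p + d = 0, substituting p = cam + n*vect and solving gives n = -(normal · cam + d) / (normal · vect). A plain-Python sketch under that assumption (the function names are made up for illustration, and you still have to find your ground's normal and d yourself):

```python
def dot(a, b):
    """Dot product of two 3D vectors."""
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def plane_point(cam_pos, vect, normal, d):
    """Where cam_pos + n*vect meets the plane normal . p + d = 0.

    Returns None if vect is parallel to the plane.
    """
    denom = dot(normal, vect)
    if denom == 0:
        return None
    n = -(dot(normal, cam_pos) + d) / denom
    return [cam_pos[i] + n * vect[i] for i in range(3)]

# flat ground z = 0: same answer as the flat-ground version
print(plane_point([0.0, 0.0, 10.0], [0.0, 1.0, -1.0], (0.0, 0.0, 1.0), 0.0))
# [0.0, 10.0, 0.0]
# ground sloping up along y, the plane z = y, i.e. 0x - 1y + 1z + 0 = 0
print(plane_point([0.0, 0.0, 10.0], [0.0, 1.0, -1.0], (0.0, -1.0, 1.0), 0.0))
# [0.0, 5.0, 5.0]
```

With normal (0, 0, 1) and d = 0 the formula reduces to n = -cam_z / vect_z, the flat-ground case.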

Okay, not the simplest of tutorials; don't feel stupid if you don't understand it.

Attachments

vector.blend (195 KB)