How can I combine (merge) two Normal outputs (not normal maps, and not with LERP)? Is it possible? If it is, I would like to learn the mathematical basis.

What do you mean by merge?

Normals are just vectors so look into vector math.

Daniel Shiffman’s Nature of Code is a good introduction to math as it applies to creating images.

If you want the average of the vectors, lerping is fine, just normalize afterwards if you want a normal vector. The vector c that bisects two unit vectors a and b is c = (a+b)/2, the half vector of a and b.
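As a minimal sketch of the averaging described above, in plain Python rather than nodes: the helper names `normalize` and `bisector` are made up for illustration, and the inputs are assumed to be unit-length vectors.

```python
import math

def normalize(v):
    """Scale v to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0.0:
        raise ValueError("cannot normalize a zero-length vector")
    return tuple(c / length for c in v)

def bisector(a, b):
    """Average a and b component-wise, then renormalize."""
    half = tuple((x + y) / 2.0 for x, y in zip(a, b))
    return normalize(half)

a = (1.0, 0.0, 0.0)
b = (0.0, 0.0, 1.0)
c = bisector(a, b)  # unit vector halfway between a and b
```

The renormalization matters because (a+b)/2 is shorter than unit length whenever a and b differ.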

Adding two vectors is just adding the components and can be done with a vector math/add node. Multiplying or dividing a vector to scale it, like in the half vector above, is just multiplying or dividing all components (can be done with a MixRGB node, but for some reason there’s no vector scale in vector math.)

I’ve had a lot of fun playing with a custom rotateAxisAngle node that I made to rotate vectors around arbitrary axes; that might be something you want instead. I used https://en.wikipedia.org/wiki/Rodrigues’_rotation_formula to build it. You have to make your own cross product node out of basic math though, because Blender’s cross product isn’t really the cross product, it’s the normalized cross product, which you don’t want.
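For reference, Rodrigues' formula rotates a vector v about a unit axis k by an angle θ as v·cos θ + (k × v)·sin θ + k·(k · v)·(1 − cos θ). A small Python sketch, with `rotate_axis_angle` as a hypothetical stand-in for the custom node, and with an unnormalized `cross` as mentioned above:

```python
import math

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    """Plain (unnormalized) cross product."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def rotate_axis_angle(v, axis, angle):
    """Rotate v about axis by angle (radians) using Rodrigues' formula."""
    length = math.sqrt(dot(axis, axis))
    if length == 0.0:
        raise ValueError("rotation axis must be non-zero")
    k = tuple(c / length for c in axis)  # the formula needs a unit axis
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    k_cross_v = cross(k, v)
    k_dot_v = dot(k, v)
    return tuple(v[i] * cos_a + k_cross_v[i] * sin_a
                 + k[i] * k_dot_v * (1.0 - cos_a) for i in range(3))

# Rotating +X by 90 degrees about +Z gives +Y.
rotated = rotate_axis_angle((1.0, 0.0, 0.0), (0.0, 0.0, 1.0), math.pi / 2)
```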

Thanks. But no, not with LERP or Add. I want to combine them exactly; I don’t want the average of the vectors.

And thanks, I know how to rotate a vector.

The average of two vectors is the vector that perfectly bisects them, at least for unit-length vectors.

No, this gives me a mixed result, which is not valid for what I need. I want a layered result: the second normal must be transformed to the first normal.

No. That merges normal maps. I want to merge normal vectors.

It’s the same math (a simplified quaternion multiplication)! The only thing it needs is to remove the scalings from [-1,1] to [0,1] and vice versa.

How? Can you explain it more clearly?

Show me what you’re doing. Nodes and inputs and meshes. The language here is unclear: “merge”, “combine” are not specific terms.

Then think about what you’re doing. You want to start with two vectors that each represent a rotation off a normal? (Rotation off the unmodified normal, possibly the true normal.) And then you want to add those two rotations? You can do that. The axis of rotation for each vector is the cross product of the original normal and the new normal. The magnitude of that rotation is the arccos of the dot product of those two vectors (assuming both are unit length). You can start with your original normal, rotate it to the position of the first modified normal, then rotate it again according to the transformation represented by the second modified normal.
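The steps above can be sketched in Python roughly as follows. This is only one reading of the description: `rotation_between` and `layer_normals` are hypothetical names, all inputs are assumed to be unit vectors, and the case where the second normal points exactly opposite the original normal is left unhandled because the axis is undefined there.

```python
import math

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def rotate_axis_angle(v, axis, angle):
    """Rodrigues rotation of v about axis (normalized here) by angle."""
    length = math.sqrt(dot(axis, axis))
    k = tuple(c / length for c in axis)
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    kxv, kdv = cross(k, v), dot(k, v)
    return tuple(v[i] * cos_a + kxv[i] * sin_a + k[i] * kdv * (1.0 - cos_a)
                 for i in range(3))

def rotation_between(a, b):
    """Axis (unnormalized) and angle of the rotation taking unit a to unit b."""
    cos_angle = max(-1.0, min(1.0, dot(a, b)))  # clamp against rounding error
    return cross(a, b), math.acos(cos_angle)

def layer_normals(original, first, second):
    """Apply the original->second rotation on top of the first normal."""
    axis, angle = rotation_between(original, second)
    if math.sqrt(dot(axis, axis)) < 1e-9:
        if dot(original, second) > 0.0:
            return first  # second equals original: nothing to add
        raise ValueError("second normal is opposite the original; axis undefined")
    return rotate_axis_angle(first, axis, angle)

original = (0.0, 0.0, 1.0)
first = (math.sin(math.radians(30)), 0.0, math.cos(math.radians(30)))
second = (math.sin(math.radians(20)), 0.0, math.cos(math.radians(20)))
combined = layer_normals(original, first, second)  # tilted 50 degrees about Y
```

Note that rotations about different axes do not commute, so swapping `first` and `second` generally gives a different result.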

The very first thing done in that link, line 1 (and 2) of the code, is to convert normal map lookups into normal vectors. “tex2D(texBase, uv).xyz*2-1” looks up the color, multiplies it by 2, and subtracts 1 to convert the lookup into a tangent space vector. If you’re talking about starting with normals instead of normal map lookups, all you have to do is skip that transformation, as you already have normalized vectors in the [-1,1] range.
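To make the remapping concrete, here is a tiny Python sketch of the color-to-vector conversion and its inverse. The names `decode_normal` and `encode_normal` are illustrative only; the arithmetic is the `*2-1` step quoted above.

```python
def decode_normal(color):
    """Map a normal-map color in [0, 1] to a vector in [-1, 1]."""
    return tuple(c * 2.0 - 1.0 for c in color)

def encode_normal(vector):
    """Map a vector in [-1, 1] back to a color in [0, 1]."""
    return tuple(c * 0.5 + 0.5 for c in vector)

flat_color = (0.5, 0.5, 1.0)            # the typical "flat" normal-map color
flat_vector = decode_normal(flat_color)  # (0.0, 0.0, 1.0)
```

If the inputs are already vectors, as with a Normal output, neither function is needed.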

Thanks. I see the HLSL code now. I will study this code and what you have said.

here’s an old nodegroup for rotating vectors with quaternion multiplications…

quaternion_rotation.blend (613.7 KB)

with it you can rotate a vector around an axis (another vector), by some angle.

I have another (simplified version) that just rotates the normal vector around some tangent vector… it’s pretty straightforward: [cross(N, Tg) * sin(angle)] + [N * cos(angle)]
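A Python sketch of that simplified expression, assuming N and Tg are unit length and perpendicular to each other (which is what lets the third term of the full Rodrigues formula drop out). The function name is made up, and the direction of positive rotation follows the cross(N, Tg) ordering given above.

```python
import math

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def rotate_normal_about_tangent(n, tg, angle):
    """cross(N, Tg) * sin(angle) + N * cos(angle), for perpendicular unit N and Tg."""
    side = cross(n, tg)
    sin_a, cos_a = math.sin(angle), math.cos(angle)
    return tuple(side[i] * sin_a + n[i] * cos_a for i in range(3))

n = (0.0, 0.0, 1.0)
tg = (1.0, 0.0, 0.0)
tilted = rotate_normal_about_tangent(n, tg, math.radians(90))  # approximately (0, 1, 0)
```

Because `side` and `n` are perpendicular unit vectors, the result stays unit length for any angle, so no renormalization is needed.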

Thanks. But the quaternion rotation node is too costly.

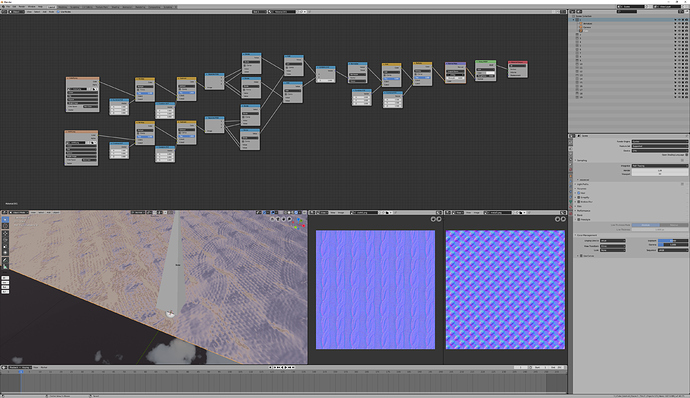

This method does not work in Blender, because Blender’s normal convention is different. The code works with normal maps but does not work with normal values (I removed the color-to-normal conversion).

float3 n1a = texture2D(base_map, uv).xyz;

float3 n2a = texture2D(detail_map, uv).xyz;

float3 n1 = float3(n1a.z, n1a.x, n1a.y); // Convert from Blender normal type

float3 n2 = float3(n2a.z, n2a.x, n2a.y); // Convert from Blender normal type

// We have to rotate 180 degrees about the Z axis to convert from the Blender normal type.

float3 n = n1*dot(n1, n2)/n1.z - n2;

But it does not work properly. The result looks like a “Blender Object Space Normal Map” rather than a normal, and it is not correct. And if I need to add more operations, it will be too costly, especially for Eevee.
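For comparison, the last line above has the shape of the blend usually published as "reoriented normal mapping". Assuming that is the source, the version for already-unpacked tangent-space normals still adds (0, 0, 1) to the base and flips the sign of the detail's x and y; those steps are not part of the color decode, so removing the decode should not remove them. A hedged Python sketch, with a made-up function name and unit tangent-space inputs assumed (z along the surface normal):

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def reoriented_blend(n1, n2):
    """Blend detail normal n2 over base normal n1 (both unit, tangent space).

    Assumes n1.z > -1 so the division below is defined.
    """
    t = (n1[0], n1[1], n1[2] + 1.0)   # base with the (0, 0, 1) offset
    u = (-n2[0], -n2[1], n2[2])       # detail with x and y negated
    k = (t[0] * u[0] + t[1] * u[1] + t[2] * u[2]) / t[2]
    return normalize(tuple(t[i] * k - u[i] for i in range(3)))

flat = (0.0, 0.0, 1.0)
detail = normalize((0.3, -0.2, 0.9))
over_flat = reoriented_blend(flat, detail)  # a flat base leaves the detail unchanged
```

This only makes sense in tangent space, where (0, 0, 1) means "along the mesh normal"; feeding it world- or object-space vectors would need a conversion first.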

I took another look at the code-- sorry, it does need to happen in tangent space, because there are some things specific to the normal.

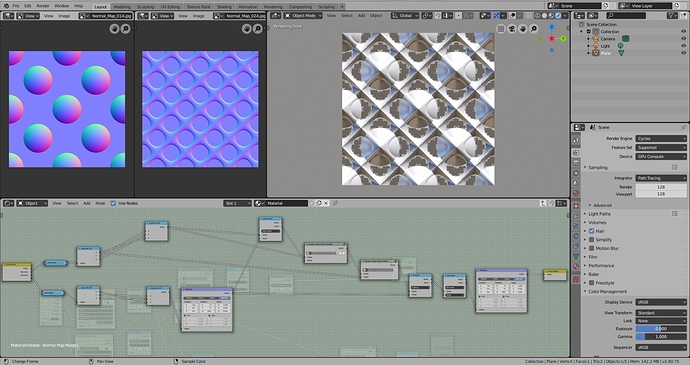

But I went ahead and recreated it (the “partial derivative blending” version.) Looks all right to me, especially considering the sources I used don’t look too hot. Scaled the normal map strength to eye.
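As a rough sketch of what a partial-derivative blend computes, assuming the common formulation where each tangent-space normal is turned into a slope (x/z, y/z), the slopes are added, and the result is renormalized. This is plain Python with a hypothetical function name, not the node group itself, and it assumes both inputs have z > 0.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def partial_derivative_blend(n1, n2):
    """Add the slopes of two tangent-space normals and rebuild a unit normal."""
    pd_x = n1[0] / n1[2] + n2[0] / n2[2]
    pd_y = n1[1] / n1[2] + n2[1] / n2[2]
    return normalize((pd_x, pd_y, 1.0))

flat = (0.0, 0.0, 1.0)
base = normalize((0.2, 0.1, 0.9))
blended_with_flat = partial_derivative_blend(base, flat)  # flat detail adds no slope
```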

As always with tangent normal maps, you need to pay attention to your tangents. This plane was built world Z-up, with +U pointing in world +X-- ie, the orientation where tangent space = object space.

If your goal is not to actually combine two normal maps in some way, but to instead combine two normals, I’m afraid that doesn’t make much sense to me, and I would need a lot more explanation about the end goal. These combinations depend not just on two normals, but additionally on a third normal and a tangent, because what we’re actually doing is combining the difference between normal1/normal2 and normal1/normal3: your mesh normal plays a role. (And a tangent is involved in the process, because we’re evaluating in tangent space so that we can know that blue/z means in the direction of normal1.)

Also: don’t rotate world space normals 180 degrees to convert. The most common thing you’d need to do to convert is to invert the green channel, while it’s still a color and not a vector. But there are a lot of things in your node group that don’t make a lot of sense to me, and it doesn’t seem to represent any of the code that I can see (none of which involves a dot product.)

Thanks, but this is “Merge Normal Map”, and I need “Combine Normals”: I need to combine “Normal” outputs (purple sockets). I only showed an example with normal maps, but it does not need to use a normal map; it has to work with all “Normal” outputs.

“Invert Green” does not work with “Normals”; it only works with “Tangent Space Normal Maps”.

I don’t know whether this is possible, but I am researching it.

What that does is merge two normal maps with one normal vector (your mesh normals).

That’s part of why I’m confused by you talking about merging two normals.

If you had two maps of world space normals, you could combine them with each other and with the mesh normal, although it’s complex and slow. It is possible to make a world space to tangent space node, which would let you plug world space vectors into the above node group. Again, complex and slow, and you’ve already indicated you’re not interested in anything with those qualities.
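A world-space to tangent-space conversion boils down to three dot products against the tangent, bitangent, and normal. A minimal Python sketch, assuming the tangent and normal are unit length and perpendicular, and assuming the bitangent is cross(normal, tangent); the bitangent sign is a convention that varies between tools, and the function name is invented for illustration.

```python
def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def world_to_tangent(v, tangent, normal):
    """Express world-space v in the (tangent, bitangent, normal) basis."""
    bitangent = cross(normal, tangent)  # assumed sign convention
    return (dot(v, tangent), dot(v, bitangent), dot(v, normal))

# A wall facing +X with its tangent along +Y: the wall's own normal
# becomes (0, 0, 1) in tangent space.
wall_normal = (1.0, 0.0, 0.0)
wall_tangent = (0.0, 1.0, 0.0)
in_tangent_space = world_to_tangent(wall_normal, wall_tangent, wall_normal)
```

For a Z-up plane with its tangent along +X, the function returns its input unchanged, which matches the "tangent space = object space" setup mentioned earlier in the thread.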

If your goal does not involve normal maps, can you show me what your goal is? Where are your two normals coming from? What are they, how are they generated? Are you actually wanting to combine them with a third normal, like a mesh normal?

Thanks. Yes, I know this method, but it is very costly. I want to combine them directly.