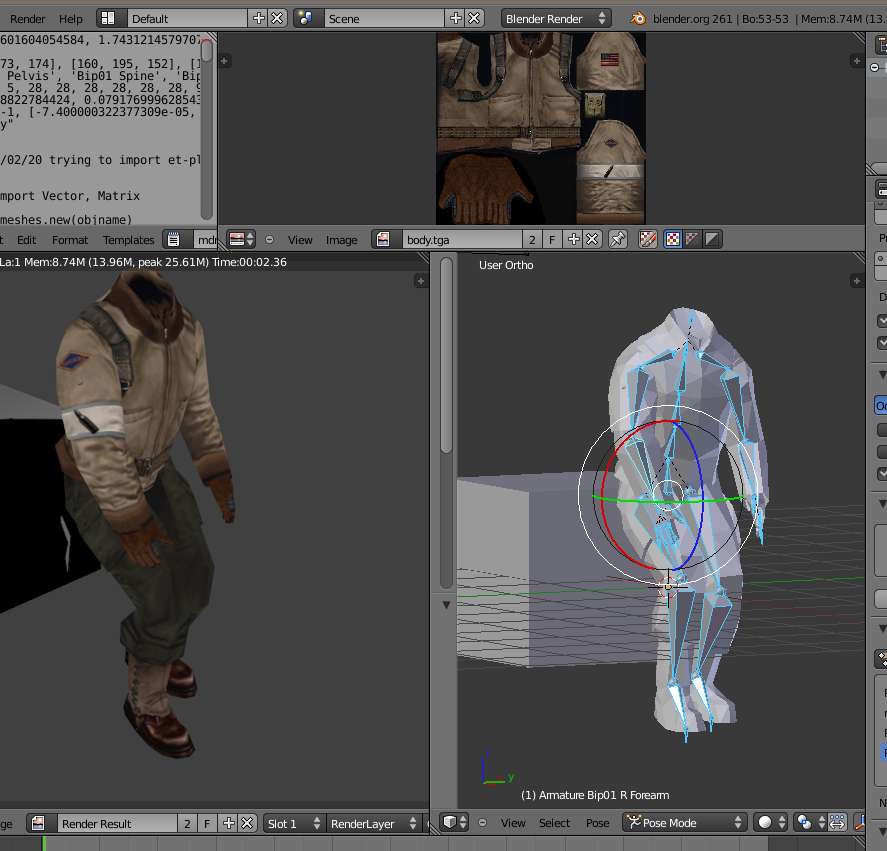

Here is one small example of how I use the export-to-md3 tool to automate things.

# test-dr / April 2012 -- need to automate this (for Blender 2.61),
# because every time some of the manually selected settings are missing after changes
#
# !!URGENT!! this uses objects from different layers
# !! all those layers have to be activated, or the objects will not be animated
# and the export runs with no animations
#

import bpy

import os.path

import io_export_md3

# create an object with its first settings to be used for export
#MD3set = io_export_md3.md3Settings("w1/t.md3", "tt", "tlog.log")
# and
# this simply exports the currently selected objects to an md3 file using those settings
#io_export_md3.save_md3(MD3set)
# things I need to set: first select the objects to be used,
# then adjust the frame ranges and set
#   savepath = output filename
#   name = internal md3 name (used for what?)
#   scale = scaling factor

md3_file_contents = {}

def create_md3file_entry(md3filename, scaling, startframe, endframe, offsetobject, objectlist):
    md3_file_contents[md3filename] = [scaling, startframe, endframe, offsetobject, objectlist]

def process_md3file_entrys():
    for md3filename in md3_file_contents:  # loop through all keys
        print("output to:", md3filename)
        print(md3_file_contents[md3filename])
        scaling, startframe, endframe, offsetobject, objectlist = md3_file_contents[md3filename]
        for obj in bpy.context.selected_objects:
            obj.select = False  # un-select anything that is currently selected
        for obj_name in objectlist:  # now select the objects to be exported to md3
            bpy.data.objects[obj_name].select = True
        #if bpy.data.objects[offsetobject].type == "EMPTY":
        if startframe > bpy.context.scene.frame_end:  # then set the end frame first
            bpy.context.scene.frame_end = endframe
            bpy.context.scene.frame_start = startframe
        else:  # it is in the possible range to be set
            bpy.context.scene.frame_start = startframe
            bpy.context.scene.frame_end = endframe
        bpy.context.scene.frame_set(startframe)  # set first frame
        bpy.context.scene.update()  # update settings, then get the possible offset of another object
        if offsetobject == "center":
            offset = [0., 0., 0.]
        else:  # use the object's world location
            offset = bpy.data.objects[offsetobject].matrix_world.to_translation()
        md3name = os.path.split(md3filename)[1]  # get the last part of the output md3 path
        md3name = os.path.splitext(md3name)[0]  # remove the extension and use this as default name inside the new md3
        MD3set = io_export_md3.md3Settings(md3filename, md3name, md3filename + ".log")
        MD3set.name = md3name
        MD3set.savepath = md3filename
        MD3set.scale = scaling
        print("subtract offset:", offset)  # for visual comparison, before the scaling is applied
        MD3set.offsetx = -offset[0] * scaling
        MD3set.offsety = -offset[1] * scaling
        MD3set.offsetz = -offset[2] * scaling
        MD3set.oneframe = (startframe == endframe)  # a single frame means a static model
        # do the export
        io_export_md3.save_md3(MD3set)
        del MD3set  # delete the settings object to start with an empty, fresh one

# arguments: output filename, scaling factor, frame range (start, end),
# offset object (an empty or the mesh itself) or "center" for 0,0,0, list of objects
# careful, this always needs checking: the offset object may be the wrong one.
# the main weapon body is attached in game to tag_weapon, so "center" is only right
# if tag_weapon is at the center location too, and naturally the mesh as well

layers_status = []

frame_status = {}

frame_status["frame_start"] = bpy.context.scene.frame_start

frame_status["frame_end"] = bpy.context.scene.frame_end

frame_status["frame_current"] = bpy.context.scene.frame_current

for i in range(20):
    layers_status.append(bpy.context.scene.layers[i])
    bpy.context.scene.layers[i] = True

create_md3file_entry("w1/w1_ak47_body.md3", 32, 1, 70, "center", [ "a_skeleton" ])

create_md3file_entry("w1/w1_ak47_animation.md3", 32, 1, 70, "center", ["tag_body", "tag_weapon", "tag_clip", "tag_barrel"])

create_md3file_entry("w1/w1_ak47_single.md3", 50., 90, 90, "center", ["ak47_skin1", "ak47_skin2", "ak47_clip_skin", "tag_brass", "tag_flash"])

create_md3file_entry("w1/w1_ak47.md3", 32., 90, 90, "center", ["ak47_skin1", "ak47_skin2", "tag_brass", "tag_flash"])

create_md3file_entry("w1/w1_ak47_clip.md3", 32., 90, 90, "tag_clip", ["ak47_clip_skin"])

# only one nade-type object; it is used for nade, smokenade and flashnade alike

create_md3file_entry("w1/w1_nade1_single.md3", 50, 190, 190, "center", ["Nade_Ico"])

create_md3file_entry("w1/w1_nade1.md3", 32, 190, 190, "center", ["Nade_Ico"])

create_md3file_entry("w1/w1_nade_body.md3", 32, 100, 170, "center", [ "a_skeleton" ])

create_md3file_entry("w1/w1_nade_animation.md3", 32, 100, 170, "center", ["tag_body", "tag_weapon"])

# for the m4 I adjusted the clip location, so its offset object is m4_clip and not tag_clip.

create_md3file_entry("w1/w1_m4_body.md3", 32, 300, 370, "center", [ "a_skeleton" ])

create_md3file_entry("w1/w1_m4_animation.md3", 32, 300, 370, "center", ["tag_body", "tag_weapon", "tag_clip", "tag_barrel"])

create_md3file_entry("w1/w1_m4_single.md3", 50., 390, 390, "tag_weapon", ["m4_skin", "m4_clip_skin", "tag_brass", "tag_flash"])

create_md3file_entry("w1/w1_m4.md3", 32., 390, 390, "tag_weapon", ["m4_skin", "tag_brass", "tag_flash"])

create_md3file_entry("w1/w1_m4_clip.md3", 32., 390, 390, "m4_clip", ["m4_clip_skin"])

create_md3file_entry("w1/w1_m16_body.md3", 32, 200, 270, "center", [ "a_skeleton" ])

create_md3file_entry("w1/w1_m16_animation.md3", 32, 200, 270, "center", ["tag_body", "tag_weapon", "tag_clip", "tag_barrel"])

create_md3file_entry("w1/w1_m16_single.md3", 50., 290, 290, "tag_weapon", ["m16_skin1", "m16_skin2", "m16_clip_skin", "tag_brass", "tag_flash"])

create_md3file_entry("w1/w1_m16.md3", 32., 290, 290, "tag_weapon", ["m16_skin1", "m16_skin2", "tag_brass", "tag_flash"])

create_md3file_entry("w1/w1_m16_clip.md3", 32., 290, 290, "tag_clip", ["m16_clip_skin"])

create_md3file_entry("w1/sm_shell.md3", 32, 0, 0, "center", ["shell"] )

# at frame 93 the flash is at center-position (parent-constraint influence = 0.)

create_md3file_entry("w1/w1_ak47_flash.md3", 32, 93, 93, "center", ["f_machinegun"])

process_md3file_entrys()

# restore the layer and frame settings that were saved before the export
for i in range(20):
    bpy.context.scene.layers[i] = layers_status[i]
bpy.context.scene.frame_start = frame_status["frame_start"]
bpy.context.scene.frame_end = frame_status["frame_end"]
# set once more, in case the first try was limited by the old frame_end
bpy.context.scene.frame_start = frame_status["frame_start"]
bpy.context.scene.frame_set(frame_status["frame_current"])
bpy.context.scene.update()

I do it this way because it often happened to me that not all the necessary layers were selected and/or I had not set the correct scaling factor. I then ended up with non-working md3 exports, and some errors were only noticed once those models were seen in a game run.
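A small pre-flight check could catch some of these mistakes (a reversed frame range, a bad scale, a misspelled object name) before the export even starts. The following is only a sketch and not part of io_export_md3: `check_md3_entries` and its `known_objects` argument are names made up for illustration, and it assumes the entries have the same layout as `md3_file_contents` in the script. It does not touch Blender at all, so it can be tried outside of it.

```python
def check_md3_entries(entries, known_objects):
    """Return a list of readable problems found in the export table.

    entries:       dict like md3_file_contents,
                   filename -> [scaling, startframe, endframe, offsetobject, objectlist]
    known_objects: set of object names that exist in the scene (hypothetical input)
    """
    problems = []
    for filename in entries:
        scaling, startframe, endframe, offsetobject, objectlist = entries[filename]
        if scaling <= 0:
            problems.append("{}: scaling must be positive, got {}".format(filename, scaling))
        if startframe > endframe:
            problems.append("{}: startframe {} is after endframe {}".format(
                filename, startframe, endframe))
        # "center" is the special value for no offset, everything else must be an object
        if offsetobject != "center" and offsetobject not in known_objects:
            problems.append("{}: offset object '{}' not found".format(filename, offsetobject))
        for obj_name in objectlist:
            if obj_name not in known_objects:
                problems.append("{}: object '{}' not found".format(filename, obj_name))
    return problems


# tiny example with one good and one broken entry
example_entries = {
    "w1/good.md3": [32, 1, 70, "center", ["a_skeleton"]],
    "w1/bad.md3": [0, 90, 80, "tag_clip", ["typo_name"]],
}
for line in check_md3_entries(example_entries, {"a_skeleton"}):
    print(line)
```

Inside Blender, `known_objects` could be built from `bpy.data.objects.keys()`, and `process_md3file_entrys()` would only be called if the returned list is empty. Layers that are switched off are not detected by this; the script above handles that by activating all layers itself.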

As you can see from the other videos in this thread, the models are only for my tests of the working code, therefore those blend files are not in the svn repository.