I wonder how well it will render in Cycles!

Send me one, I’ll test it and tell you.

That’s funny!

Wow! That thing is expensive!

Nvidia is a cancer lol

10 years ago you paid 699 USD for the top-end graphics card. Today it’s 3000. Is this inflation, or are people just willing to pay any price?

A.

Now you can make money with your GPU (GPU rendering, GPU acceleration for your professional software, mining, neural networks…). 10 years ago you mostly bought a GPU to play games.

Maybe market rules and the lack of a serious competitor.

10 years ago minimum wage was 7.25 dollars… Today… it’s 7.25

also 3 days ago you could buy a top of the line GPU for 699 lol

They need to move this out of the GeForce line of products and call them Edison cards or something xD

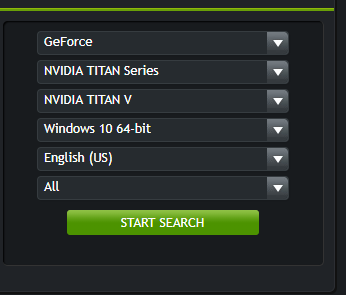

nVidia said this is not a consumer card. So 3000 is not that much… I think

The Titan V is optimized for 4x4 matrix operations. 4x4 matrix math is the basis of most neural net training and processing. It has graphics ports because it’s most likely to be used in developing deep learning code on workstations. Its price vs. hashrate makes it a poor choice for mining. Absent any direct testing with Blender, I’d guess that you’re better off with a brace of 1070-1080 cards than one Titan V.

You paid that much for the top-end gaming GPU. Top-end professional GPUs cost thousands back then, just as they do now.

This card has almost all the features of the $10K Tesla V100 at slightly lower performance. It’s actually good value if you can make use of Tensor Cores (4x4 fused multiply-add) for Deep Learning.

For raytracing, I don’t see many opportunities for using Tensor Cores. Going by specs, it’ll probably be about 50% faster than a 1080Ti.
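For anyone wondering what the 4x4 fused multiply-add mentioned above actually computes, here is a rough sketch in plain Python. It only shows the arithmetic, D = A×B + C on 4x4 matrices; the function name `fma_4x4` is made up for illustration, and the sketch does not model the reduced-precision inputs or the hardware parallelism that make Tensor Cores fast.

```python
# Plain-Python sketch of a 4x4 fused multiply-add: D = A*B + C.
# Illustrative only: fma_4x4 is a hypothetical helper, not an Nvidia API,
# and nothing here models FP16 precision or hardware parallelism.

def fma_4x4(a, b, c):
    """Return D = A*B + C for 4x4 matrices given as lists of rows."""
    n = 4
    return [
        [sum(a[i][k] * b[k][j] for k in range(n)) + c[i][j] for j in range(n)]
        for i in range(n)
    ]

# Small check: multiplying by the identity and adding a matrix of ones
# should raise every entry of A by exactly 1.
identity = [[1 if i == j else 0 for j in range(4)] for i in range(4)]
a = [[i * 4 + j for j in range(4)] for i in range(4)]  # entries 0..15
c = [[1] * 4 for _ in range(4)]

d = fma_4x4(a, identity, c)
```

Neural net layers boil down to huge numbers of these small multiply-and-accumulate steps, which is why the card suits deep learning more than a path tracer like Cycles.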

They already did that a while ago.

Sure they did

Titan GPUs are expensive to start with. 3000 is not a surprise.

Well, I don’t think it’s aimed at games or crypto miners, nor at render streets, but rather at neural networks.

With cards like that, one can drive a car powered by a neural network and lidar sensors.

But… it’s maybe a strange direction, because in the end such cars won’t use graphics cards.

$3000? I hope it is faster than four 1080 Ti or six Vega 64 cards.

I read a quote from a dev working in machine intelligence. He said that his company will use the cards in developer workstations because it can do the needed math without needing a separate graphics card to run the display. Considering the sums being thrown at AI, the price of the card is inconsequential.

Artists not understanding the target audience of a new piece of expensive technology? I’m shocked!

Also, $3000 is chump change to a company positioned to leverage this kind of processing power.