Even in this early version, with only a few features, this is already unbelievably useful! Thank you so much. Working with image sequences is pretty tedious, and the real-time compositor isn’t as readily available, flexible, or fast for 2D assets. It is also inaccessible to those still using previous versions of Blender.

I will try to list ideas and feedback as I come up with them, given you are open to suggestions, and come back here to post them in case they are of any use to you. Sorry in advance for the long message. I will write down everything I thought would be possible with a viewport-based compositor given the functionality. I imagine some of this might not be viable, but I will put it here just in case you know of any open libraries that already have some of these things solved and it becomes a possibility.

At first glance, the first thing that came to mind regarding VFX is to have simple, quick, keyframable basic effects of the kind used every day in 2D, adjustable directly in the viewport.

For example, a gradient with blending modes, linear or radial distribution, selectable control points that could be keyframed, and maybe keyframable hues. Similar to what is done in any compositor like AE or Resolve.

Another important thing for a 2D workflow would be the ability to import several sequences/videos from folders and load them in the selected order with their proper hierarchies, without having to fiddle in the viewport to get them all aligned and spaced 0.0001 apart, and then move them elsewhere if necessary.

Maybe there could be a way to import them and fit them in front of a camera (centered, stretched, fill vertically, horizontally, etc.). You select the camera you want, and the layer snaps in front of it at a selected distance (slider), maybe with an offset setting that keeps all the layers fitting the camera viewport while moving them farther away and scaling up the sequence/video to compensate. There could be a setting to let a group of layers follow the camera markers and change parents dynamically, or to just pick from a list the cameras you want the sequence to play in and exclude the others.
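To make the "fit in front of the camera" idea concrete, here is a rough sketch of the math I have in mind. It is only an illustration, not how the addon works: the function names and fit modes are made up, and it assumes a perspective camera with a landscape render where the sensor width maps to the horizontal axis (focal length and sensor width in mm, like the values a Blender camera exposes).

```python
def frame_size_at_distance(focal_mm, sensor_width_mm, res_x, res_y, distance):
    """Width and height of the camera's view rectangle at `distance` in front of it.

    Assumption: perspective camera, sensor width applied horizontally.
    """
    width = distance * sensor_width_mm / focal_mm
    height = width * res_y / res_x
    return width, height


def layer_scale(image_w, image_h, frame_w, frame_h, mode="fit"):
    """Scale factors (x, y) that place an image plane inside the view rectangle.

    The mode names are hypothetical labels for the options listed above.
    """
    sx = frame_w / image_w
    sy = frame_h / image_h
    if mode == "stretch":      # ignore aspect ratio, cover the frame exactly
        return sx, sy
    if mode == "fit":          # whole image visible, may leave bars
        s = min(sx, sy)
    elif mode == "fill":       # frame fully covered, image may be cropped
        s = max(sx, sy)
    elif mode == "horizontal": # match frame width
        s = sx
    elif mode == "vertical":   # match frame height
        s = sy
    else:
        raise ValueError(f"unknown mode: {mode}")
    return s, s


# Example: 50 mm lens, 36 mm sensor, 1920x1080 render, layer 1 unit away.
frame_w, frame_h = frame_size_at_distance(50.0, 36.0, 1920, 1080, 1.0)
scale = layer_scale(1.0, 1.0, frame_w, frame_h, mode="fill")
```

Since the view rectangle grows linearly with distance, pushing a layer twice as far away just means doubling its scale, which is what the offset setting would do for each layer.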

That’s getting a lot more complicated, but I’m thinking of ways to replace as much of the basic functionality of compositors as possible. It’s very tedious work to get sequences out of drawing software, then into Blender, then back, then into a compositor, etc.

Another, simpler effect could be glow with threshold, radius, and intensity settings, just like in AE. Imagine being able to import a .PNG sequence of a character, then another PNG sequence of a fireball, then another PNG sequence of the darker details of the fireball, and being able to create a glow effect on each of those fireball layers independently, even changing the blending modes so it looks better.
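In case it helps to show what I mean by threshold/radius/intensity, this is the usual recipe as far as I understand it: keep only what is brighter than the threshold, blur that, and add it back on top. The sketch below is plain Python on a grayscale image stored as nested lists, with a box blur standing in for whatever blur the real effect would use. All names are made up for illustration.

```python
def bright_pass(img, threshold):
    """Keep only the part of each pixel that exceeds the threshold."""
    return [[max(p - threshold, 0.0) for p in row] for row in img]


def box_blur_1d(values, radius):
    """Box blur of one row; pixels outside the image count as black."""
    size = 2 * radius + 1
    return [sum(values[max(0, i - radius): i + radius + 1]) / size
            for i in range(len(values))]


def box_blur(img, radius):
    """Separable box blur: rows first, then columns."""
    rows = [box_blur_1d(row, radius) for row in img]
    cols = [box_blur_1d(list(col), radius) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]


def glow(img, threshold=0.8, radius=1, intensity=1.0):
    """Additive glow: original + intensity * blurred bright pass."""
    halo = box_blur(bright_pass(img, threshold), radius)
    return [[p + intensity * h for p, h in zip(row, halo_row)]
            for row, halo_row in zip(img, halo)]


# A single bright pixel spreads a faint halo onto its neighbours.
image = [[0.0, 0.0, 0.0],
         [0.0, 1.0, 0.0],
         [0.0, 0.0, 0.0]]
result = glow(image, threshold=0.5, radius=1, intensity=1.0)
```

The last line of `glow` is an additive blend; swapping it for screen or another mode would be where the per-layer blending mode comes in.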

Combined with the ability to keyframe a gradient and change the brightness and hue, you could adjust on the fly a lot of the things usually done outside of Blender.

Another thing that would help in a scenario like that is something I saw in your Grease Pencil addon. If you are able to reproduce the extrude inner/outer shadow effect with a 2D shader with blur controls, that would be amazing. The normal generation seems kind of useful in some scenarios, but I don’t see how converting hundreds of 4K .PNG sequences into Gpen strokes/fills to generate meshes to get normals to get that effect would be viable. I don’t know much about this, though, so I imagine there are tricks to get that kind of look from 2D shaders.

Something that would give a better result than generating a flat shadow from the border of a PNG/video, or than automatic mesh/normal generation, could be a way to select a curve that defines the shadow profile. If you wanted something flatter, like the anime movie Promare, you would use a flat curve and just position it on the X and Y axes of the texture (maybe another control point to keyframe?). If it’s something a bit more complex, like a car, you could move a spline automatically projected on top of the video/sequence plane, and the VFX could automatically fill the area under the spline or over it (selectable by a toggle).

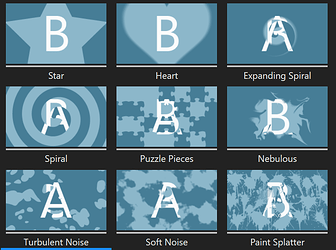

The most common effects I’ve seen are Gaussian blurs, camera lens-type blurs with gain controls, and glow with different blur shapes like equirectangular/radial. You could get really creative with this: imagine having an inverse-square falloff on the blur effect so it looks natural and soft instead of like an airbrush, plus different tints and gradient colors, chromatic aberration, masking, 16 to 32bpc, etc.
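To show the difference I mean between an inverse-square falloff and a Gaussian one, here is a small sketch that builds both as normalized 1D blur kernels. The exact formula is my own assumption: I use 1 / (1 + (d / softness)^2) so the center doesn’t divide by zero. Names and defaults are just for illustration.

```python
import math


def inverse_square_kernel(radius, softness=1.0):
    """Normalized weights w(d) = 1 / (1 + (d / softness)^2) for d in [-radius, radius]."""
    raw = [1.0 / (1.0 + (d / softness) ** 2) for d in range(-radius, radius + 1)]
    total = sum(raw)
    return [w / total for w in raw]


def gaussian_kernel(radius, sigma=1.0):
    """Normalized Gaussian weights over the same range, for comparison."""
    raw = [math.exp(-(d * d) / (2.0 * sigma * sigma))
           for d in range(-radius, radius + 1)]
    total = sum(raw)
    return [w / total for w in raw]


inv = inverse_square_kernel(4, softness=1.0)
gau = gaussian_kernel(4, sigma=1.0)
# The outermost taps of `inv` carry far more weight than those of `gau`,
# which is what gives the long, soft tail around bright areas.
```

The Gaussian drops to almost nothing a few pixels out, while the inverse-square kernel keeps a faint contribution far from the center, so the glow fades out gradually instead of ending in a visible edge.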

I don’t know if you are familiar with a paid AE plugin that was widely used a while ago called Starglow. It had all these glint and glow effects made for video editing that look amazing on hand-drawn animation VFX, but it’s something you usually only see at the end of the pipeline, so it’s not so fun to work without it. Maybe there’s some documentation out there on how to achieve these.

Of course you need the typical Pixel/VHS/Retro effects. There are a lot of node setups to apply them to geometry and imported images, but I don’t know how that would behave with these layers, and if everything is self-contained it would play a lot better.

I think one that might be really good but is almost always forgotten is film grain. Not just noise with a simple blending mode. From what I’ve seen it takes a lot of resources in compositors, but maybe Blender is different. I’ve seen filters in the live compositor be 100x more performant than in the regular compositor after they started using the GPU, so maybe there’s a way to get a simpler, decent, half-realistic film grain preview in real time.
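By "not just noise with a simple blending mode" I mean something like the sketch below, where the grain is strongest in the midtones and fades out toward pure black and pure white, and changes every frame. This is a cheap approximation I am assuming for illustration, not a physical film model. The function name and the 4·p·(1−p) weighting are my own choices.

```python
import random


def add_grain(img, strength=0.1, frame=0, seed=0):
    """Add luminance-weighted Gaussian grain to a grayscale image (values 0..1).

    Seeding with the frame number gives a different but repeatable
    pattern on every frame.
    """
    rng = random.Random(seed * 100003 + frame)
    out = []
    for row in img:
        new_row = []
        for p in row:
            weight = 4.0 * p * (1.0 - p)  # 0 at black and white, 1 at mid-gray
            noisy = p + strength * weight * rng.gauss(0.0, 1.0)
            new_row.append(min(max(noisy, 0.0), 1.0))  # clamp to 0..1
        out.append(new_row)
    return out


frame_a = add_grain([[0.0, 0.5, 1.0]], strength=0.1, frame=1)
frame_b = add_grain([[0.0, 0.5, 1.0]], strength=0.1, frame=2)
```

Because it is one random value and a multiply per pixel, something like this should be cheap enough for a real-time preview, with the heavier grain left for the final export.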

Color wheels: I didn’t put them first, but I think they are what I use the most, along with brightness, exposure, hue, tint, temp, black/shadow balance, and white balance. When managing tons of layers it is difficult to maintain color consistency in a scene. Working with these in the viewport will make that a bit more challenging and will eventually require another export to merge everything together properly, but I think an easy and quick way to control the color balance of the video/textures would mitigate this a lot.

One of the most tedious things in Blender is setting up node groups to tint objects, change colors and gradients, maintain emissions with these colors and textures, and control the masking and position of such emissions/glows. If this can be done directly, it will save a lot of people hundreds of hours of interacting with the node editor for basic things that should be on a slider by default.

And the last thing I can think of off the top of my head is a way to generate god rays/shine effects with some sort of masking that takes into consideration maybe a point projected onto the plane of the video/sequence and the position of the camera?

It seems hard to do if it has to take into consideration the layers in front of it. Instead of being a light-based effect, it could work like it does in video compositors: a glow effect with a gradient ramp and a noise texture with a velocity setting, occluded with masks. Not having to deal with fog/volumetrics would also be faster.

Here’s the rest of the things I can think of at the moment:

- Radial/zoom blur with keyframable radius

- Directional Blur with same controls

- Fisheye lens (Eevee not having this by default has been very inconvenient)

- Some sort of minimum/maximum filter to control the thickness of an imported linework .PNG sequence layer

- Transparency control with masking with geometric shapes or using the position of a gradient in the plane. Imagine importing a hand drawn smoke sequence and you can pick the blurred radius around a circle or rectangle projected into the video/sequence to create extra transparency effects

- Always on top/Always on bottom toggles (no idea how this would even be possible but just in case)

- Use layer on top or bottom as Alpha Matte/binary mask

- Booleans??

- Harsh cartoon blur/motion lines/smear with spline control (this one is just crazy, but again, in case it gives you any ideas or if there’s something out there)
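
For the minimum/maximum item in the list above, this is the kind of filter I am thinking of. It is a sketch in plain Python, assuming grayscale linework with dark lines on a light background. For linework stored in the alpha channel the roles of min and max would be swapped. The function names are made up.

```python
def rank_filter(img, radius, pick):
    """Replace each pixel with pick(neighbourhood), where pick is min or max."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            window = [img[yy][xx]
                      for yy in range(max(0, y - radius), min(h, y + radius + 1))
                      for xx in range(max(0, x - radius), min(w, x + radius + 1))]
            row.append(pick(window))
        out.append(row)
    return out


def thicken_lines(img, radius=1):
    """Minimum filter: dark lines grow by `radius` pixels."""
    return rank_filter(img, radius, min)


def thin_lines(img, radius=1):
    """Maximum filter: dark lines shrink by `radius` pixels."""
    return rank_filter(img, radius, max)


# A single dark pixel on white becomes a 3x3 dark block.
page = [[1.0] * 5 for _ in range(5)]
page[2][2] = 0.0
thick = thicken_lines(page, radius=1)
```

A keyframable radius on something like this would be enough to beef up or slim down line art per layer without going back to the drawing software.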

That’s about all I can come up with