Hello,

I just shot the view outside my window and tracked it in Blender.

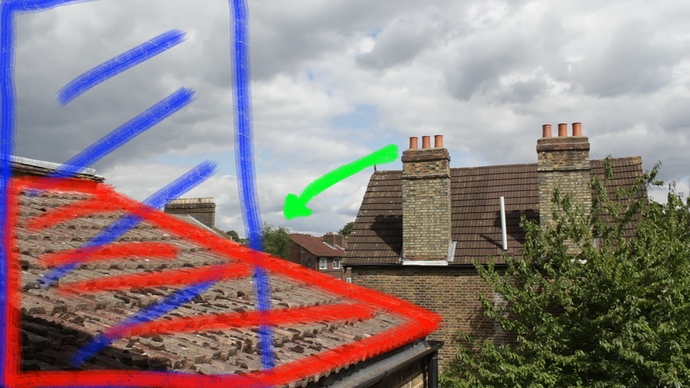

As you can see from the image, it’s a simple shot with a lot of good tracking spots (the green arrow indicates the small, slow movement of the camera, while the blue area indicates what will be out of frame at the end of the shot). In fact, I tracked and solved the camera with a very low error, about 0.3.

While everything looks fine through the camera, the trackers are not positioned where they should be in reality.

I mean, the trackers on the near left roof (red area in the image) are far from the other ones.

So I’d like to know how to tell Blender which tracker is near and which one is far, or, better, how to scale a tracker relative to the camera. When I select one tracker, the camera is selected as well, so I cannot scale that tracker without scaling everything. This matters, of course, because I need the trackers to position objects in 3D space.

Thank you in advance.

We need more info to help you, i.e. what camera you shot with, the camera settings, the camera data used for the solve, the blend file, and the raw footage if possible.

Often, when the real scene does not match your markers, it means that your camera data for the solve is bad, and the error is deceptively low…

Also check the focal length again, as the solver may report it incorrectly when it has limited parallax to go on.

In the Solve tab of the tracker, you’ll see some Orientation settings. There you can select two tracking markers and enter the real-world distance between them. This then scales your scene accordingly. Another issue that could play a part, especially near the edge of the frame, is lens distortion. Unless you undistort your footage, the empties generated from the markers won’t quite match the tracking points.
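To make the scaling step concrete, here is a minimal sketch of the arithmetic behind "two markers plus a known distance". It is plain Python, not Blender's API, and the point coordinates and the 2.5 m measurement are made-up values for illustration.

```python
import math

def scale_factor(p_a, p_b, real_distance):
    """Uniform scale that makes the distance between p_a and p_b equal real_distance."""
    solved_distance = math.dist(p_a, p_b)
    if solved_distance == 0:
        raise ValueError("the two markers coincide, so no scale can be derived")
    return real_distance / solved_distance

def apply_scale(points, factor):
    """Scale every point about the origin by the same factor."""
    return [tuple(c * factor for c in p) for p in points]

# Hypothetical solved marker positions, in arbitrary solver units.
points = [(0.0, 0.0, 0.0), (0.0, 3.0, 4.0), (2.0, 6.0, 8.0)]

# Assume the first two markers were measured 2.5 m apart in the real scene.
factor = scale_factor(points[0], points[1], real_distance=2.5)
scaled = apply_scale(points, factor)

print("scale factor:", factor)
print("scaled points:", scaled)
```

Because the same factor is applied to every point, the ratios between distances stay exactly as the solver produced them; only the overall size of the scene changes.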

Hello guys,

Sorry for the delay, but I have been very busy since then.

Well, I redid the tracking, again with a very low error, 0.11, and a solved focal length close to the one I used, 24.03mm, but the trackers in Blender don’t correspond to their real positions in space.

I shot the plate with a Canon 60D. I set the sensor width to 22.3mm, the aspect ratio to 1.0, and the focal length to 24mm. I set the distance between two markers but, again, while the tracking is really good and the trackers are very solid on the image, they don’t reproduce the real position/distance from the camera, as you can see here:

First off, based on the screenshots it looks like you need to set origin, x (or y) axis orientation, and most importantly scale.

Second, you really need to pay close attention to defkon’s message regarding undistortion.

Blendercomp,

the problem is that the empties in the red circle should be less than 4 meters from the camera, while the green trackers are more or less OK (even if the three lower-left empties should be farther away than they actually are!).

Again, the problem is not that the empties fail to follow the tracker positions in the viewport. I have already undistorted the plate, so they follow the image.

What I mean by “real position” is the distance from the camera when I shot: the tracking and camera solve are good and stable, but the trackers work only in 2D space; their depth/distance from the camera is totally wrong.

How can I fix that?

Forget about the scale and axis orientation, it has nothing to do with that: viewed from the camera the empties/trackers look good, viewed from the side they are at the wrong distance from the camera.

Is that clearer?

I would guess that your camera is not moving enough (minimal perspective change) and Blender is unable to calculate where those points should be.

We’re on the same page here regarding the problem analysis.

Considering that .3 is an almost perfect camera solution, this obviously suggests the lack of sufficient parallax and/or depth data. The tracker cannot differentiate foreground from background markers.
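As a rough illustration of why a small camera move gives the solver so little depth information, the sketch below uses a simple pinhole model to estimate how many pixels a point shifts when the camera translates sideways. The 24mm focal length, 22.3mm sensor width and 4 m near distance come from this thread; the 1920 px image width, the 40 m far distance and the two camera shifts are assumptions picked for the example.

```python
def focal_length_px(focal_mm, sensor_width_mm, image_width_px):
    """Convert a focal length in millimetres to pixels for a given sensor and image width."""
    return focal_mm / sensor_width_mm * image_width_px

def parallax_px(focal_px, camera_shift_m, depth_m):
    """Image shift in pixels of a point at depth_m when the camera
    translates sideways by camera_shift_m (pinhole model)."""
    return focal_px * camera_shift_m / depth_m

# 24mm lens on a 22.3mm wide sensor; 1920 px image width is an assumption.
f_px = focal_length_px(24.0, 22.3, 1920)

# Compare a tiny move with a generous one (both values are assumptions).
for shift in (0.02, 0.5):
    near = parallax_px(f_px, shift, 4.0)   # near roof, roughly 4 m away
    far = parallax_px(f_px, shift, 40.0)   # assumed distance of the far house
    print(f"shift {shift} m: near {near:.1f} px, far {far:.1f} px, "
          f"difference {near - far:.1f} px")
```

The difference between the near and far shifts is what lets a solver separate foreground from background, and it grows in direct proportion to how far the camera actually travels.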

Forget about the scale and axis orientation, it has nothing to do with that,

well, actually scale has everything to do with it and so do origin & orientation, especially on such a shot.

You could experiment with a number of different things. I’d pick a foreground marker and a background one, specify a real-world scale (which would be in the range of several meters/blender units), apply that, and see what happens. You could also try scaling up the whole 3D scene.

viewed from the camera the empties/trackers look good, viewed from the side they are at the wrong distance from the camera. Is that clearer?

that has been clear all along

May I ask if you shot this yourself? If so, how about shooting it over again with enough perspective shift?

If not, what exactly do you want to accomplish? If it works so well for 2D purposes, do you really need 3D? Are you planning on integrating CG elements?

Blendercomp, as well as smm, you are suggesting something similar to what a friend told me a few days ago, and I think you’re right that it could be a working solution: re-shoot with a wider panning movement of the camera.

What’s more, this friend suggested doing some color correction before tracking, because uniform lighting could affect how the camera solver judges how far away a point is.

Blendercomp, of course you can ask! I shot the entire sequence. The purpose is to remove the left chimney (something I already did in comp) and recreate one to destroy (and render) in Houdini. So yes, I have to integrate CG elements, and I need the empties to be at a distance from the camera very similar to reality. About the scale: of course I scaled the empties using measurements taken from the roof near my window, but that didn’t change the empties’ positions relative to the camera. If they are in the wrong place, they are in the wrong place even with the right scale, but I’m sure you already know that.

So I’m planning to reshoot in the next few days and I will let you know.

Thank you again for your time and suggestions. I hope you can understand my (slight) bitterness: while I watch a lot of fun videos on YouTube where everything is tracked and works, including sequences shot with a mobile camera, my sequence doesn’t work, although I thought I had shot it in the simplest, most correct way a motion tracking artist could dream of!

Don’t forget that the large pan doesn’t have to be used in the finished shot, just in the solve process to reconstruct the geometry of the scene. So get a few frames of big pan, then shoot your shot with minimal movement. Place your keyframes at the time of the big pan.

excellent point!

In TMB, Sebastian even suggests taking photos of the scene; it’s worth looking into that.

thinkinmonkey: it all depends on your objectives. If all you’re after is an explosion with minimal camera movement, maybe there’s no need to shoot and track it again.

Create 3 masks, one for the foreground roof, one for the tree (kinda middle-ground), and one for the bg house with the chimney. Using the masks, place each element on its own render layer, and render out the 3 image sequences with alpha. Then in the 3D viewport place the bg layer way back. That’s relatively easy to set up and test. Please bear in mind that you’re not really after reconstructing the geometry of an actual scene, all you’re doing is faking something. Depending on the circumstances, you might get away with less work. Just my $.02
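If you go the layered route, each image plane has to grow with its distance so that it still fills the frame when seen through the camera. Here is a small sketch of that relationship under a pinhole model, using the 24mm focal length and 22.3mm sensor width mentioned earlier in the thread. The three distances are guesses for illustration only, and this is plain Python rather than anything Blender-specific.

```python
def card_width(distance, focal_mm, sensor_width_mm):
    """Width a camera-facing plane needs at `distance` to span the full
    horizontal frame (same unit as `distance`, pinhole model)."""
    return distance * sensor_width_mm / focal_mm

# Hypothetical distances in meters for the three masked layers.
layers = {"foreground roof": 4.0, "tree": 15.0, "background house": 40.0}

widths = {name: card_width(d, 24.0, 22.3) for name, d in layers.items()}

for name, width in widths.items():
    print(f"{name}: place at {layers[name]} m, make it {width:.2f} m wide")
```

Since width scales linearly with distance, pushing a layer ten times farther back means making it ten times larger to keep the same framing.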

To be honest, this is what I normally do. I use BLAM to get the right lens length and add a bit of geometry for masking purposes. Of course that’s overkill too. As blendercomp suggests, keep it as minimal as possible, especially if there are no apparent depth changes. And don’t forget that you can use plane tracks too, which will distort to follow the shapes you choose.