The developers of the demo said there was plenty of CPU headroom available for game state and game logic, so in theory they could have made a game if they wanted. Now, as for 60 FPS: Epic was targeting 30 FPS on consoles with Nanite and Lumen, which are pretty heavy overall, and I doubt we are going to see 60 FPS with those two technologies on console without a lot of optimization. Cyberpunk is not using anything approaching those two techs, so it makes no sense to compare the two. Cyberpunk looks good because of its art direction, not necessarily because it has some amazing tech.
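To put the 30 vs 60 FPS gap in numbers: a frame rate target is a fixed time budget per frame, and doubling the frame rate halves that budget. A quick back-of-envelope calculation (plain arithmetic, nothing engine-specific):

```python
def frame_budget_ms(target_fps: float) -> float:
    """Time available to produce one frame, in milliseconds."""
    return 1000.0 / target_fps


for fps in (30, 60):
    print(f"{fps} fps -> {frame_budget_ms(fps):.1f} ms per frame")
# 30 fps -> 33.3 ms per frame
# 60 fps -> 16.7 ms per frame
```

So whatever GPU work (Nanite, Lumen, post-processing) fits in roughly 33 ms at 30 FPS would have to fit in roughly 17 ms at 60 FPS.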

Another big challenge for UE5 is the fact that the affordable end of the GPU market has stagnated (rising prices have meant no meaningful advancement in price/performance since Pascal). Barring a price crash, it will be years before UE5 users can confidently make a title designed to run at 60 frames per second.

As far as I know, the only way for gamers to get prices back down anytime soon is not to play (as in, take up other hobbies that do not involve heavy computing, like board games, gardening, or woodworking).

I’m not sure a game would run great without further optimization (they said they will be optimizing physics).

Adding AI and more physics could make it worse; the demo often drops to 20 or 25 fps.

Again, 30 fps is a no-go for a lot of players, though perhaps not for those who only play on console.

I mean that with conventional LODs, displaying such detailed models and making more detailed LOD levels, we could render the same quality while being a lot faster than with Nanite.

Because barely visible surfaces or micro-surface volumes really don’t need triangles; a normal map is enough.
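To illustrate why a normal map can stand in for tiny surface detail: diffuse lighting only depends on the normal at each pixel, so a flat surface with a perturbed normal shades like a bump. A minimal sketch using Lambert diffuse lighting and a single normal-map texel; the texel value and light direction are made up for illustration:

```python
import math


def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def decode_normal(rgb):
    """Map a [0, 1] normal-map texel back to a [-1, 1] tangent-space normal."""
    return normalize(tuple(2.0 * c - 1.0 for c in rgb))


def tangent_to_world(n_ts, tangent, bitangent, normal):
    """Transform a tangent-space normal using the TBN basis."""
    return normalize(tuple(
        n_ts[0] * tangent[i] + n_ts[1] * bitangent[i] + n_ts[2] * normal[i]
        for i in range(3)
    ))


def lambert(n, light_dir):
    """Diffuse term: cosine between the normal and the light direction."""
    return max(0.0, sum(a * b for a, b in zip(n, normalize(light_dir))))


# One flat quad facing +Z: a single geometric normal for the whole surface.
T, B, N = (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)
light = (1.0, 0.0, 1.0)

flat = lambert(N, light)
# Hypothetical texel encoding a small bump tilted toward +X.
bumped = lambert(tangent_to_world(decode_normal((0.75, 0.5, 0.9)), T, B, N), light)
print(f"flat: {flat:.3f}, normal-mapped: {bumped:.3f}")
# flat: 0.707, normal-mapped: 0.974
```

The geometry is still one flat quad; only the per-pixel normal changed, which is enough to catch the light like a bump would.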

Cyberpunk does not render small details as the player moves farther away, but if that were changed, and with new assets carrying a lot more detail, it could have looked just as detailed.

Anyway, I’m not saying Nanite is bad, just that players will need to buy more powerful graphics cards again each time such new tech becomes available.

But I guess this is how it works: there are always graphics jumps, so you need new hardware, or else you play only on medium settings or at low res.

About the PC demo, perhaps I’m wrong; perhaps it will run just fine while keeping this next-gen look even on a mid-range PC, as Nanite (and perhaps Lumen) is supposed to auto-scale well.

After watching that, I got a lot of uncanny valley. It was really quite hard to watch. I see it as showing some of the sorts of graphics and FX that we will see in future games, but it’s also showing that we are not going to reach photorealism even with that level of graphics unless some other technology is thrown in, like maybe that machine learning AI that makes each frame look more photorealistic by changing the color tones and replacing unrealistic objects like foliage with photoreal versions.

I agree. Perhaps after full-screen effects like ACES tone mapping and others, there will be new ones driven by AI that enhance realism and remove issues (but I’m not sure about the cost, as this needs a huge database and costs processing power).
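For reference, a full-screen effect like tone mapping is just a per-pixel function applied after lighting. Below is Krzysztof Narkowicz’s widely used curve fit of the ACES filmic tone curve, applied per color channel to linear HDR values; it is shown as an illustration and is an approximation, not necessarily what Unreal’s tonemapper does internally:

```python
def aces_filmic_approx(x: float) -> float:
    """Narkowicz's fitted ACES filmic curve: linear HDR value -> [0, 1] display value."""
    a, b, c, d, e = 2.51, 0.03, 2.43, 0.59, 0.14
    y = (x * (a * x + b)) / (x * (c * x + d) + e)
    # Clamp to the displayable range (the fit slightly overshoots 1.0 for very bright input).
    return min(max(y, 0.0), 1.0)


for hdr in (0.0, 0.18, 1.0, 4.0, 16.0):
    print(f"{hdr:5.2f} -> {aces_filmic_approx(hdr):.3f}")
```

Bright values are compressed smoothly toward 1.0 instead of clipping, which is a large part of the "filmic" look. An AI-driven pass would replace this fixed curve with a learned, far more expensive per-frame model.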

About the game: in the first interactive part, with cars destroying each other, the actors’ faces close to the camera did not feel like real actors to me, and when they were not talking they were too static, although it’s great progress compared to most AAA games.

Not sure all companies will go for ultra-realistic textures and lighting in every area of a game.

Naughty Dog said their level textures and materials were not about realistic tones, but about something appealing to players.

Anyway, I agree it’s powerful marketing to show a new graphics generation jump.

But I enjoyed Breath of the Wild a lot more, for example.

When will AI, animations and interactions get better?

Why is there so little or no destruction in levels in most games? Why no better automation?

When will games adopt Motion Matching as standard, while it becomes easier and easier to use or automate?
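For readers unfamiliar with the term: at its core, motion matching searches a database of animation frames for the one whose features (upcoming trajectory, foot positions, velocities) best match what the character should do next, and jumps there. A minimal brute-force sketch; the feature layout, weights and clip names are hypothetical, and production systems normalize features and use acceleration structures instead of a linear scan:

```python
from dataclasses import dataclass


@dataclass
class Frame:
    clip: str
    index: int
    # Hypothetical features: (trajectory sideways, trajectory forward, speed in m/s).
    features: tuple


def feature_cost(a, b, weights):
    """Weighted squared distance between two feature vectors."""
    return sum(w * (x - y) ** 2 for x, y, w in zip(a, b, weights))


def best_match(database, query, weights):
    """Brute-force nearest-neighbour search over every frame in the database."""
    return min(database, key=lambda f: feature_cost(f.features, query, weights))


database = [
    Frame("idle", 0, (0.0, 0.0, 0.0)),
    Frame("walk", 12, (0.0, 1.0, 1.4)),
    Frame("run", 7, (0.0, 2.5, 3.5)),
]
# What the player input asks for: moving forward at roughly walking pace.
query = (0.0, 1.1, 1.5)
weights = (1.0, 1.0, 0.5)

match = best_match(database, query, weights)
print(match.clip, match.index)  # walk 12
```

The appeal for automation is that no hand-built state machine decides the transition; the search picks it from the data.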

Graphics always evolve, but all the other domains and tools also need a new technical generation jump.

Just confirmed: the Matrix assets, tools and code will be released with Unreal 5.

The majority of the performance hit in this is coming from Lumen, not Nanite. If it were using baked lighting instead of dynamic lighting and GI, it’d probably be well over 60 fps (and even with dynamic lighting but without Lumen GI, it would possibly get a sizeable bump in framerate).

Yeah, sure. Loading and unloading massive amounts of lightmaps for a big city chunk as you drive fast through the streets would definitely be faster. Never mind the fact that baking all those maps for all the heavy, unoptimized meshes would probably be near impossible even for Epic.

Barely visible surfaces don’t get extra triangles with Nanite; in fact, with default settings the smallest triangle is about 1 pixel, and you need quite a few pixels to see a bump on a surface.

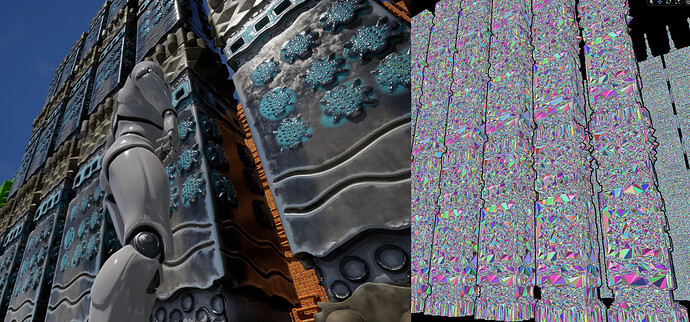
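To get a feel for the "you need quite a few pixels to see a bump" point, here is a simple pinhole-camera estimate of how many pixels a small feature covers at a given distance. The 60° vertical FOV and 1080p screen are assumptions, and this is only geometry, not how Nanite actually picks its clusters:

```python
import math


def projected_size_px(world_size: float, distance: float,
                      vertical_fov_deg: float, screen_height_px: int) -> float:
    """Approximate on-screen height, in pixels, of a small feature facing the camera."""
    half_fov = math.radians(vertical_fov_deg) / 2.0
    # Height of the visible frustum slice at this distance, in world units.
    frustum_height = 2.0 * distance * math.tan(half_fov)
    return world_size / frustum_height * screen_height_px


# A 1 cm bump (Unreal units are centimetres) seen at 1 m, 10 m and 50 m.
for distance_cm in (100.0, 1000.0, 5000.0):
    px = projected_size_px(1.0, distance_cm, 60.0, 1080)
    print(f"{distance_cm / 100:.0f} m: {px:.2f} px")
```

With these numbers a 1 cm bump spans roughly 9 pixels at 1 m, just under 1 pixel at 10 m and about 0.2 pixel at 50 m, at which point extra triangles can no longer show up as geometry.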

In fact, in the Matrix demo, when you crash into cars they switch from Nanite to regular meshes with LODs (since Nanite currently can’t handle deforming meshes), and that is when performance takes the biggest hit, especially when you hit many cars quickly.

With Nanite you can build scenes so massive that trying to load a fraction of them in Blender (or any other DCC) would be impossible, and on top of that you still maintain a stable fps, as the cost is essentially resolution dependent.
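A rough way to see why the cost tracks resolution rather than scene size: if the renderer aims for around one triangle per pixel, the number of triangles worth drawing in a frame is capped by the pixel count, however many triangles the source scene contains. A back-of-envelope sketch that ignores overdraw, shadow views and the cluster granularity Nanite actually works with:

```python
def max_useful_triangles(width: int, height: int, pixels_per_triangle: float = 1.0) -> int:
    """Upper bound on triangles that can each cover `pixels_per_triangle` pixels on screen."""
    return int(width * height / pixels_per_triangle)


for width, height in ((1920, 1080), (2560, 1440), (3840, 2160)):
    print(f"{width}x{height}: ~{max_useful_triangles(width, height):,} triangles")
```

Going from 1080p to 4K quadruples the bound, while doubling the size of the city in the source data does not change it.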

This video shows that UE5 doesn’t actually need the high end storage found in PS5 or XSX to run properly. It looks like Nanite in general is not bandwidth heavy.

It also goes into why the demo is impressive for those who are comparing the demo to other games.

This is what Lighting Scenario levels are for. Pack all the static lighting data into a single, always-loaded level’s BuiltData and don’t worry about loading and unloading it piecemeal with the tiles.

How would that look, aside from the fact that you wouldn’t have time-of-day changes and movable objects wouldn’t blend in as nicely? I also wonder who would build detailed lighting for the whole city, and how.

There are always some tradeoffs and flaws in static baked lighting, and on top of that it is a boring, time-consuming chore that is at times a pain to solve. Not to mention that iterating on a lighting setup is more or less nonexistent, because no one has time to rebake the lighting again and again just for small changes in a scene.

I don’t ever want to deal with baked lighting and what it requires again (a clean UV unwrap, sometimes just for that purpose). I don’t ever want to bake a normal map, and I don’t ever again want to care about topology.

UE5 is a blessing; losing a few fps is nothing compared to the insane amount of time needed to prepare game assets. Not to mention that all this new tech makes it easy to use UE5 as a renderer for CGI, not only for its great real-time features but also because UE now has a very fast and fantastic-looking path tracer.

Right, Lumen for sure asks a lot of the GPU.

And it will need to improve, both in performance and in supporting more rendering features, because there are some big limitations; for example, terrain is not supported for games.

Some Lumen discussion and tips

You’ll still have to do that for dynamic objects (characters, vegetation).

The way Nanite renders, it would lose all its occlusion and optimization advantages with dynamic objects like characters, vegetation and particles.

Lumen and Nanite won’t work for wild nature games that render 90% dynamic objects on screen and use translucency and particles.

I think there is a big opportunity for anyone able to bring a fully automated AI app for UV mapping and normal baking that avoids these issues.

Meanwhile, LOD should become real-time in the engine (as in Doom Eternal, for example), perhaps able to scale as well as Nanite.

Well, I guess Unreal will have to stay a hybrid of static and dynamic rendering.

The two are not fully compatible and both have missing features; there is still a lot of work to do even after release.


Let’s see what other studios will bring; I’m not sure the Matrix demo will stay the visual benchmark.

You can already get away with a LOT of vegetation and dynamic objects in UE if you’re not a game dev needing heavy optimization for different hardware. So for my purposes, UE5 already allows me to forget about the whole normal and light baking process.

And I’m pretty sure translucency is only a matter of time. Once they make something like WPO (World Position Offset) for Nanite, you can also use it for dense vegetation.

In fact, the newly added Megascans trees already have some pivot-based animations that I suspect are going to work with Nanite, and that’ll pretty much solve the issue there.

The only thing left is characters, but if 90% of your screen is Nanite, you can do whatever you want with your characters.
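The general idea behind pivot-based foliage animation is that each branch knows its pivot point, and a world-position-offset style shader rotates the branch’s vertices around that pivot by a wind-driven angle, so points far from the pivot move the most. A minimal sketch of that offset calculation; the parameter names and values are hypothetical, and this is not the actual shader used by the Megascans trees:

```python
import math


def sway_offset(vertex, pivot, time_s, strength_rad=0.05, frequency_hz=0.5):
    """WPO-style displacement that bends a branch around its pivot.

    Rotates the vertex about the pivot, around the Y axis (Z is up), by an angle
    that oscillates over time, and returns new_position - old_position.
    """
    angle = strength_rad * math.sin(2.0 * math.pi * frequency_hz * time_s)
    # Vector from pivot to vertex; rotation about Y leaves the Y component unchanged.
    dx = vertex[0] - pivot[0]
    dz = vertex[2] - pivot[2]
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    rx = cos_a * dx + sin_a * dz
    rz = -sin_a * dx + cos_a * dz
    return (rx - dx, 0.0, rz - dz)


pivot = (0.0, 0.0, 0.0)
for height in (0.0, 50.0, 100.0):
    print(height, sway_offset((0.0, 0.0, height), pivot, time_s=0.5))
```

Because the offset is computed per vertex from data baked into the mesh, the mesh itself never needs re-skinning, which is why this style of animation is a natural candidate for renderers that only handle rigid geometry.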

Well maybe for mobile game devs, sure. It might take a year or two before we can completely forget about these horrible optimization practices.

Epic’s already said that terrains will work with Lumen in the production ready UE5 release.

And in Brian Karis’s SIGGRAPH talk that I linked earlier he said that the long term plan is that absolutely everything will be Nanite, but that the initial production ready UE5 release will probably only support non-deforming opaque meshes.

Until dynamic object support is announced for Nanite, you can only use it for static meshes.

For indie games (not movies, archviz or other stuff), the graphics goal is neither hyper-realistic nor hyper-detailed worlds, and they don’t have the budget to hire more people to make awesome, super-detailed models that take weeks to complete. For those, common textures and a medium-poly workflow remain the best option in terms of content creation cost, performance and game storage size.

For mobile, a character made with 10,000 polys will always be cheaper than a million-poly original character, even compressed, and the same goes for GPU usage.

Because behind the scenes Nanite is constantly processing occlusion and calculating which triangles to display, it’s much heavier on the GPU than doing a simple distance calculation and displaying the correct LOD.

Sure, Nanite allows indies to make graphics as realistic as many AAA companies (generic characters and outdoors) without big efforts; that’s a good thing.

But for specific games that already display high detail and lots of vegetation, I’m not sure Nanite would bring much more value.
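For comparison, the classic per-object LOD decision mentioned here really is just a few comparisons. A minimal distance-based sketch; the switch distances are hypothetical, and many engines (Unreal’s static mesh LODs included) key the switch on projected screen size rather than raw distance:

```python
def select_lod(distance: float, thresholds: list[float]) -> int:
    """Pick an LOD index from camera distance.

    thresholds must be ascending; thresholds[i] is the distance at which
    LOD i+1 replaces LOD i. Beyond the last threshold the coarsest LOD is used.
    """
    for lod, limit in enumerate(thresholds):
        if distance < limit:
            return lod
    return len(thresholds)


thresholds = [1000.0, 3000.0, 8000.0]  # hypothetical switch distances in cm
for d in (500.0, 2000.0, 5000.0, 20000.0):
    print(f"{d:>7.0f} cm -> LOD{select_lod(d, thresholds)}")
```

The runtime cost is tiny and per object; the real cost of this approach lies in authoring and tuning the LOD meshes themselves.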

Nanite will only simplify some workflow, it will never replace the huge work of R&D, design, art direction, game balancing and all other things.

Awesome. Combined with the new open world manager, making open worlds will be easier than ever.

I guess Epic should work on voxel-terrain-based open worlds. They must modernize terrain: the old surface-based approach should become an option, while voxels become the new standard.

Combined with Nanite, they could also replace terrain textures with real tiling meshes, for example floor stones and branches made of real geometry.

Curious to see how other big companies will improve their 3D engines, or whether they will compete using the current workflow, which is possible with higher-detail LODs or new culling features.

I’m 100% in agreement that the 3rd character was so annoying; everything else was pretty cool.

Unreal 5 will not be a complete workflow changer.

Outside of simple objects that only use tiling textures, like rocks for example.

Complex models will need complex UV mapping for painting in Substance Painter or Mari.

It is almost impossible to make UV seams on 1 or 2 million polygon meshes, because they are made of sculpting triangles, not quads, so no edge loops can be detected; doing it yourself would take days.

So again, the workflow gets longer, as you will need to:

- reduce polygons

- bake normals to get back original details

- make the UV map to be able to paint PBR textures.
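The "bake normals" step ends with the high-poly surface normals being written into an ordinary texture. The baking itself (casting rays from the low-poly surface to find the high-poly surface) is beyond a short sketch, but the storage step is simple: each normal component in [-1, 1] is remapped to a color channel. A minimal 8-bit sketch:

```python
def encode_normal_rgb8(n):
    """Pack a unit normal (components in [-1, 1]) into 8-bit RGB, as a normal map stores it."""
    return tuple(round((c * 0.5 + 0.5) * 255) for c in n)


def decode_normal_rgb8(rgb):
    """Unpack 8-bit RGB back into an approximate normal."""
    return tuple(c / 255 * 2.0 - 1.0 for c in rgb)


flat = (0.0, 0.0, 1.0)
print(encode_normal_rgb8(flat))  # (128, 128, 255): the familiar light-blue normal map color
```

Decoding is only approximate because of 8-bit quantization, which is one reason baked normal maps can show banding on smooth, high-poly surfaces.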

But Nanite’s advantages remain:

- High-poly detail in game (200,000 polys, for example)

- No LODs to make or bother with in game

About vertex coloring: in ZBrush this is just color, not PBR vertex painting on the same object composing a model, and I don’t know of any app that can do vertex PBR painting.

Perhaps Epic will bring some solution for vertex PBR painting or importing it, although this would also increase asset size.
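What "vertex PBR" would mean in practice: instead of a single color, each vertex stores the full set of PBR inputs, and the rasterizer interpolates them across each triangle with barycentric weights. A minimal sketch; the attribute set is hypothetical and not an existing Nanite import format:

```python
from dataclasses import dataclass


@dataclass
class VertexPBR:
    """Hypothetical per-vertex PBR attributes."""
    base_color: tuple  # linear RGB
    roughness: float
    metallic: float


def interpolate(v0, v1, v2, w0, w1, w2):
    """Blend a triangle's three vertices with barycentric weights (w0 + w1 + w2 == 1)."""
    def mix(a, b, c):
        return a * w0 + b * w1 + c * w2

    return VertexPBR(
        base_color=tuple(mix(a, b, c) for a, b, c in
                         zip(v0.base_color, v1.base_color, v2.base_color)),
        roughness=mix(v0.roughness, v1.roughness, v2.roughness),
        metallic=mix(v0.metallic, v1.metallic, v2.metallic),
    )


rusty = VertexPBR((0.4, 0.2, 0.1), roughness=0.9, metallic=0.2)
polished = VertexPBR((0.9, 0.9, 0.9), roughness=0.1, metallic=1.0)

# Centre of a triangle with two rusty vertices and one polished vertex.
centre = interpolate(rusty, rusty, polished, 1 / 3, 1 / 3, 1 / 3)
print(round(centre.roughness, 3), round(centre.metallic, 3))
```

The asset-size concern is easy to estimate: base color plus roughness and metallic is five channels, so assuming one byte per channel, a million-vertex mesh carries about 5 MB of extra vertex data, and the painted detail can never be finer than the vertex spacing.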

You know, you can have a base mesh that has UVs and then sculpt it up to high res. For texturing you can use a medium-res model with UDIMs, while the full-resolution model is reserved for actual rendering.

How do you think they do it for movie assets? Do you think those are 10K models, or that they all use seamless tileable textures?

The point is to be able to use cinematic/movie-type assets that would normally be unusable anywhere other than at render time in some offline renderer.

Baking normal maps and lightmaps is going away, as it should. It might still take a while for mobile and VR hardware to catch up, but this ancient workflow is finally changing.
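For anyone who hasn’t used them: UDIMs spread a model’s UVs over a grid of texture tiles, ten per row, each tile getting its own full-resolution image. Tile numbers follow a fixed convention, starting at 1001 for the 0-1 UV square. A small sketch of that numbering:

```python
import math


def udim_tile(u: float, v: float) -> int:
    """UDIM tile number for a UV coordinate: 10 tiles per row, starting at 1001."""
    if not (0.0 <= u < 10.0) or v < 0.0:
        raise ValueError("UDIM tiles cover u in [0, 10) and v >= 0")
    return 1001 + math.floor(u) + 10 * math.floor(v)


print(udim_tile(0.5, 0.5))  # 1001
print(udim_tile(1.2, 0.3))  # 1002
print(udim_tile(0.7, 1.4))  # 1011
```

This is what lets a medium-res texturing mesh carry far more texture resolution than a single 0-1 UV layout would allow.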

PS: You can do vertex PBR painting even on an iPad. And you can easily sculpt a mesh with a few million polys there too.

Nanite scalability for low-end hardware or mobile is not Nanite scaling but common LOD.

That matters when you need your game to be multiplatform on mobile and desktop.

Less baking, but still baking

There are procedural auto-unwrap materials, but those have a high GPU cost compared to textures, depending on how much such shaders are used and on level complexity.

Also, you lose all ability to paint the mesh in Substance Painter for a very custom appearance.

How would you apply textures to the high-res sculpted mesh, as it is made of sculpting triangles, so there are no quads and no edge loops?

Or if you mean using a mid-resolution mesh, in that case you’ll lose all the details of the high-res mesh if you don’t bake a normal map.

For complex models, cylinder or cube mapping won’t work.

Lots of materials on lots of sub-objects composing a model, with tileable textures, is an approach used for movie characters, some games like Killzone 3, and other stuff.

But you need to add details like dust or dirt on top of a model that uses tileable textures; otherwise it looks too clean, which is fine for Disney movies, for example.

So you need UVs to apply your masks, layers and additional textures, for example in Mari, for movies or games.

I tried Unreal 5, and you still need a mid-resolution, retopologized object with good quads so you can UV map it and use materials and textures.

If you don’t bake the normal map, you’ll lose all the details from the multi-million-polygon high-res mesh.

Perhaps someone will make an app that can transform a high-res model made of millions of triangles into the same mesh made of millions of quads following the mesh’s topology flow, but this does not exist yet.

Meanwhile, most remesh tools are not built for that, nor for working on models with millions of polygons.

The advertising about getting your ZBrush model straight into Unreal 5 was exaggerated, as for their first demo they used baking, UV maps and textures.
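Procedural "no-unwrap" materials usually rely on triplanar (world-aligned) projection, assuming that is what is meant here: the texture is projected along the X, Y and Z axes and the three results are blended according to the surface normal. The sketch shows where the extra cost comes from, since every texture is sampled three times instead of once. The `sample` callback, the checkerboard texture and the sharpness value are illustrative assumptions:

```python
import math


def triplanar_weights(normal, sharpness=4.0):
    """Blend weights for the X, Y and Z projections, from how much the surface faces each axis."""
    w = [abs(c) ** sharpness for c in normal]
    total = sum(w)
    return tuple(c / total for c in w)


def triplanar_sample(position, normal, sample):
    """Blend three planar lookups; `sample(u, v)` stands in for one texture fetch."""
    x, y, z = position
    wx, wy, wz = triplanar_weights(normal)
    return (wx * sample(y, z)     # projection along X onto the YZ plane
            + wy * sample(x, z)   # projection along Y onto the XZ plane
            + wz * sample(x, y))  # projection along Z onto the XY plane


def checker(u, v):
    """Toy procedural texture: 1.0 or 0.0 in a unit checkerboard."""
    return float((math.floor(u) + math.floor(v)) % 2)


# A floor point (normal straight up, Z-up) only uses the top-down XY projection.
print(triplanar_sample((1.5, 0.5, 0.0), (0.0, 0.0, 1.0), checker))
```

No UVs are needed, but three fetches per texture per pixel, plus the blend, is why such shaders get expensive when used everywhere in a complex level.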

I’ll have to dig more about their workflow.

While vertex color is not officially supported yet for Nanite import, I’ll try exploring some tutorials for simple object shapes like rocks.

Vertex paint only paints color on an object that already has a material.

We need vertex PBR textures, not just a vertex color map to tint a material, even though that works for many uses.

Another way is multiple sub-objects, each with its own PBR material, so you vertex-color paint each sub-object.

Vertex color painting loses the ability to paint roughness, metallic and illumination variations on the same surface, like you do in Substance Painter or Mixer, for example.

Again, I don’t know of any app that does vertex PBR painting.

The workflow I tried is simple: automatic retopology tools with automatic normal map baking in a few clicks, then painting the model’s textures.

Nanite performance is amazing: you don’t need LODs, and you can use models that won’t show obvious retopology edges in game.