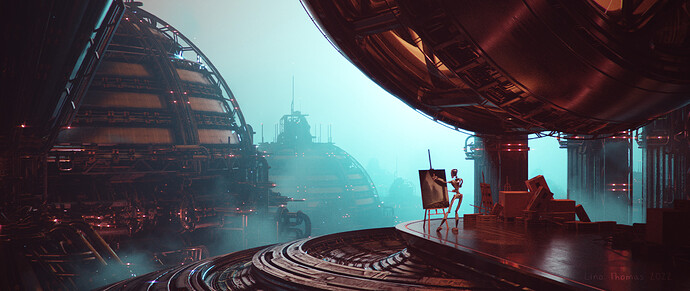

Artstation link… as usual: https://www.artstation.com/artwork/lR2mqa

Why did I ask this question?

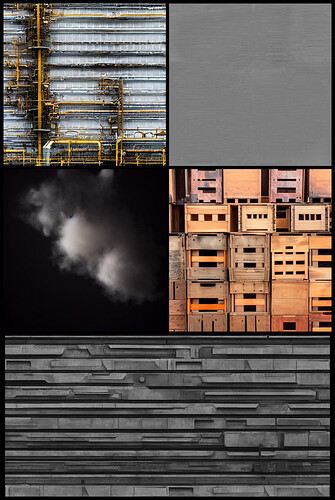

I toyed around with Stable Diffusion, an AI image-generating tool, and discovered that it actually creates pretty decent compositions. Let's tap into that resource!

As the AI is learning a lot from existing art, I decided to learn from the AI as well.

I found a nice piece of concept art made by this tool (on lexica.art) and wanted to rebuild it in 3D.

Because that would probably be a bit too simple and I wanted a new challenge, I decided that all textures must be created by Stable Diffusion.

With this limitation I dug deeper into the tool and discovered some ways of getting usable textures out of it (use the prompt “a photograph of a … wall” to get almost orthographic images of something).

The images I got out of it were pretty versatile. From noisy surfaces to FX and grunge maps, it seems to be possible to get usable images from it. Pretty nice, as they are also not bound to a license and free to use.

Minor work was then put into making the images tileable in GIMP.
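
For anyone curious what "tileable" means in practice, here is a minimal sketch of one simple way to get there: mirror tiling. It is not what GIMP's tools do and not necessarily how the textures here were processed. `mirror_tile` and `is_seamless` are made-up helper names, and the image is assumed to be a plain list of pixel rows rather than a real image file.

```python
def mirror_tile(pixels):
    """Return a grid twice as wide and tall, built from mirrored copies.

    `pixels` is a list of rows; each row is a list of pixel values
    (grayscale numbers or RGB tuples). Hypothetical helper, not a GIMP API.
    """
    # Append a horizontally flipped copy to each row: [A | flip(A)]
    top = [row + row[::-1] for row in pixels]
    # Append a vertically flipped copy of that block underneath it
    return top + [row[:] for row in reversed(top)]


def is_seamless(tile):
    """True if opposite edges match, so repeating the tile shows no jump."""
    left_right = all(row[0] == row[-1] for row in tile)
    top_bottom = tile[0] == tile[-1]
    return left_right and top_bottom


if __name__ == "__main__":
    # Tiny stand-in for a texture: 2 rows of 3 grayscale values
    texture = [
        [1, 2, 3],
        [4, 5, 6],
    ]
    for row in mirror_tile(texture):
        print(row)
```

The trade-off is that mirroring creates visible symmetry on textures with strong features, so it suits noise and grunge maps better than anything with a clear pattern.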

Anyways, the 3D part was done with Blender following my usual greeble workflow.

The foreground robot was rigged with Rigify.

Rendered with Cycles, 1k samples in about 2 minutes on an RTX 3060.

Post processing in the Blender compositor.

All textures were made with AI:

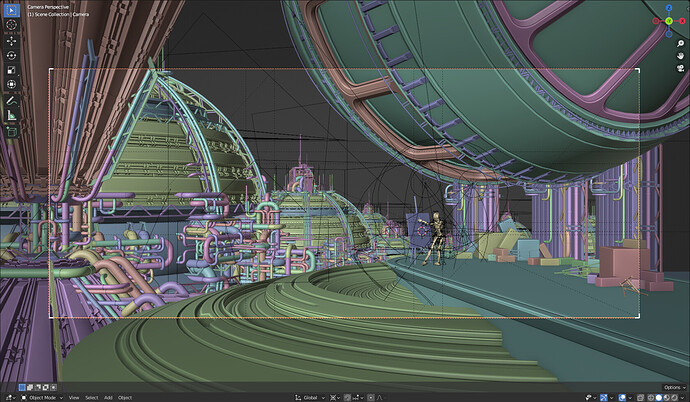

Viewport shot:

And all the greeble bits used for creating this environment.