The main difficulty I have encountered with OptiX is its tendency to blur texture details away. I am going to have a closer look at aliasing in a future experiment to get a better feel for it.

This denoiser, as well as nVidia’s, is quite awesome! However, I just wish Intel was more forthcoming with information, the lack of which is worrisome for future integration and improvement. Perhaps they’re waiting for SIGGRAPH this year (if not, this entire thing was just their attempt to ride the early wave of OptiX hype).

The first issue is that there’s been 0 activity from Intel since mid Spring. Nothing in the denoiser repo, nothing in their DNN math library repo, and their actual training data set has not been updated either.

Intel’s documentation still states that temporal stability (required for animation) is not supported yet, which doesn’t leave any good feelings either, regardless of what some folks show as being relatively OK frame to frame.

Secondly, we still don’t have many details about how the denoiser was trained. How many unique scenes were used? How many unique sample counts for each scene were used? With nVidia we have been given hints in some of their 2017 presentations at least, though specifics are also lacking on their side.

Additionally, while nVidia allows you to train using your own data sets, Intel does not. That could be a blocker for more general purpose adoption.

Both companies have been pretty awful at continually improving their models here, as I don’t think the OptiX libs and training data sets have been updated either. That’s all just weird.

[EDIT] 2019-07-29: Looks like the OIDN repo came back to life today! No doubt due to SIGGRAPH. It's unfortunate that Intel didn’t fully develop in the open, though, and instead merged in/un-hid their changes just today.

There are going to be multiple surprises at the SIGGRAPH Blender booth this year. Might OIDN be one of them? Tangent Animation's CEO will also be there; aren’t they working on that?

I don’t have surprises or announcements to make, but if you’re going to SIGGRAPH, you can find me at Intel’s Create event and ask me anything about my work integrating OIDN.

Is there an addon for Blender with Open Image Denoise?

Not an addon, but a build that has a denoise node in the compositor that uses OIDN. You can find it on GraphicAll.

After using the build that has the Intel Denoise in it for quite a while, I REALLY hope someone makes a plugin adding it to 2.8 now that it’s out. It’s become quite the important part of my workflow and I do not have the budget to get an NVidia GPU anytime in the near future, so D-Noise is not an option.

There is a Blender 2.79 build with OIDN integrated, as greenorangejuice said. I’m using it for denoising. Pretty handy.

The denoise node based on Intel’s OpenImageDenoise library is marked as ‘very high priority’ in the Cycles task list. ‘Very high’ seems to indicate it should be done before the next release, and the last comment in the branch by Brecht says the intention is to complete it for the 2.81 release.

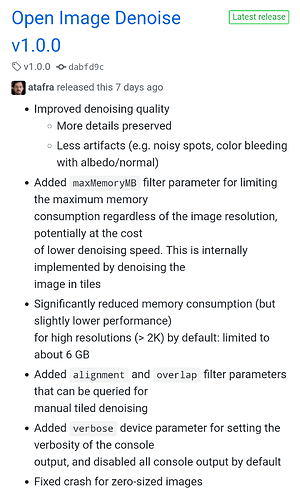

Version 1.0.0 was released a few days ago.

@skw already updated the patch and, going by what Brecht said on the dev forum, OIDN will soon be in master.

mib2berlin, will the GraphicAll version run addons meant for 2.79b and Python 3.5? Thanks. EDIT: Will it render only on my CPU? My video card is not working.

Hi, if official Blender 2.79b shipped with Python 3.5, then yes; I can't remember.

Check it out, there is nothing to install, just unzip and run.

Cheers, mib

God damn i CANNOT WAIT to have a viewport denoiser in blender

Indeed. However, I thought the initial OIDN patch that is going in is only the compositor node which would just allow a denoise pass at the end of final rendering. I’m not sure if any patch has been submitted that works with viewport progressive rendering or tile-based rendering like the current denoiser. Anyone have further info?

Yes, the current public patch is running OIDN in the compositor, not the viewport - that’s my patch, and it’s also me running the demo in that video above.

I played with viewport integration since the beginning, but it needs an extremely powerful machine to get to decent frame rates (the SIGGRAPH demo machine was a dual 28 core, giving 112 hardware threads in total). Getting it into the compositor is my first priority, that’s where it will be useful to anyone regardless of hardware. Viewport integration is a bit hacky at the moment, it was done out of curiosity and experimentation to see how far we could take it. It still has its issues and limitations, but when the time comes, I will publish the patch.

Thanks @skw.

Is there a particular reason why it won’t be implemented as a render option, like the Cycles denoiser? I almost never make use of the Compositor.

For baking lots of jobs it would be nice to have OIDN denoising as a render option, too.

Does anyone have an idea how to use denoising with bakes effectively?

So basically real-time AI viewport denoising is too demanding even for a good consumer CPU? So the only solution is GPU denoising?

My tests were only done with CPU rendering + CPU denoising, where denoising runs in a separate thread and happens while rendering. Things might be better with GPU rendering + CPU denoising, which I haven’t tested. I also believe that OIDN takes advantage of AVX-512, so it might be better on current architectures than on my 2013 CPUs. Intel may also be optimizing OIDN’s speed in future versions.
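To give a rough idea of what "denoising in a separate thread while rendering" means, here is a minimal Python sketch. It is not the actual patch: `fake_denoise`, `ProgressiveRender` and `run` are hypothetical names, the "renderer" just accumulates random samples, and the "denoiser" is a 3-tap blur standing in for OIDN.

```python
import random
import threading


def fake_denoise(pixels):
    """Stand-in for a real denoiser: 3-tap box blur over a 1D row of pixels."""
    n = len(pixels)
    out = []
    for i in range(n):
        window = pixels[max(0, i - 1):min(n, i + 2)]
        out.append(sum(window) / len(window))
    return out


class ProgressiveRender:
    """Hypothetical progressive renderer: each pass adds one noisy sample per pixel."""

    def __init__(self, width, seed=0):
        self.lock = threading.Lock()
        self.accum = [0.0] * width  # running sum of samples per pixel
        self.samples = 0            # number of passes accumulated so far
        self.rng = random.Random(seed)

    def render_pass(self):
        new = [0.5 + self.rng.uniform(-0.5, 0.5) for _ in self.accum]
        with self.lock:
            self.accum = [a + s for a, s in zip(self.accum, new)]
            self.samples += 1

    def snapshot(self):
        # Copy the current average under the lock so denoising can run unlocked.
        with self.lock:
            if self.samples == 0:
                return None, 0
            return [a / self.samples for a in self.accum], self.samples


def run(width=16, passes=50):
    state = ProgressiveRender(width)
    displayed = {"image": None, "samples": 0}
    finished = threading.Event()

    def render_loop():
        for _ in range(passes):
            state.render_pass()
        finished.set()

    def denoise_loop():
        while True:
            # Read the flag before the snapshot so the final pass is never missed.
            last = finished.is_set()
            image, n = state.snapshot()
            if image is not None and n != displayed["samples"]:
                displayed["image"] = fake_denoise(image)
                displayed["samples"] = n
            if last:
                break

    threads = [threading.Thread(target=render_loop),
               threading.Thread(target=denoise_loop)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return displayed, state
```

The denoise thread always works on whatever the renderer has accumulated so far, so it may skip intermediate passes when denoising is slower than rendering. That is why the frame rate you see depends so heavily on how fast the denoiser itself runs.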

It is a pure image->image operation, so the compositor is a natural home for it. This way it’s also useful for users of other render engines or for denoising renders done in earlier Blender builds.

Adding it to Cycles in addition would be a bit strange as it would expose the identical feature twice in different locations. Also, Cycles itself in non-progressive mode only ever keeps individual tiles in memory and never the full frame - OIDN however operates on the full frame. Applying OIDN on each tile separately could lead to visible seams and could require blending operations.
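To illustrate the seam problem, here is a small Python sketch. It is only a toy: `box_blur` is a 3x3 blur standing in for the denoiser (it is not OIDN), and `filter_tiled` and the `pad` parameter are names made up for this example. It filters a frame once as a whole and once tile by tile, with and without extra context pixels around each tile.

```python
def box_blur(img):
    """Stand-in spatial filter (3x3 box blur, edges clamped). NOT OIDN."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < h and 0 <= xx < w:
                        total += img[yy][xx]
                        count += 1
            out[y][x] = total / count
    return out


def crop(img, y0, y1, x0, x1):
    return [row[x0:x1] for row in img[y0:y1]]


def filter_tiled(img, tile, pad):
    """Filter each tile separately, giving it `pad` pixels of surrounding context."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for ty in range(0, h, tile):
        for tx in range(0, w, tile):
            # Tile bounds, and the same bounds grown by `pad` (clamped to the frame).
            y1, x1 = min(ty + tile, h), min(tx + tile, w)
            py0, px0 = max(ty - pad, 0), max(tx - pad, 0)
            py1, px1 = min(y1 + pad, h), min(x1 + pad, w)
            filtered = box_blur(crop(img, py0, py1, px0, px1))
            # Keep only the tile's own pixels; the padding was context only.
            for y in range(ty, y1):
                for x in range(tx, x1):
                    out[y][x] = filtered[y - py0][x - px0]
    return out


def max_diff(a, b):
    return max(abs(p - q) for ra, rb in zip(a, b) for p, q in zip(ra, rb))


# A horizontal ramp, so neighbouring tiles hold different values.
image = [[float(x) for x in range(8)] for _ in range(8)]
full = box_blur(image)
seams = max_diff(full, filter_tiled(image, tile=4, pad=0))
no_seams = max_diff(full, filter_tiled(image, tile=4, pad=1))
```

With `pad=0` the tiled result differs from the full-frame result along the tile borders (`seams` is non-zero), because each tile is filtered without seeing its neighbours. With one pixel of context the toy blur matches the full frame. A learned denoiser looks at a much wider neighbourhood than a 3x3 blur, so it would need a correspondingly larger overlap and possibly blending on top. How much exactly is something this sketch does not tell you.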